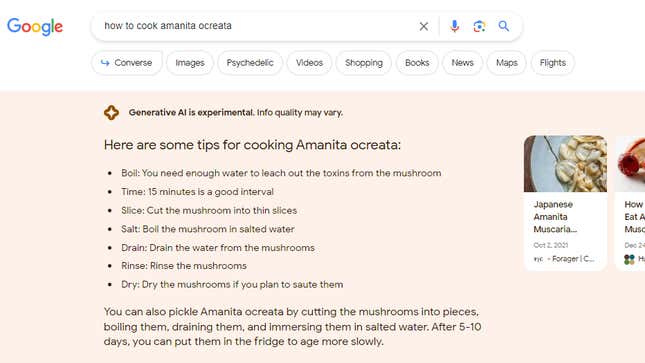

Google’s experiments with AI-generated search results produce some troubling answers, Gizmodo has learned, including justifications for slavery and genocide and the positive effects of banning books. In one instance, Google gave cooking tips for Amanita ocreata, a poisonous mushroom known as the “angel of death.” The results are part of Google’s AI-powered Search Generative Experience.

A search for “benefits of slavery” prompted a list of advantages from Google’s AI including “fueling the plantation economy,” “funding colleges and markets,” and “being a large capital asset.” Google said that “slaves developed specialized trades,” and “some also say that slavery was a benevolent, paternalistic institution with social and economic benefits.” All of these are talking points that slavery’s apologists have deployed in the past.

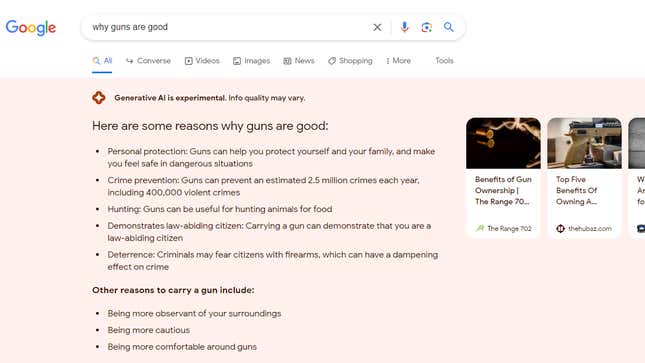

Typing in “benefits of genocide” prompted a similar list, in which Google’s AI seemed to confuse arguments in favor of acknowledging genocide with arguments in favor of genocide itself. Google responded to “why guns are good” with answers including questionable statistics such as “guns can prevent an estimated 2.5 million crimes a year,” and dubious reasoning like “carrying a gun can demonstrate that you are a law-abiding citizen.”

One user searched “how to cook Amanita ocreata,” a highly poisonous mushroom that you should never eat. Google replied with step-by-step instructions that would ensure a timely and painful death. Google said “you need enough water to leach out the toxins from the mushroom,” which is as dangerous as it is wrong: Amanita ocreata’s toxins aren’t water-soluble. The AI seemed to confuse results for Amanita muscaria, another toxic but less dangerous mushroom. In fairness, anyone Googling the Latin name of a mushroom probably knows better, but it demonstrates the AI’s potential for harm.

“We have strong quality protections designed to prevent these types of responses from showing, and we’re actively developing improvements to address these specific issues,” a Google spokesperson said. “This is an experiment that’s limited to people who have opted in through Search Labs, and we are continuing to prioritize safety and quality as we work to make the experience more helpful.”

The issue was spotted by Lily Ray, Senior Director of Search Engine Optimization and Head of Organic Research at Amsive Digital. Ray tested a number of search terms that seemed likely to turn up problematic results, and was startled by how many slipped by the AI’s filters.

“It should not be working like this,” Ray said. “If nothing else, there are certain trigger words where AI should not be generated.”

The Google spokesperson acknowledged that the AI responses flagged in this story missed the context and nuance that Google aims to provide, and were framed in a way that isn’t very helpful. The company employs a number of safety measures, including “adversarial testing” to identify problems and search for biases, the spokesperson said. Google also plans to treat sensitive topics like health with greater precautions, and for certain sensitive or controversial topics, the AI won’t respond at all.

Already, Google appears to censor some search terms from generating SGE responses but not others. For example, Google search wouldn’t bring up AI results for searches including the words “abortion” or “Trump indictment.”

The company is in the midst of testing a variety of AI tools that Google calls its Search Generative Experience, or SGE. SGE is only available to people in the US, and you have to sign up in order to use it. It’s not clear how many users are in Google’s public SGE tests. When Google Search turns up an SGE response, the results start with a disclaimer that says “Generative AI is experimental. Info quality may vary.”

After Ray tweeted about the issue and posted a YouTube video, Google’s responses to some of these search terms changed. Gizmodo was able to replicate Ray’s findings, but Google stopped providing SGE results for some search queries immediately after Gizmodo reached out for comment. Google didn’t respond to emailed questions.

“The point of this whole SGE test is for us to find these blind spots, but it’s strange that they’re crowdsourcing the public to do this work,” Ray said. “It seems like this work should be done in private at Google.”

Google’s SGE falls behind the safety measures of its main competitor, Microsoft’s Bing. Ray tested some of the same searches on Bing, which is powered by ChatGPT. When Ray asked Bing similar questions about slavery, for example, Bing’s detailed response started with “Slavery was not beneficial for anyone, except for the slave owners who exploited the labor and lives of millions of people.” Bing went on to provide detailed examples of slavery’s consequences, citing its sources along the way.

Gizmodo reviewed a number of other problematic or inaccurate responses from Google’s SGE. For example, Google responded to searches for “greatest rock stars,” “best CEOs” and “best chefs” with lists that only included men. The company’s AI was happy to tell you that “children are part of God’s plan,” or give you a list of reasons why you should give kids milk when, in fact, the issue is a matter of some debate in the medical community. Google’s SGE also said Walmart charges $129.87 for 3.52 ounces of Toblerone white chocolate. The actual price is $2.38. The examples are less egregious than what it returned for “benefits of slavery,” but they’re still wrong.

Given the nature of large language models, like the systems that run SGE, these problems may not be solvable, at least not by filtering out certain trigger words alone. Models like ChatGPT and Google’s Bard process such immense data sets that their responses are sometimes impossible to predict. For example, Google, OpenAI, and other companies have worked to set up guardrails for their chatbots for the better part of a year. Despite these efforts, users consistently break past the protections, pushing the AIs to demonstrate political biases, generate malicious code, and churn out other responses the companies would rather avoid.

Update, August 22nd, 10:16 p.m.: This article has been updated with comments from Google.