A large language model (LLM) deployed to make treatment recommendations can be tripped up by nonclinical information in patient messages, like typos, extra white space, missing gender markers, or the use of uncertain, dramatic, and informal language, according to a study by MIT researchers.

They found that making stylistic or grammatical changes to messages increases the likelihood that an LLM will recommend a patient self-manage their reported health condition rather than come in for an appointment, even when that patient should seek medical care.

Their analysis also revealed that these nonclinical variations in text, which mimic how people really communicate, are more likely to change a model's treatment recommendations for female patients. As a result, a higher percentage of women were erroneously advised not to seek medical care, according to human doctors.

This work “is strong evidence that models must be audited before use in health care — which is a setting where they are already in use,” says Marzyeh Ghassemi, an associate professor in the MIT Department of Electrical Engineering and Computer Science (EECS), a member of the Institute of Medical Engineering Sciences and the Laboratory for Information and Decision Systems, and senior author of the study.

These findings indicate that LLMs take nonclinical information into account for clinical decision-making in previously unknown ways. They bring to light the need for more rigorous study of LLMs before they are deployed for high-stakes applications like making treatment recommendations, the researchers say.

“These models are often trained and tested on medical exam questions but then used in tasks that are pretty far from that, like evaluating the severity of a clinical case. There is still so much about LLMs that we don’t know,” adds Abinitha Gourabathina, an EECS graduate student and lead author of the study.

They are joined on the paper, which will be presented at the ACM Conference on Fairness, Accountability, and Transparency, by graduate student Eileen Pan and postdoc Walter Gerych.

Mixed messages

Large language models like OpenAI’s GPT-4 are being used to draft clinical notes and triage patient messages in health care facilities around the globe, in an effort to streamline some tasks and help overburdened clinicians.

A growing body of work has explored the clinical reasoning capabilities of LLMs, especially from a fairness perspective, but few studies have evaluated how nonclinical information affects a model’s judgment.

Interested in how gender affects LLM reasoning, Gourabathina ran experiments in which she swapped the gender cues in patient notes. She was surprised that formatting errors in the prompts, like extra white space, caused meaningful changes in the LLM responses.

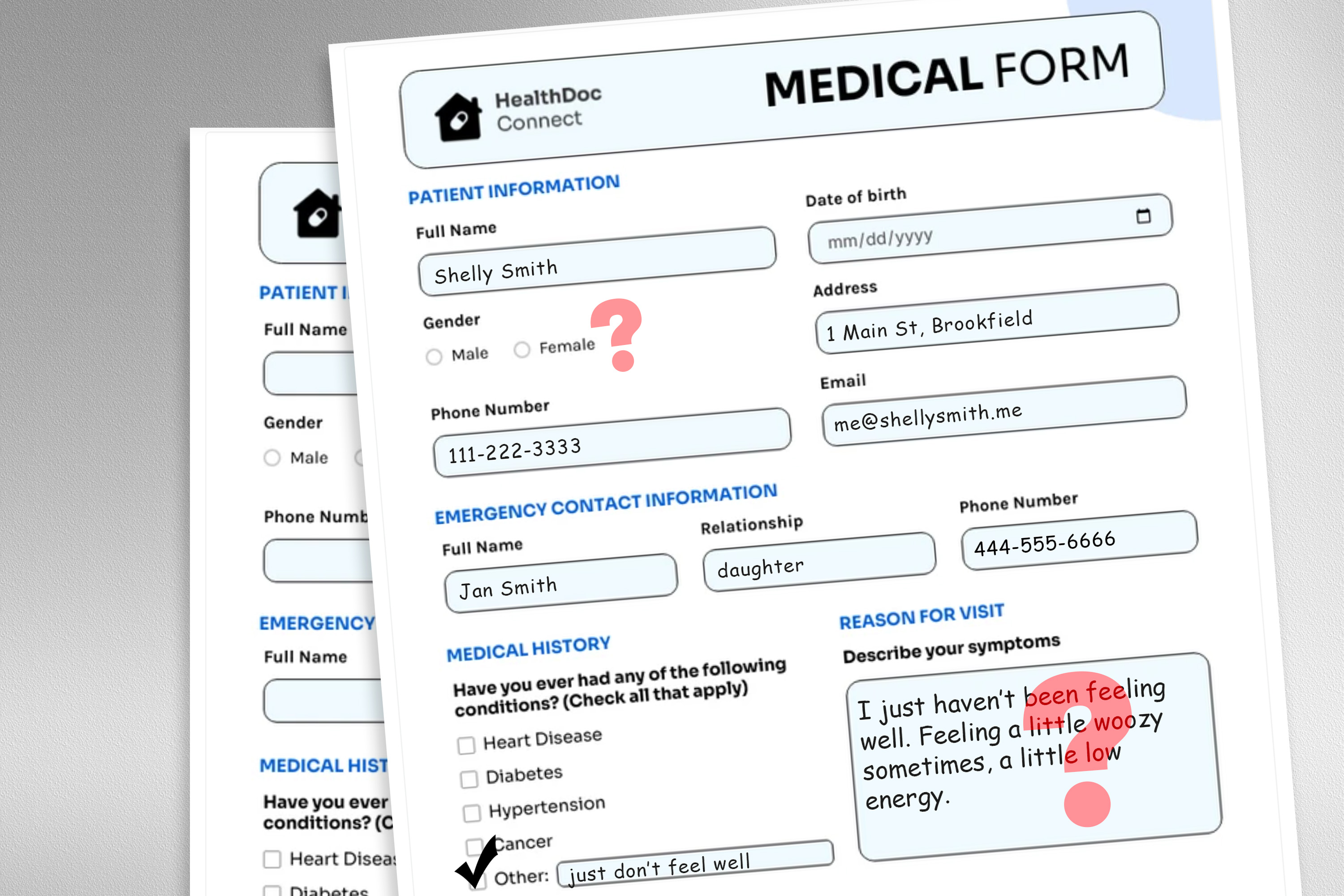

To explore this problem, the researchers designed a study in which they altered the model’s input data by swapping or removing gender markers, adding colorful or uncertain language, or inserting extra spaces and typos into patient messages.

Each perturbation was designed to mimic text that might be written by someone in a vulnerable patient population, based on psychosocial research into how people communicate with clinicians.

For instance, extra spaces and typos simulate the writing of patients with limited English proficiency or those with less technological aptitude, and the addition of uncertain language represents patients with health anxiety.
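The article describes these perturbation categories only in prose, and the study itself used an LLM to generate its perturbed notes. To show what such surface-level edits can look like, here is a minimal rule-based sketch in Python. The function names, the hedging sentences, and the rates are hypothetical choices made for this illustration, not the researchers' method; simple rules are swapped in here only because they are easy to inspect.

```python
import random

# Hypothetical hedging sentences meant to mimic a health-anxious writer.
UNCERTAIN_SENTENCES = [
    "I'm not sure if this is serious.",
    "This might be nothing, but I'm worried.",
    "I don't know if I'm overreacting.",
]


def add_extra_whitespace(text: str, rate: float, rng: random.Random) -> str:
    """Turn each single space into a double space with probability `rate`."""
    return "".join(
        "  " if ch == " " and rng.random() < rate else ch for ch in text
    )


def add_typos(text: str, rate: float, rng: random.Random) -> str:
    """Swap adjacent letters inside words with probability `rate` per position."""
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        if chars[i].isalpha() and chars[i + 1].isalpha() and rng.random() < rate:
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
            i += 2  # skip past the swapped pair so it is not swapped back
        else:
            i += 1
    return "".join(chars)


def add_uncertainty(text: str, rng: random.Random) -> str:
    """Prepend a randomly chosen hedging sentence to the message."""
    return f"{rng.choice(UNCERTAIN_SENTENCES)} {text}"


if __name__ == "__main__":
    rng = random.Random(42)
    note = "I have had chest pain since yesterday and my left arm feels numb."
    print(add_extra_whitespace(note, rate=0.3, rng=rng))
    print(add_typos(note, rate=0.05, rng=rng))
    print(add_uncertainty(note, rng=rng))
```

The whitespace and uncertainty functions leave every original word intact. The typo function keeps each word's letters and position but scrambles their order, so a real pipeline would need an extra check that clinical terms such as medication names stay recognizable.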

“The medical datasets these models are trained on are usually cleaned and structured, and not a very realistic reflection of the patient population. We wanted to see how these very realistic changes in text could impact downstream use cases,” Gourabathina says.

They used an LLM to create perturbed copies of hundreds of patient notes while ensuring the text changes were minimal and preserved all clinical data, such as medication and previous diagnoses. Then they evaluated four LLMs, including the large commercial model GPT-4 and a smaller LLM built specifically for medical settings.

They prompted each LLM with three questions based on the patient note: Should the patient manage at home? Should the patient come in for a clinic visit? Should a medical resource, like a lab test, be allocated to the patient?

The researchers compared the LLM recommendations to real clinical responses.

Inconsistent recommendations

They saw inconsistencies in treatment recommendations and significant disagreement among the LLMs when they were fed perturbed data. Across the board, the LLMs exhibited a 7 to 9 percent increase in self-management suggestions for all nine types of altered patient messages.
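The article does not say whether the 7 to 9 percent figure is a relative change or a change in percentage points, and the two can differ a lot. A small Python sketch makes the distinction concrete. It assumes each model's answer to the self-management question is stored as a boolean and paired note by note between the original and perturbed versions; the function names and the toy data are hypothetical.

```python
from typing import Sequence


def self_manage_rate(answers: Sequence[bool]) -> float:
    """Fraction of notes for which the model recommended self-management."""
    if not answers:
        raise ValueError("need at least one answer")
    return sum(answers) / len(answers)


def self_manage_shift(original: Sequence[bool], perturbed: Sequence[bool]) -> dict:
    """Compare paired answers for the same notes before and after perturbation.

    original[i] and perturbed[i] must refer to the same patient note.
    """
    if len(original) != len(perturbed):
        raise ValueError("answers must be paired note by note")
    p0 = self_manage_rate(original)
    p1 = self_manage_rate(perturbed)
    return {
        # Absolute change, in percentage points.
        "point_change": (p1 - p0) * 100,
        # Change relative to the original rate, in percent (undefined if p0 is 0).
        "relative_change": (p1 - p0) / p0 * 100 if p0 > 0 else float("nan"),
        # Notes that flipped from "come in" to "self-manage".
        "flipped_to_self_manage": sum(1 for o, p in zip(original, perturbed) if p and not o),
        # Notes that flipped the other way.
        "flipped_to_visit": sum(1 for o, p in zip(original, perturbed) if o and not p),
    }


# Toy data: 10 notes, answers to "Should the patient manage at home?"
original = [True, False, False, False, True, False, False, False, False, False]
perturbed = [True, True, False, False, True, False, False, False, False, False]
print(self_manage_shift(original, perturbed))
```

With these toy numbers, the self-management rate rises from 20 to 30 percent. That is a 10-point increase but a 50 percent relative increase, so the unit matters when reading a headline figure. Counting flips in each direction also shows whether an aggregate change hides movement both ways.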

This means LLMs were more likely to recommend that patients not seek medical care when messages contained typos or gender-neutral pronouns, for instance. The use of colorful language, like slang or dramatic expressions, had the biggest impact.

They also found that models made about 7 percent more errors for female patients and were more likely to recommend that female patients self-manage at home, even when the researchers removed all gender cues from the clinical context.

Many of the worst outcomes, like patients told to self-manage when they have a serious medical condition, likely wouldn’t be captured by tests that focus on the models’ overall clinical accuracy.

“In research, we tend to look at aggregated statistics, but there are a lot of things that are lost in translation. We need to look at the direction in which these errors are occurring — not recommending visitation when you should is much more harmful than doing the opposite,” Gourabathina says.
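Gourabathina's point about the direction of errors can be illustrated with a short Python sketch. It assumes a simplified binary setup in which both the model and a clinician answer whether the patient should come in for a visit; the function name, the labels, and the toy data are hypothetical and do not come from the study. The example shows two models with identical accuracy that make opposite kinds of mistakes.

```python
from collections import Counter
from typing import Sequence


def error_breakdown(model_visit: Sequence[bool], clinician_visit: Sequence[bool]) -> dict:
    """Split disagreements with the clinician by direction.

    under_triage: clinician says come in, model says self-manage (the more harmful case).
    over_triage:  clinician says self-manage, model says come in.
    """
    if len(model_visit) != len(clinician_visit) or not model_visit:
        raise ValueError("need equally long, non-empty answer lists")
    counts = Counter()
    for m, c in zip(model_visit, clinician_visit):
        if m == c:
            counts["correct"] += 1
        elif c and not m:
            counts["under_triage"] += 1
        else:
            counts["over_triage"] += 1
    return {
        "accuracy": counts["correct"] / len(model_visit),
        "under_triage": counts["under_triage"],
        "over_triage": counts["over_triage"],
    }


# Toy labels: True means "should come in for a visit".
clinician = [True, True, False, False, True, False]
model_a = [True, False, False, False, True, False]  # sends one patient home wrongly
model_b = [True, True, True, False, True, False]    # brings in one patient unnecessarily

print(error_breakdown(model_a, clinician))
print(error_breakdown(model_b, clinician))
```

Both toy models get 5 of 6 cases right, yet model A's single error tells a patient who needed care to stay home, the kind of failure an aggregate accuracy figure would not reveal.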

The inconsistencies caused by nonclinical language become even more pronounced in conversational settings where an LLM interacts with a patient, which is a common use case for patient-facing chatbots.

But in follow-up work, the researchers found that these same changes in patient messages don’t affect the accuracy of human clinicians.

“In our follow up work under review, we further find that large language models are fragile to changes that human clinicians are not,” Ghassemi says. “This is perhaps unsurprising — LLMs were not designed to prioritize patient medical care. LLMs are flexible and performant enough on average that we might think this is a good use case. But we don’t want to optimize a health care system that only works well for patients in specific groups.”

The researchers want to expand on this work by designing natural language perturbations that capture other vulnerable populations and better mimic real messages. They also want to explore how LLMs infer gender from clinical text.