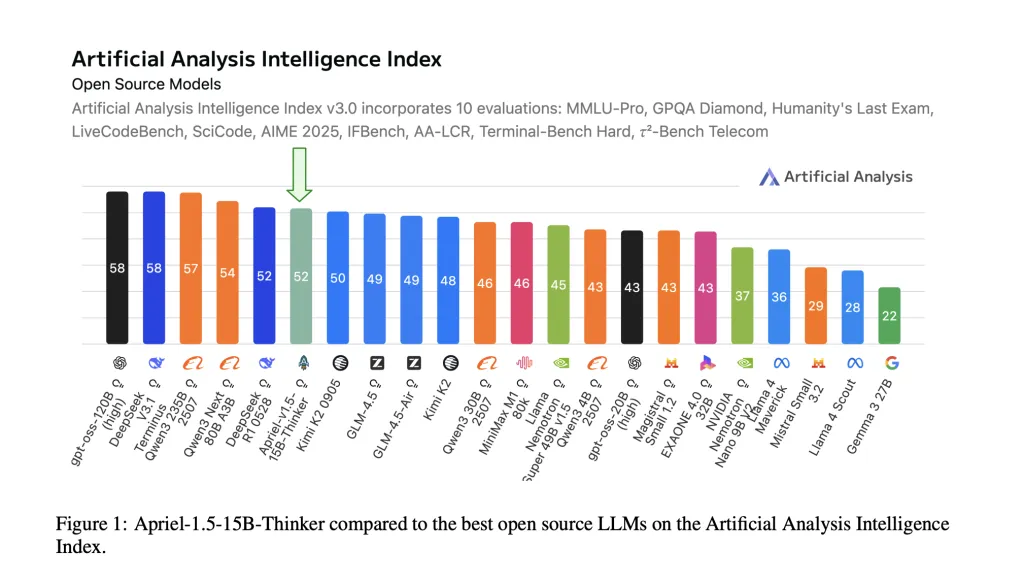

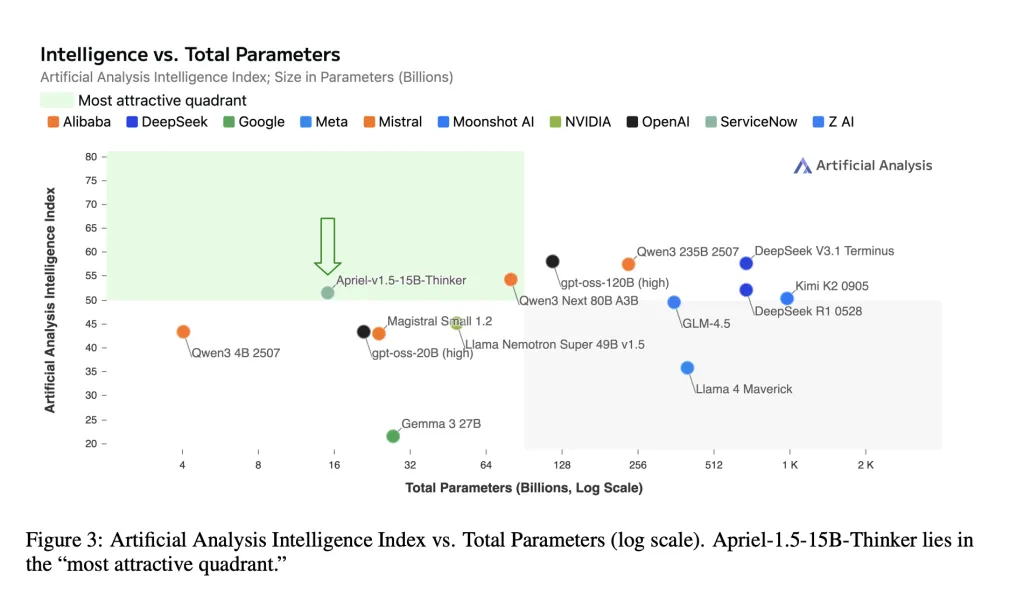

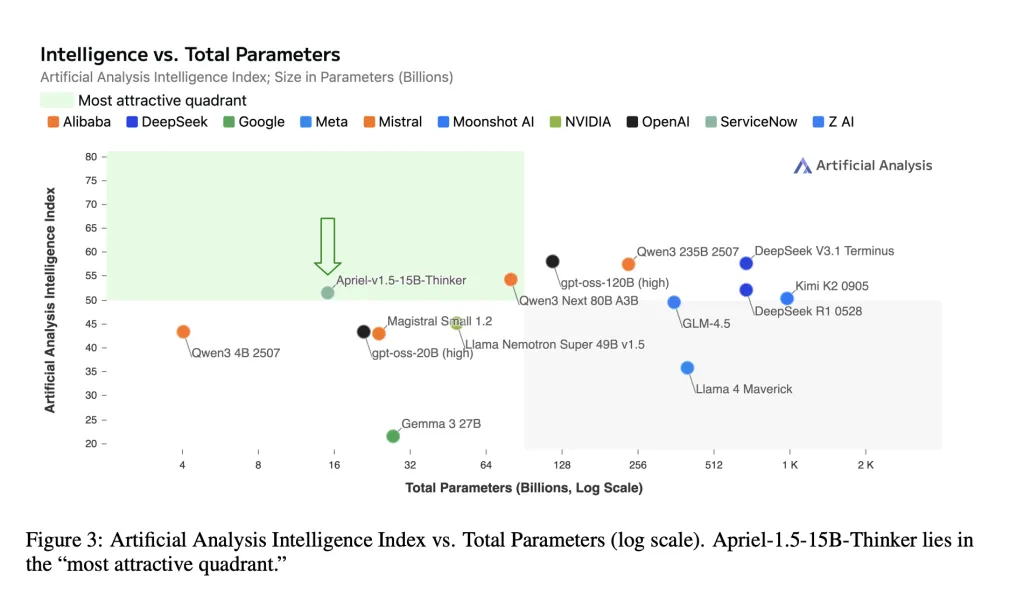

ServiceNow AI Research Lab has released Apriel-1.5-15B-Thinker, a 15-billion-parameter open-weights multimodal reasoning model trained with a data-centric mid-training recipe (continual pretraining followed by supervised fine-tuning), without reinforcement learning or preference optimization. The model attains an Artificial Analysis Intelligence Index score of 52 with a reported 8x cost savings compared with SOTA. The checkpoint ships under an MIT license on Hugging Face.

So, what's new in it for me?

- Frontier-level composite score at small scale. The model reports an Artificial Analysis Intelligence Index (AAI) of 52, matching DeepSeek-R1-0528 on that combined metric while being dramatically smaller. AAI aggregates 10 third-party evaluations (MMLU-Pro, GPQA Diamond, Humanity’s Last Exam, LiveCodeBench, SciCode, AIME 2025, IFBench, AA-LCR, Terminal-Bench Hard, τ²-Bench Telecom).

- Single-GPU deployability. The model card states the 15B checkpoint “fits on a single GPU,” targeting on-premises and air-gapped deployments with fixed memory and latency budgets.

- Open weights and reproducible pipeline. Weights, training recipe, and evaluation protocol are public for independent verification.
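As a rough back-of-the-envelope check on the single-GPU claim, the sketch below estimates the memory taken by the weights alone. It assumes a nominal 15 billion parameters and a few common storage precisions; the function name is hypothetical, and KV cache, activations, and runtime overhead are not included, so real requirements will be higher.

```python
# Rough weight-only memory estimate; a sketch, not an official sizing guide.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: float, dtype: str) -> float:
    """Return weight storage in GiB for a parameter count and storage dtype."""
    return num_params * BYTES_PER_PARAM[dtype] / (1024 ** 3)


if __name__ == "__main__":
    nominal_params = 15e9  # assumption: "15B" taken at face value
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: {weight_memory_gib(nominal_params, dtype):.1f} GiB")
```

At bf16 this comes to roughly 28 GiB for the weights, which is the kind of figure that makes a single high-memory accelerator plausible.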

OK, I got it, but what is its training mechanism?

Base and upscaling. Apriel-1.5-15B-Thinker starts from Mistral’s Pixtral-12B-Base-2409 multimodal decoder-vision stack. The research team applies depth upscaling, increasing decoder layers from 40 to 48, then projection-network realignment to align the vision encoder with the enlarged decoder. This avoids pretraining from scratch while preserving single-GPU deployability.
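To make the idea of depth upscaling concrete, here is a minimal sketch that deepens a 40-layer stack to 48 layers by duplicating a contiguous block of existing layers. The article does not say which layers are duplicated or how, so the choice of block (layers 16 to 23) and the helper name `depth_upscale` are assumptions for illustration only; plain dictionaries stand in for real decoder layers.

```python
import copy
from typing import List, TypeVar

T = TypeVar("T")


def depth_upscale(layers: List[T], start: int, count: int) -> List[T]:
    """Return a deeper stack by duplicating layers[start:start + count].

    Each duplicate is a deep copy placed right after the block it copies,
    so the added layers start from trained weights instead of random init.
    """
    if start < 0 or count < 0 or start + count > len(layers):
        raise ValueError("duplicated block must lie inside the stack")
    end = start + count
    block = [copy.deepcopy(layer) for layer in layers[start:end]]
    return layers[:end] + block + layers[end:]


if __name__ == "__main__":
    # Stand-in "layers": dicts tagged with their original index.
    base = [{"src": i} for i in range(40)]
    # Assumption: duplicate 8 middle layers; the source does not specify which.
    deeper = depth_upscale(base, start=16, count=8)
    print(len(deeper))
    print([layer["src"] for layer in deeper[14:34]])
```

After a step like this, the enlarged stack would still need further training, which is where the continual pretraining stages come in.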

CPT (Continual Pretraining). Two stages: (1) mixed text+image data to build foundational reasoning and document/diagram understanding; (2) targeted synthetic visual tasks (reconstruction, matching, detection, counting) to sharpen spatial and compositional reasoning. Sequence lengths extend to 32k and 16k tokens respectively, with selective loss placement on response tokens for instruction-formatted samples.
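Selective loss placement on response tokens is commonly implemented by masking the prompt positions out of the labels. The sketch below illustrates that general technique in plain Python; it is not the team's training code. The helper names are hypothetical, the `-100` ignore value follows the default `ignore_index` of PyTorch's `CrossEntropyLoss`, and the usual next-token shift is omitted to keep the example short.

```python
import math
from typing import List

IGNORE_INDEX = -100  # matches the default ignore_index of PyTorch's CrossEntropyLoss


def build_labels(token_ids: List[int], prompt_len: int) -> List[int]:
    """Copy token ids as labels, masking the first prompt_len positions."""
    return [IGNORE_INDEX] * prompt_len + list(token_ids[prompt_len:])


def masked_nll(log_probs: List[List[float]], labels: List[int]) -> float:
    """Mean negative log-likelihood over positions whose label is not masked.

    log_probs[t][v] is the log-probability of vocabulary id v at position t.
    """
    kept = [(t, y) for t, y in enumerate(labels) if y != IGNORE_INDEX]
    if not kept:
        return 0.0
    return -sum(log_probs[t][y] for t, y in kept) / len(kept)


if __name__ == "__main__":
    tokens = [1, 0, 1, 1]  # toy sample: 2 prompt tokens, then 2 response tokens
    labels = build_labels(tokens, prompt_len=2)
    uniform = [[math.log(0.5), math.log(0.5)] for _ in tokens]  # 2-word vocab
    print(labels)
    print(masked_nll(uniform, labels))
```

Only the two response positions contribute to the loss here; the prompt tokens still condition the model but generate no gradient signal of their own.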

SFT (Supervised Fine-Tuning). High-quality, reasoning-trace instruction data for math, coding, science, tool use, and instruction following; two additional SFT runs (stratified subset; longer-context) are weight-merged to form the final checkpoint. No RL (reinforcement learning) or RLAIF (reinforcement learning from AI feedback).
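Weight merging can take several forms, and the article does not say which one was used. As one simple possibility, the sketch below averages matching parameters across checkpoints with optional per-checkpoint weights. Both the averaging scheme and the name `merge_checkpoints` are assumptions, and flat lists of floats stand in for real tensors.

```python
from typing import Dict, List, Sequence

StateDict = Dict[str, List[float]]


def merge_checkpoints(checkpoints: Sequence[StateDict],
                      weights: Sequence[float]) -> StateDict:
    """Weighted average of parameters that share the same name and shape."""
    if not checkpoints or len(checkpoints) != len(weights):
        raise ValueError("need one weight per checkpoint")
    names = set(checkpoints[0])
    if any(set(ckpt) != names for ckpt in checkpoints):
        raise ValueError("checkpoints must contain the same parameter names")
    total = float(sum(weights))
    merged: StateDict = {}
    for name in checkpoints[0]:
        tensors = [ckpt[name] for ckpt in checkpoints]
        size = len(tensors[0])
        if any(len(t) != size for t in tensors):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [
            sum(w * t[i] for w, t in zip(weights, tensors)) / total
            for i in range(size)
        ]
    return merged


if __name__ == "__main__":
    # Toy stand-ins for two SFT runs (hypothetical values).
    run_a = {"layer.weight": [1.0, 2.0]}
    run_b = {"layer.weight": [3.0, 4.0]}
    print(merge_checkpoints([run_a, run_b], weights=[1.0, 1.0]))
```

Uniform averaging is the simplest choice; non-uniform weights would let one run dominate if it were judged stronger on held-out evaluations.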

Data note. ~25% of the depth-upscaling text mix derives from NVIDIA’s Nemotron collection.

Wow! Tell me about its results, then.

Key text benchmarks (pass@1 / accuracy).

- AIME 2025 (American Invitational Mathematics Examination 2025): 87.5–88%

- GPQA Diamond (Graduate-Level Google-Proof Question Answering, Diamond split): ≈71%

- IFBench (Instruction-Following Benchmark): ~62

- τ²-Bench (Tau-squared Bench) Telecom: ~68

- LiveCodeBench (functional code correctness): ~72.8

Using VLMEvalKit for reproducibility, Apriel scores competitively across MMMU / MMMU-Pro (Massive Multi-discipline Multimodal Understanding), LogicVista, MathVision, MathVista, MathVerse, MMStar, CharXiv, AI2D, and BLINK, with stronger results on documents/diagrams and text-dominant math imagery.

Let's summarize everything

Apriel-1.5-15B-Thinker demonstrates that careful mid-training (continual pretraining + supervised fine-tuning, no reinforcement learning) can deliver a 52 on the Artificial Analysis Intelligence Index (AAI) while remaining deployable on a single graphics processing unit. Reported task-level scores (for example, AIME 2025 ≈88, GPQA Diamond ≈71, IFBench ≈62, Tau-squared Bench Telecom ≈68) align with the model card and place the 15-billion-parameter checkpoint in the most cost-efficient band of current open-weights reasoners. For enterprises, that combination of open weights, a reproducible recipe, and single-GPU latency makes Apriel a practical baseline to evaluate before considering larger closed systems.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.