What can we learn about human intelligence by studying how machines “think?” Can we better understand ourselves if we better understand the artificial intelligence systems that are becoming a more significant part of our everyday lives?

These questions may be deeply philosophical, but for Phillip Isola, finding the answers is as much about computation as it is about cogitation.

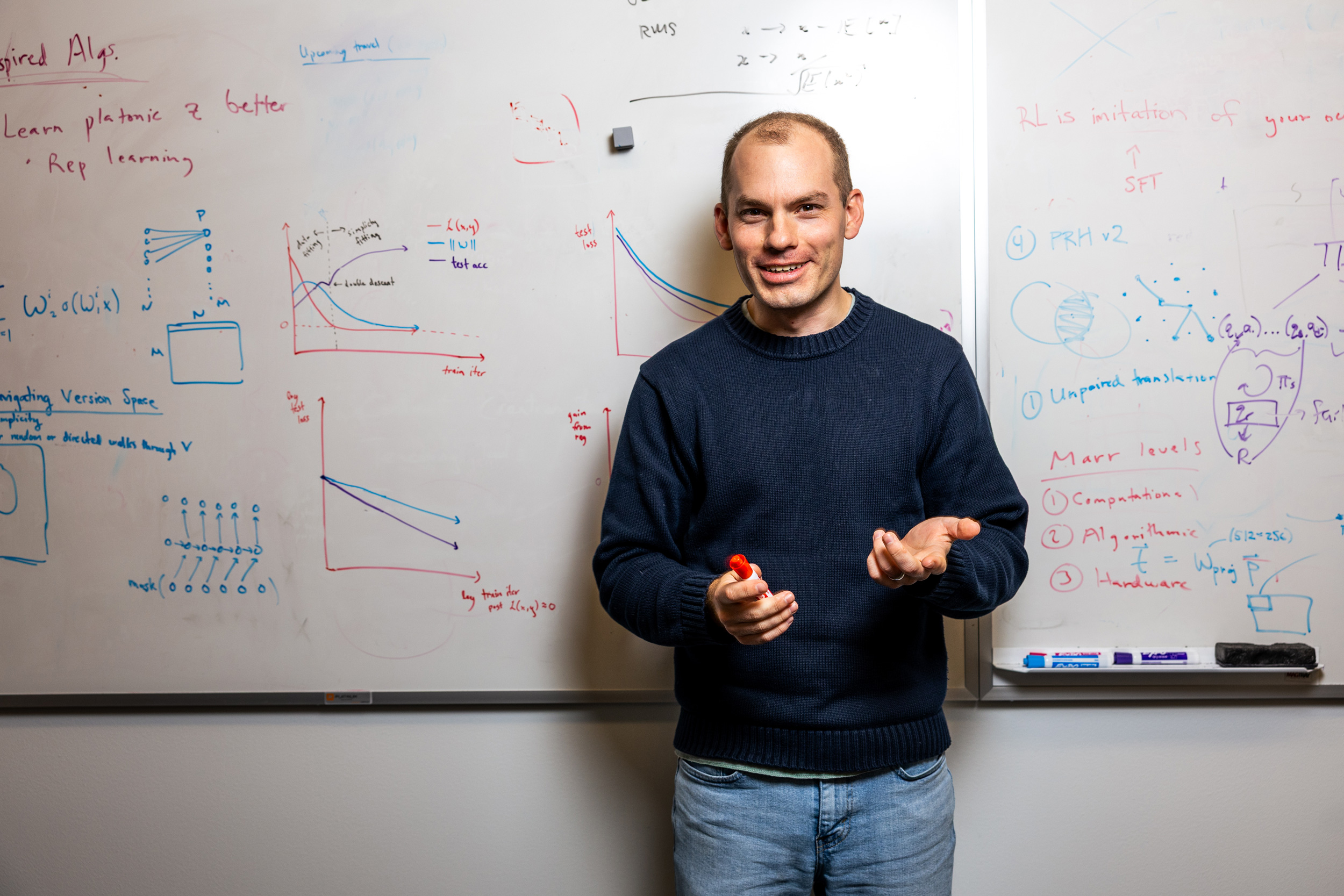

Isola, a newly tenured associate professor in the Department of Electrical Engineering and Computer Science (EECS), studies the fundamental mechanisms involved in human-like intelligence from a computational perspective.

While understanding intelligence is the overarching goal, his work focuses primarily on computer vision and machine learning. Isola is particularly interested in exploring how intelligence emerges in AI models, how these models learn to represent the world around them, and what their “brains” share with the brains of their human creators.

“I see all the different kinds of intelligence as having a lot of commonalities, and I’d like to understand those commonalities. What is it that all animals, humans, and AIs have in common?” says Isola, who is also a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL).

To Isola, a better scientific understanding of the intelligence that AI agents possess will help the world integrate them safely and effectively into society, maximizing their potential to benefit humanity.

Asking questions

Isola began pondering scientific questions at a young age.

While growing up in San Francisco, he and his father frequently went hiking along the northern California coastline or camping around Point Reyes and in the hills of Marin County.

He was fascinated by geological processes and often wondered what made the natural world work. In school, Isola was driven by an insatiable curiosity, and while he gravitated toward technical subjects like math and science, there was no limit to what he wanted to learn.

Not entirely sure what to study as an undergraduate at Yale University, Isola dabbled until he came across cognitive sciences.

“My earlier interest had been with nature — how the world works. But then I realized that the brain was even more interesting, and more complex than even the formation of the planets. Now, I wanted to know what makes us tick,” he says.

As a first-year student, he started working in the lab of his cognitive sciences professor and soon-to-be mentor, Brian Scholl, a member of the Yale Department of Psychology. He remained in that lab throughout his time as an undergraduate.

After spending a gap year working with some childhood friends at an indie video game company, Isola was ready to dive back into the complex world of the human brain. He enrolled in the graduate program in brain and cognitive sciences at MIT.

“Grad school was where I felt like I finally found my place. I had a lot of great experiences at Yale and in other phases of my life, but when I got to MIT, I realized this was the work I really loved and these are the people who think similarly to me,” he says.

Isola credits his PhD advisor, Ted Adelson, the John and Dorothy Wilson Professor of Vision Science, as a major influence on his future path. He was inspired by Adelson’s focus on understanding fundamental principles, rather than only chasing new engineering benchmarks, which are formalized tests used to measure the performance of a system.

A computational perspective

At MIT, Isola’s research drifted toward computer science and artificial intelligence.

“I still loved all those questions from cognitive sciences, but I felt I could make more progress on some of those questions if I came at it from a purely computational perspective,” he says.

His thesis focused on perceptual grouping, which involves the mechanisms people and machines use to organize discrete parts of an image as a single, coherent object.

If machines can learn perceptual groupings on their own, that could enable AI systems to recognize objects without human intervention. This type of self-supervised learning has applications in areas such as autonomous vehicles, medical imaging, robotics, and automatic language translation.

After graduating from MIT, Isola completed a postdoc at the University of California at Berkeley so he could broaden his perspectives by working in a lab focused solely on computer science.

“That experience helped my work become a lot more impactful because I learned to balance understanding fundamental, abstract principles of intelligence with the pursuit of some more concrete benchmarks,” Isola recalls.

At Berkeley, he developed image-to-image translation frameworks, an early form of generative AI model that could turn a sketch into a photographic image, for instance, or turn a black-and-white photo into a color one.

He entered the academic job market and accepted a faculty position at MIT, but Isola deferred for a year to work at a then-small startup called OpenAI.

“It was a nonprofit, and I liked the idealistic mission at that time. They were really good at reinforcement learning, and I thought that seemed like an important topic to learn more about,” he says.

He enjoyed working in a lab with so much scientific freedom, but after a year Isola was ready to return to MIT and start his own research group.

Studying human-like intelligence

Running a research lab instantly appealed to him.

“I really love the early stage of an idea. I feel like I am a sort of startup incubator where I am constantly able to do new things and learn new things,” he says.

Building on his interest in cognitive sciences and desire to understand the human brain, his group studies the fundamental computations involved in the human-like intelligence that emerges in machines.

One primary focus is representation learning, or the ability of humans and machines to represent and perceive the sensory world around them.

In recent work, he and his collaborators observed that many different types of machine-learning models, from LLMs to computer vision models to audio models, seem to represent the world in similar ways.

These models are designed to do vastly different tasks, but there are many similarities in their architectures. And as they get bigger and are trained on more data, their internal structures become more alike.

This led Isola and his team to introduce the Platonic Representation Hypothesis (drawing its name from the Greek philosopher Plato), which says that the representations all these models learn are converging toward a shared, underlying representation of reality.

“Language, images, sound — all of these are different shadows on the wall from which you can infer that there is some kind of underlying physical process — some kind of causal reality — out there. If you train models on all these different types of data, they should converge on that world model in the end,” Isola says.
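One way to make the idea of models "representing the world in similar ways" concrete is to check whether two models place the same items near one another. The following is a minimal sketch of that kind of comparison, not the method used by Isola's group as described in this article: the function names (`knn_indices`, `mutual_knn_alignment`) and the toy embeddings are hypothetical and chosen only for illustration.

```python
import math


def knn_indices(points, k):
    """For each point, return the set of indices of its k nearest
    neighbors by Euclidean distance, excluding the point itself."""
    neighbors = []
    for i, p in enumerate(points):
        dists = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbors.append({j for _, j in dists[:k]})
    return neighbors


def mutual_knn_alignment(emb_a, emb_b, k):
    """Average fraction of shared k-nearest neighbors between two
    embeddings of the same items. Ranges from 0.0 to 1.0."""
    if len(emb_a) != len(emb_b):
        raise ValueError("both embeddings must cover the same items")
    if not 0 < k < len(emb_a):
        raise ValueError("k must be between 1 and the number of items minus 1")
    nn_a = knn_indices(emb_a, k)
    nn_b = knn_indices(emb_b, k)
    overlaps = [len(a & b) / k for a, b in zip(nn_a, nn_b)]
    return sum(overlaps) / len(overlaps)


# Hypothetical toy data: six items embedded by three imaginary "models".
# emb_b is emb_a stretched and lifted into 2D, so neighborhoods match.
# emb_c interleaves the two clusters, so neighborhoods mostly differ.
emb_a = [(0.0,), (1.0,), (2.0,), (10.0,), (11.0,), (12.0,)]
emb_b = [(0.0, 0.0), (2.0, 0.0), (4.0, 0.0), (20.0, 0.0), (22.0, 0.0), (24.0, 0.0)]
emb_c = [(0.0,), (10.0,), (1.0,), (11.0,), (2.0,), (12.0,)]

print(mutual_knn_alignment(emb_a, emb_b, k=2))
print(mutual_knn_alignment(emb_a, emb_c, k=2))
```

In this sketch, the two embeddings that agree on which items are neighbors score 1.0 even though they have different dimensions and scales, while the scrambled embedding scores lower. A neighborhood-based score like this only depends on relative distances, which is why it can compare models whose internal representations have different sizes.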

A related area his team studies is self-supervised learning. This involves the ways in which AI models learn to group related pixels in an image or words in a sentence without having labeled examples to learn from.

Because data are expensive and labels are limited, using only labeled data to train models could hold back the capabilities of AI systems. With self-supervised learning, the goal is to develop models that can come up with an accurate internal representation of the world on their own.

“If you can come up with a good representation of the world, that should make subsequent problem solving easier,” he explains.

The focus of Isola’s research is more about finding something new and surprising than about building complex systems that can outdo the latest machine-learning benchmarks.

While this approach has yielded much success in uncovering innovative techniques and architectures, it means the work sometimes lacks a concrete end goal, which can lead to challenges.

For instance, keeping a team aligned and the funding flowing can be difficult when the lab is focused on searching for unexpected results, he says.

“In a sense, we are always working in the dark. It is high-risk and high-reward work. Every once in a while, we find some kernel of truth that is new and surprising,” he says.

In addition to pursuing knowledge, Isola is passionate about imparting knowledge to the next generation of scientists and engineers. Among his favorite courses to teach is 6.7960 (Deep Learning), which he and several other MIT faculty members launched four years ago.

The class has seen exponential growth, from 30 students in its initial offering to more than 700 this fall.

And while the popularity of AI means there is no shortage of students, the speed at which the field moves can make it difficult to separate the hype from truly significant advances.

“I tell the students they have to take everything we say in the class with a grain of salt. Maybe in a few years we’ll tell them something different. We are really on the edge of knowledge with this course,” he says.

But Isola also emphasizes to students that, for all the hype surrounding the latest AI models, intelligent machines are far simpler than most people suspect.

“Human ingenuity, creativity, and emotions — many people believe these can never be modeled. That might turn out to be true, but I think intelligence is fairly simple once we understand it,” he says.

Even though his current work focuses on deep-learning models, Isola is still fascinated by the complexity of the human brain and continues to collaborate with researchers who study cognitive sciences.

All the while, he has remained captivated by the beauty of the natural world that inspired his first interest in science.

Although he has less time for hobbies these days, Isola enjoys hiking and backpacking in the mountains or on Cape Cod, snowboarding and kayaking, or finding scenic places to spend time when he travels for scientific conferences.

And while he looks forward to exploring new questions in his lab at MIT, Isola can’t help but contemplate how the role of intelligent machines might change the course of his work.

He believes that artificial general intelligence (AGI), or the point where machines can learn and apply their knowledge as well as humans can, is not that far off.

“I don’t think AIs will just do everything for us and we’ll go and enjoy life at the beach. I think there is going to be this coexistence between smart machines and humans who still have a lot of agency and control. Now, I’m thinking about the interesting questions and applications once that happens. How can I help the world in this post-AGI future? I don’t have any answers yet, but it’s on my mind,” he says.