Screen user interfaces (UIs) and infographics, such as charts, diagrams and tables, play important roles in human communication and human-machine interaction because they facilitate rich and interactive user experiences. UIs and infographics share similar design principles and visual language (e.g., icons and layouts), which offers an opportunity to build a single model that can understand, reason about, and interact with these interfaces. However, because of their complexity and varied presentation formats, infographics and UIs present a unique modeling challenge.

To that end, we introduce “ScreenAI: A Vision-Language Model for UI and Infographics Understanding”. ScreenAI improves upon the PaLI architecture with the flexible patching strategy from pix2struct. We train ScreenAI on a unique mixture of datasets and tasks, including a novel Screen Annotation task that requires the model to identify UI element information (i.e., type, location and description) on a screen. These text annotations provide large language models (LLMs) with screen descriptions, enabling them to automatically generate question-answering (QA), UI navigation, and summarization training datasets at scale. At only 5B parameters, ScreenAI achieves state-of-the-art results on UI- and infographic-based tasks (WebSRC and MoTIF), and best-in-class performance on Chart QA, DocVQA, and InfographicVQA compared to models of similar size. We are also releasing three new datasets: Screen Annotation to evaluate the layout understanding capability of the model, as well as ScreenQA Short and Complex ScreenQA for a more comprehensive evaluation of its QA capability.

ScreenAI

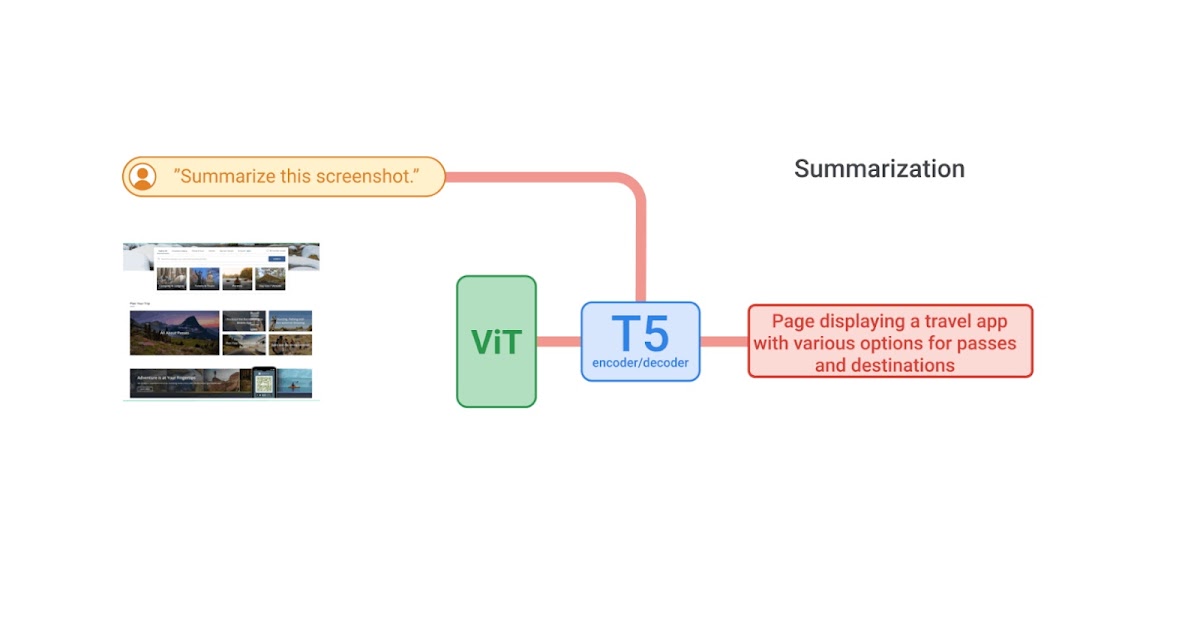

ScreenAI’s architecture is based on PaLI, composed of a multimodal encoder block and an autoregressive decoder. The PaLI encoder uses a vision transformer (ViT) that creates image embeddings and a multimodal encoder that takes the concatenation of the image and text embeddings as input. This flexible architecture allows ScreenAI to solve vision tasks that can be recast as text+image-to-text problems.

On top of the PaLI architecture, we employ a flexible patching strategy introduced in pix2struct. Instead of using a fixed-grid pattern, the grid dimensions are selected such that they preserve the native aspect ratio of the input image. This enables ScreenAI to work well across images of various aspect ratios.
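To make the idea of an aspect-ratio-preserving grid concrete, here is a minimal sketch in Python. It is not ScreenAI's implementation: the function name `flexible_patch_grid` and the default `patch_size` and `max_patches` values are assumptions chosen for illustration. The sketch picks the number of patch rows and columns so that their ratio follows the image's height-to-width ratio while the total patch count stays within a budget.

```python
import math


def flexible_patch_grid(height, width, patch_size=16, max_patches=1024):
    """Return (rows, cols) for a patch grid that follows the image's aspect ratio.

    All names and defaults here are illustrative assumptions, not values
    taken from ScreenAI or pix2struct.
    """
    if height <= 0 or width <= 0:
        raise ValueError("height and width must be positive")

    # Choose a scale so that rows * cols is as close as possible to
    # max_patches without exceeding it.
    scale = math.sqrt(max_patches * (patch_size / height) * (patch_size / width))

    # Round down, and clamp so that every image gets at least one patch
    # per axis and never more than the overall budget.
    rows = max(min(math.floor(scale * height / patch_size), max_patches), 1)
    cols = max(min(math.floor(scale * width / patch_size), max_patches), 1)
    return rows, cols


if __name__ == "__main__":
    # A portrait phone screenshot gets more rows than columns,
    # a landscape desktop screenshot gets more columns than rows.
    print(flexible_patch_grid(1600, 900))
    print(flexible_patch_grid(900, 1600))
```

With a fixed square grid, a tall phone screenshot and a wide desktop screenshot would both be squeezed into the same shape. Here the grid shape changes with the input, so neither image is distorted.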

The ScreenAI model is trained in two stages: a pre-training stage followed by a fine-tuning stage. First, self-supervised learning is applied to automatically generate data labels, which are then used to train ViT and the language model. ViT is frozen during the fine-tuning stage, where most of the data used is manually labeled by human raters.

| ScreenAI model architecture. |

Data generation

To create a pre-training dataset for ScreenAI, we first compile an extensive collection of screenshots from various devices, including desktops, mobile, and tablets. This is achieved by using publicly accessible web pages and following the programmatic exploration approach used for the RICO dataset for mobile apps. We then apply a layout annotator, based on the DETR model, that identifies and labels a wide range of UI elements (e.g., image, pictogram, button, text) and their spatial relationships. Pictograms undergo further analysis using an icon classifier capable of distinguishing 77 different icon types. This detailed classification is essential for interpreting the subtle information conveyed by icons. For icons that are not covered by the classifier, and for infographics and images, we use the PaLI image captioning model to generate descriptive captions that provide contextual information. We also apply an optical character recognition (OCR) engine to extract and annotate text content on screen. We combine the OCR text with the previous annotations to create a detailed description of each screen.

| A mobile app screenshot with generated annotations that include UI elements and their descriptions, e.g., TEXT elements also contain the text content from OCR, IMAGE elements contain image captions, LIST_ITEMs contain all their child elements. |
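As a rough illustration of how the outputs of the layout annotator, icon classifier, captioning model and OCR engine could be merged into one textual screen description, consider the sketch below. The `UIElement` class, the `serialize` function and the output format are hypothetical and invented for this example; the post does not specify the actual schema. Only the element type names (TEXT, PICTOGRAM, LIST_ITEM) come from the text above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class UIElement:
    """One annotated element on a screen (hypothetical structure)."""

    kind: str  # e.g. "TEXT", "IMAGE", "PICTOGRAM", "LIST_ITEM"
    bbox: tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels
    description: str = ""  # OCR text, icon class, or image caption
    children: list[UIElement] = field(default_factory=list)


def serialize(element: UIElement) -> str:
    """Flatten an element and its children into a single text line."""
    x0, y0, x1, y1 = element.bbox
    fields = [element.kind]
    if element.description:
        fields.append(element.description)
    fields.append(f"{x0} {y0} {x1} {y1}")
    text = " ".join(fields)
    if element.children:
        # Container elements such as LIST_ITEM carry their children inline.
        inner = ", ".join(serialize(child) for child in element.children)
        text += f" ({inner})"
    return text


# Usage: a list item holding a search icon and a text label.
item = UIElement(
    "LIST_ITEM",
    (0, 100, 1080, 300),
    children=[
        UIElement("PICTOGRAM", (20, 150, 100, 250), "search"),
        UIElement("TEXT", (120, 150, 900, 250), "Find a restaurant"),
    ],
)
print(serialize(item))
```

A flat text form like this is convenient because it can be used both as a training target for the model and as the screen description that is later pasted into an LLM prompt.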

LLM-based data generation

We enhance the pre-training data’s diversity using PaLM 2 to generate input-output pairs in a two-step process. First, screen annotations are generated using the technique outlined above, then we craft a prompt around this schema for the LLM to create synthetic data. This process requires prompt engineering and iterative refinement to find an effective prompt. We assess the generated data’s quality through human validation against a quality threshold.

You only speak JSON. Do not write text that isn’t JSON.

You are given the following mobile screenshot, described in words. Can you generate 5 questions regarding the content of the screenshot as well as the corresponding short answers to them?

The answer should be as short as possible, containing only the necessary information. Your answer should be structured as follows:

questions: [

{{question: the question,

answer: the answer

}},

...

]

{THE SCREEN SCHEMA}

| A sample prompt for QA data generation. |
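The doubled braces in the prompt above suggest a format-string template, with the screen schema substituted at the end. The sketch below shows one way such a template could be filled and how a JSON reply could be checked before it is kept as training data. It is an illustration only: the names `PROMPT_TEMPLATE`, `build_prompt` and `parse_qa_pairs` are hypothetical, the sketch assumes the LLM replies with a JSON object containing a `questions` list, and the call to the LLM itself is deliberately left out.

```python
import json

# Template mirroring the sample prompt; "{{" and "}}" are literal braces
# under str.format, and {screen_schema} is the only substituted field.
PROMPT_TEMPLATE = (
    "You only speak JSON. Do not write text that isn't JSON.\n"
    "You are given the following mobile screenshot, described in words. "
    "Can you generate 5 questions regarding the content of the screenshot "
    "as well as the corresponding short answers to them?\n"
    "The answer should be as short as possible, containing only the "
    "necessary information. Your answer should be structured as follows:\n"
    "questions: [\n"
    "{{question: the question,\n"
    "answer: the answer\n"
    "}},\n"
    "...\n"
    "]\n"
    "{screen_schema}"
)


def build_prompt(screen_schema: str) -> str:
    """Insert a textual screen description into the prompt template."""
    return PROMPT_TEMPLATE.format(screen_schema=screen_schema)


def parse_qa_pairs(response: str) -> list[tuple[str, str]]:
    """Extract (question, answer) pairs from a reply, dropping malformed items."""
    try:
        payload = json.loads(response)
    except json.JSONDecodeError:
        return []  # The reply was not valid JSON at all.
    items = payload.get("questions") if isinstance(payload, dict) else None
    if not isinstance(items, list):
        return []
    pairs = []
    for item in items:
        if not isinstance(item, dict):
            continue
        question, answer = item.get("question"), item.get("answer")
        if isinstance(question, str) and isinstance(answer, str):
            if question.strip() and answer.strip():
                pairs.append((question.strip(), answer.strip()))
    return pairs
```

Structural checks like these only filter out replies that cannot be used at all. They do not replace the human validation against a quality threshold described above.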

By combining the natural language capabilities of LLMs with a structured schema, we simulate a wide range of user interactions and scenarios to generate synthetic, realistic tasks. In particular, we generate three categories of tasks:

- Question answering: The model is asked to answer questions regarding the content of the screenshots, e.g., “When does the restaurant open?”

- Screen navigation: The model is asked to convert a natural language utterance into an executable action on a screen, e.g., “Click the search button.”

- Screen summarization: The model is asked to summarize the screen content in one or two sentences.

| Block diagram of our workflow for generating data for QA, summarization and navigation tasks using existing ScreenAI models and LLMs. Each task uses a custom prompt to emphasize desired aspects, like questions related to counting, involving reasoning, etc. |

| LLM-generated data. Examples for screen QA, navigation and summarization. For navigation, the action bounding box is displayed in pink on the screenshot. |

Experiments and results

As previously mentioned, ScreenAI is trained in two stages: pre-training and fine-tuning. Pre-training data labels are obtained using self-supervised learning, and fine-tuning data labels come from human raters.

We fine-tune ScreenAI using public QA, summarization, and navigation datasets and a variety of tasks related to UIs. For QA, we use well-established benchmarks in the multimodal and document understanding field, such as ChartQA, DocVQA, Multi page DocVQA, InfographicVQA, OCR VQA, Web SRC and ScreenQA. For navigation, datasets used include Referring Expressions, MoTIF, Mug, and Android in the Wild. Finally, we use Screen2Words for screen summarization and Widget Captioning for describing specific UI elements. Along with the fine-tuning datasets, we evaluate the fine-tuned ScreenAI model using three novel benchmarks:

- Screen Annotation: Enables the evaluation of the model's layout annotation and spatial understanding capabilities.

- ScreenQA Short: A variation of ScreenQA in which the ground truth answers have been shortened to contain only the relevant information, which better aligns with other QA tasks.

- Complex ScreenQA: Complements ScreenQA Short with more difficult questions (counting, arithmetic, comparison, and non-answerable questions) and contains screens with various aspect ratios.

The fine-tuned ScreenAI model achieves state-of-the-art results on various UI and infographic-based tasks (WebSRC and MoTIF) and best-in-class performance on Chart QA, DocVQA, and InfographicVQA compared to models of similar size. ScreenAI achieves competitive performance on Screen2Words and OCR-VQA. Additionally, we report results on the newly introduced benchmark datasets to serve as a baseline for further research.

| Comparing model performance of ScreenAI with state-of-the-art (SOTA) models of similar size. |

Next, we examine ScreenAI’s scaling capabilities and observe that across all tasks, increasing the model size improves performance, and the improvements have not saturated at the largest size.

| Model performance increases with size, and the performance has not saturated even at the largest size of 5B params. |

Conclusion

We introduce the ScreenAI model along with a unified representation that enables us to develop self-supervised learning tasks leveraging data from all these domains. We also illustrate the impact of data generation using LLMs and investigate improving model performance on specific aspects by modifying the training mixture. We apply all of these techniques to build multi-task trained models that perform competitively with state-of-the-art approaches on a number of public benchmarks. However, we also note that our approach still lags behind large models and further research is needed to bridge this gap.

Acknowledgements

This project is the result of joint work with Maria Wang, Fedir Zubach, Hassan Mansoor, Vincent Etter, Victor Carbune, Jason Lin, Jindong Chen and Abhanshu Sharma. We thank Fangyu Liu, Xi Chen, Efi Kokiopoulou, Jesse Berent, Gabriel Barcik, Lukas Zilka, Oriana Riva, Gang Li, Yang Li, Radu Soricut, and Tania Bedrax-Weiss for their insightful feedback and discussions, along with Rahul Aralikatte, Hao Cheng and Daniel Kim for their support in data preparation. We also thank Jay Yagnik, Blaise Aguera y Arcas, Ewa Dominowska, David Petrou, and Matt Sharifi for their leadership, vision and support. We are very grateful to Tom Small for helping us create the animation in this post.