The process of discovering molecules that have the properties needed to create new medicines and materials is cumbersome and expensive, consuming vast computational resources and months of human labor to narrow down the enormous space of potential candidates.

Large language models (LLMs) like ChatGPT could streamline this process, but enabling an LLM to understand and reason about the atoms and bonds that form a molecule, the same way it does with words that form sentences, has presented a scientific stumbling block.

Researchers from MIT and the MIT-IBM Watson AI Lab created a promising approach that augments an LLM with other machine-learning models known as graph-based models, which are specifically designed for generating and predicting molecular structures.

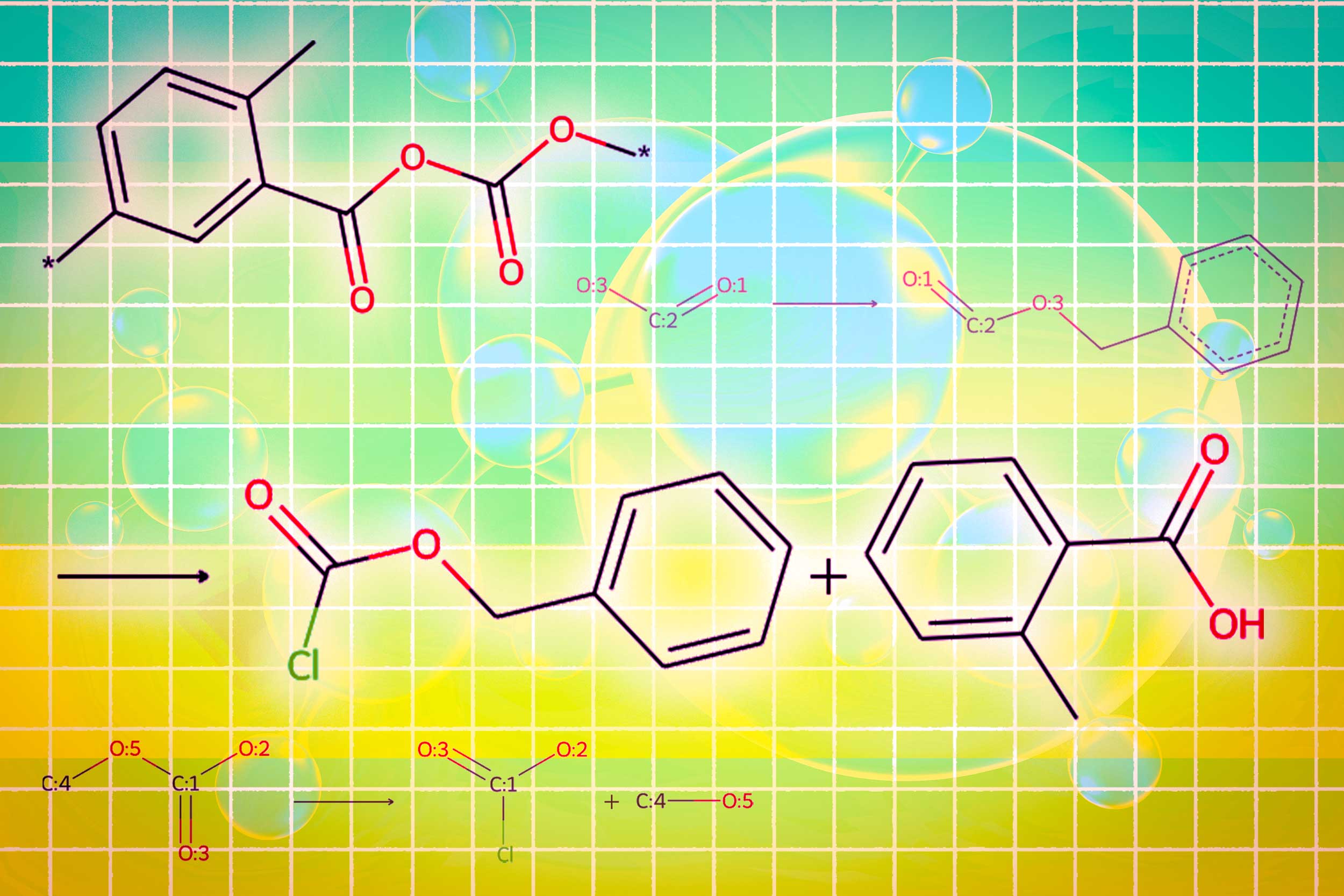

Their method employs a base LLM to interpret natural language queries specifying desired molecular properties. It automatically switches between the base LLM and graph-based AI modules to design the molecule, explain the rationale, and generate a step-by-step plan to synthesize it. It interleaves text, graph, and synthesis step generation, combining words, graphs, and reactions into a common vocabulary for the LLM to consume.

When compared to existing LLM-based approaches, this multimodal technique generated molecules that better matched user specifications and were more likely to have a valid synthesis plan, improving the success ratio from 5 percent to 35 percent.

It also outperformed LLMs that are more than 10 times its size and that design molecules and synthesis routes only with text-based representations, suggesting multimodality is key to the new system’s success.

“This could hopefully be an end-to-end solution where, from start to finish, we would automate the entire process of designing and making a molecule. If an LLM could just give you the answer in a few seconds, it would be a huge time-saver for pharmaceutical companies,” says Michael Sun, an MIT graduate student and co-author of a paper on this technique.

Sun’s co-authors include lead author Gang Liu, a graduate student at the University of Notre Dame; Wojciech Matusik, a professor of electrical engineering and computer science at MIT who leads the Computational Design and Fabrication Group within the Computer Science and Artificial Intelligence Laboratory (CSAIL); Meng Jiang, associate professor at the University of Notre Dame; and senior author Jie Chen, a senior research scientist and manager in the MIT-IBM Watson AI Lab. The research will be presented at the International Conference on Learning Representations.

Best of both worlds

Large language models aren’t built to understand the nuances of chemistry, which is one reason they struggle with inverse molecular design, a process of identifying molecular structures that have certain functions or properties.

LLMs convert text into representations called tokens, which they use to sequentially predict the next word in a sentence. But molecules are “graph structures,” composed of atoms and bonds with no particular ordering, making them difficult to encode as sequential text.

On the other hand, powerful graph-based AI models represent atoms and molecular bonds as interconnected nodes and edges in a graph. While these models are popular for inverse molecular design, they require complex inputs, can’t understand natural language, and yield results that can be difficult to interpret.
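To make the "no particular ordering" point concrete, here is a minimal Python sketch, not taken from the researchers' work. It stores a molecule as a list of atom labels (nodes) and a list of bonds (edges), then checks by brute force whether two differently numbered descriptions are the same graph. The function name `same_molecule` and the toy molecules are illustrative assumptions; hydrogens are omitted, and the brute-force search is only practical for very small graphs.

```python
from itertools import permutations

def same_molecule(atoms_a, bonds_a, atoms_b, bonds_b):
    """Brute-force graph isomorphism check; only sensible for tiny molecules."""
    if len(atoms_a) != len(atoms_b) or len(bonds_a) != len(bonds_b):
        return False
    edges_b = {frozenset(bond) for bond in bonds_b}
    n = len(atoms_a)
    for perm in permutations(range(n)):
        # perm[i] is the atom in B that atom i of A is mapped to.
        if any(atoms_a[i] != atoms_b[perm[i]] for i in range(n)):
            continue  # element labels must match under the mapping
        mapped = {frozenset((perm[i], perm[j])) for i, j in bonds_a}
        if mapped == edges_b:
            return True
    return False

# Ethanol heavy atoms, written in two different atom orders (C-C-O and O-C-C).
ethanol_a = (["C", "C", "O"], [(0, 1), (1, 2)])
ethanol_b = (["O", "C", "C"], [(0, 1), (1, 2)])
# Dimethyl ether: the same atoms with different connectivity (C-O-C).
ether = (["C", "O", "C"], [(0, 1), (1, 2)])

print(same_molecule(*ethanol_a, *ethanol_b))  # True: same graph, different order
print(same_molecule(*ethanol_a, *ether))      # False: different bonds
```

The two ethanol descriptions list their atoms in different orders yet describe one molecule, which is the ambiguity a purely sequential text encoding has to contend with.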

The MIT researchers combined an LLM with graph-based AI models into a unified framework that gets the best of both worlds.

Llamole, which stands for large language model for molecular discovery, uses a base LLM as a gatekeeper to understand a user’s query: a plain-language request for a molecule with certain properties.

For instance, perhaps a user seeks a molecule that can penetrate the blood-brain barrier and inhibit HIV, given that it has a molecular weight of 209 and certain bond characteristics.

As the LLM predicts text in response to the query, it switches between graph modules.

One module uses a graph diffusion model to generate the molecular structure conditioned on input requirements. A second module uses a graph neural network to encode the generated molecular structure back into tokens for the LLMs to consume. The final graph module is a graph reaction predictor which takes as input an intermediate molecular structure and predicts a reaction step, searching for the exact set of steps to make the molecule from basic building blocks.

The researchers created a new type of trigger token that tells the LLM when to activate each module. When the LLM predicts a “design” trigger token, it switches to the module that sketches a molecular structure, and when it predicts a “retro” trigger token, it switches to the retrosynthetic planning module that predicts the next reaction step.

“The beauty of this is that everything the LLM generates before activating a particular module gets fed into that module itself. The module is learning to operate in a way that is consistent with what came before,” Sun says.

In the same manner, the output of each module is encoded and fed back into the generation process of the LLM, so it understands what each module did and will continue predicting tokens based on those data.
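The control flow described here can be illustrated with a toy Python sketch. It is not Llamole's code. The token spellings `<design>` and `<retro>`, the stub modules, and the scripted token list are all assumptions made for illustration, and a real LLM would condition each new token on the growing context rather than follow a fixed script.

```python
from typing import Callable, Dict, List

# Hypothetical trigger-token spellings; the article only calls them "design" and "retro".
DESIGN, RETRO = "<design>", "<retro>"

def design_module(context: List[str]) -> str:
    # Stand-in for the graph diffusion model plus the graph encoder.
    return "[molecule-graph]"

def retro_module(context: List[str]) -> str:
    # Stand-in for the graph reaction predictor.
    return "[reaction-step]"

MODULES: Dict[str, Callable[[List[str]], str]] = {
    DESIGN: design_module,
    RETRO: retro_module,
}

def generate(scripted_tokens: List[str]) -> List[str]:
    """Interleave text tokens with module outputs.

    scripted_tokens stands in for what a real LLM would predict step by step.
    """
    context: List[str] = []
    for token in scripted_tokens:
        context.append(token)
        module = MODULES.get(token)
        if module is not None:
            # The module receives everything generated so far, and its
            # output is appended so later steps can build on it.
            context.append(module(list(context)))
    return context

output = generate(["A", "candidate", "is", DESIGN, "made", "by", RETRO, "."])
print(output)
```

Each stub module is handed a copy of the full context, which mirrors the idea that whatever was generated before a trigger token is passed to the module it activates.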

Better, simpler molecular structures

In the end, Llamole outputs an image of the molecular structure, a textual description of the molecule, and a step-by-step synthesis plan that provides the details of how to make it, down to individual chemical reactions.

In experiments involving designing molecules that matched user specifications, Llamole outperformed 10 standard LLMs, four fine-tuned LLMs, and a state-of-the-art domain-specific method. At the same time, it boosted the retrosynthetic planning success rate from 5 percent to 35 percent by generating molecules that are higher-quality, which means they had simpler structures and lower-cost building blocks.
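For a sense of scale, the short Python sketch below works through the arithmetic behind those two percentages. The helper name `compare_rates` and the figure of 1,000 attempts are illustrative assumptions, not numbers from the study.

```python
def compare_rates(baseline_pct: int, improved_pct: int, trials: int = 1000) -> dict:
    """Compare two success rates given as whole-number percentages."""
    return {
        "gain_points": improved_pct - baseline_pct,           # percentage points
        "fold": improved_pct / baseline_pct,                  # relative improvement
        "baseline_successes": trials * baseline_pct // 100,   # out of `trials`
        "improved_successes": trials * improved_pct // 100,
    }

result = compare_rates(5, 35)
print(result)
```

Under these assumptions, the change is a gain of 30 percentage points, or a sevenfold increase: roughly 350 successful plans per 1,000 hypothetical attempts instead of 50.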

“On their own, LLMs struggle to figure out how to synthesize molecules because it requires a lot of multistep planning. Our method can generate better molecular structures that are also easier to synthesize,” Liu says.

To train and evaluate Llamole, the researchers built two datasets from scratch since existing datasets of molecular structures didn’t contain enough details. They augmented hundreds of thousands of patented molecules with AI-generated natural language descriptions and customized description templates.

The dataset they built to fine-tune the LLM includes templates related to 10 molecular properties, so one limitation of Llamole is that it is trained to design molecules considering only those 10 numerical properties.

In future work, the researchers want to generalize Llamole so it can incorporate any molecular property. In addition, they plan to improve the graph modules to boost Llamole’s retrosynthesis success rate.

And in the long run, they hope to use this approach to go beyond molecules, creating multimodal LLMs that can handle other types of graph-based data, such as interconnected sensors in a power grid or transactions in a financial market.

“Llamole demonstrates the feasibility of using large language models as an interface to complex data beyond textual description, and we anticipate them to be a foundation that interacts with other AI algorithms to solve any graph problems,” says Chen.

This research is funded, in part, by the MIT-IBM Watson AI Lab, the National Science Foundation, and the Office of Naval Research.