Recent progress in generative AI has unlocked the possibility of creating new content in several different domains, including text, vision and audio. These models often rely on the fact that raw data is first converted to a compressed format as a sequence of tokens. In the case of audio, neural audio codecs (e.g., SoundStream or EnCodec) can efficiently compress waveforms to a compact representation, which can be inverted to reconstruct an approximation of the original audio signal. Such a representation consists of a sequence of discrete audio tokens, capturing the local properties of sounds (e.g., phonemes) and their temporal structure (e.g., prosody). By representing audio as a sequence of discrete tokens, audio generation can be performed with Transformer-based sequence-to-sequence models. This has unlocked rapid progress in speech continuation (e.g., with AudioLM), text-to-speech (e.g., with SPEAR-TTS), and general audio and music generation (e.g., AudioGen and MusicLM). Many generative audio models, including AudioLM, rely on auto-regressive decoding, which produces tokens one by one. While this method achieves high acoustic quality, inference (i.e., calculating an output) can be slow, especially when decoding long sequences.

To address this issue, in “SoundStorm: Efficient Parallel Audio Generation”, we propose a new method for efficient and high-quality audio generation. SoundStorm addresses the problem of generating long audio token sequences by relying on two novel elements: 1) an architecture adapted to the specific nature of audio tokens as produced by the SoundStream neural codec, and 2) a decoding scheme inspired by MaskGIT, a recently proposed method for image generation, which is tailored to operate on audio tokens. Compared to the autoregressive decoding approach of AudioLM, SoundStorm is able to generate tokens in parallel, thus decreasing the inference time by 100x for long sequences, and produces audio of the same quality and with higher consistency in voice and acoustic conditions. Moreover, we show that SoundStorm, coupled with the text-to-semantic modeling stage of SPEAR-TTS, can synthesize high-quality, natural dialogues, allowing one to control the spoken content (via transcripts), speaker voices (via short voice prompts) and speaker turns (via transcript annotations), as demonstrated by the examples below:

| Input: Text (transcript used to drive the audio generation in bold) | Something really funny happened to me this morning. | Oh wow, what? | Well, uh I woke up as usual. | Uhhuh | Went downstairs to have uh breakfast. | Yeah | Started eating. Then uh 10 minutes later I realized it was the middle of the night. | Oh no way, that's so funny! | I didn't sleep well last night. | Oh, no. What happened? | I don't know. I I just couldn't seem to uh to fall asleep somehow, I kept tossing and turning all night. | That’s too bad. Maybe you should uh try going to bed earlier tonight or uh maybe you can try reading a book. | Yeah, thanks for the suggestions, I hope you're right. | No problem. I I hope you get a good night’s sleep |

| Input: Audio prompt |

| Output: Audio prompt + generated audio |

SoundStorm design

In our previous work on AudioLM, we showed that audio generation can be decomposed into two steps: 1) semantic modeling, which generates semantic tokens from either previous semantic tokens or a conditioning signal (e.g., a transcript as in SPEAR-TTS, or a text prompt as in MusicLM), and 2) acoustic modeling, which generates acoustic tokens from semantic tokens. With SoundStorm we specifically address this second, acoustic modeling step, replacing slower autoregressive decoding with faster parallel decoding.

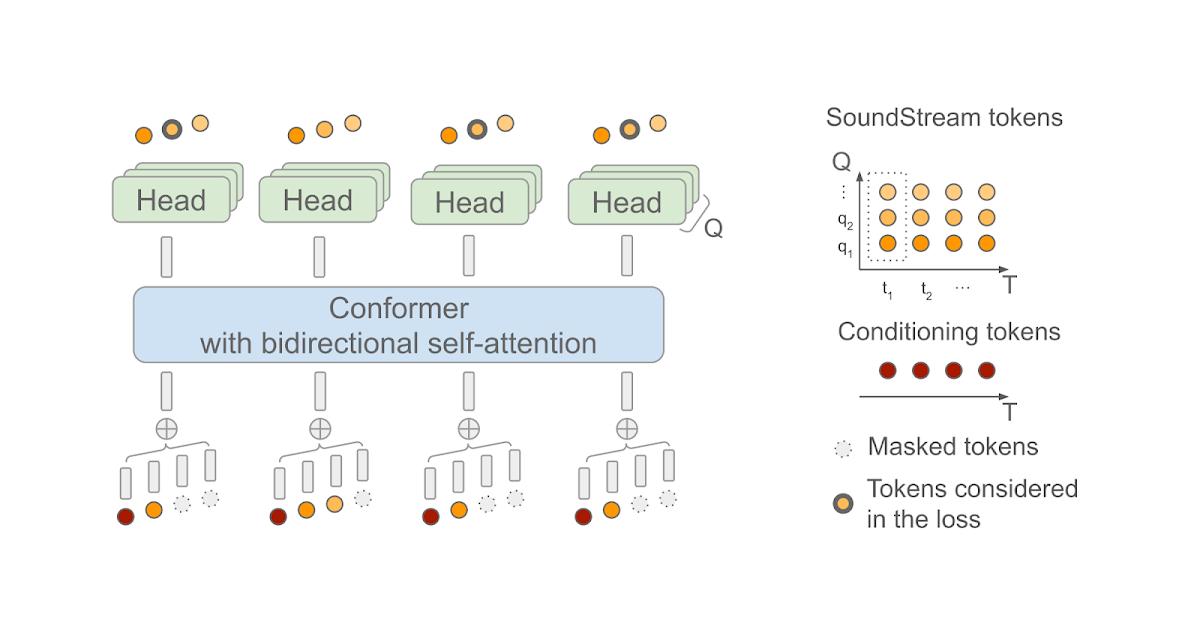

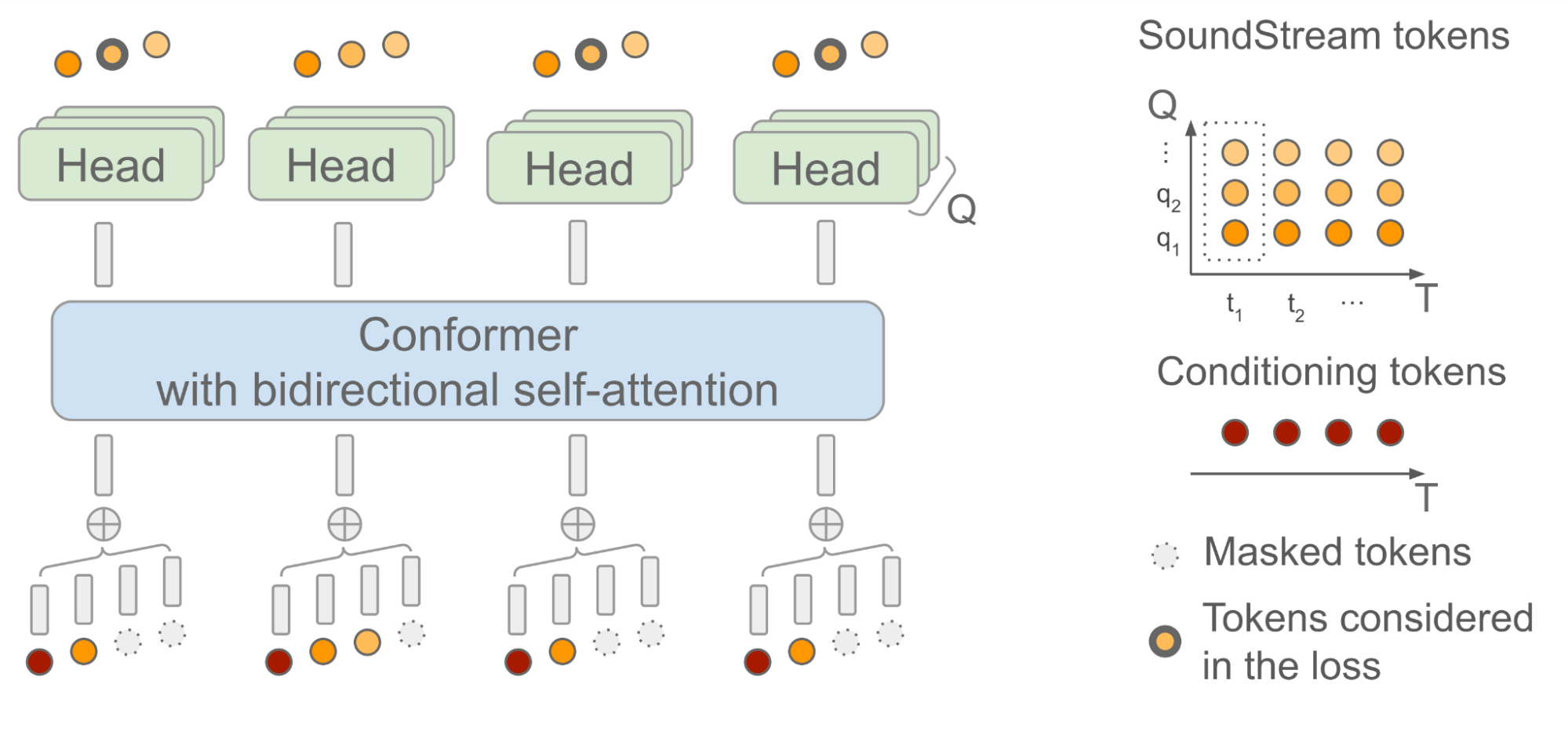

SoundStorm relies on a bidirectional attention-based Conformer, a model architecture that combines a Transformer with convolutions to capture both local and global structure of a sequence of tokens. Specifically, the model is trained to predict audio tokens produced by SoundStream given a sequence of semantic tokens generated by AudioLM as input. When doing this, it is important to take into account the fact that, at each time step t, SoundStream uses up to Q tokens to represent the audio using a method known as residual vector quantization (RVQ), as illustrated below on the right. The key intuition is that the quality of the reconstructed audio progressively increases as the number of generated tokens at each step goes from 1 to Q.
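To make the RVQ intuition concrete, here is a minimal sketch in plain Python. It is not SoundStream's actual quantizer: the two-dimensional toy frame, the hand-built codebooks from `make_codebooks`, and all function names are assumptions chosen for illustration. Each level quantizes whatever residual the previous levels left behind, so decoding with more levels can only bring the approximation closer to the original frame.

```python
import math

def nearest(codebook, vec):
    """Index of the codebook entry closest to vec (squared L2 distance)."""
    return min(range(len(codebook)),
               key=lambda i: sum((c - v) ** 2 for c, v in zip(codebook[i], vec)))

def rvq_encode(vec, codebooks):
    """One token per level; each level encodes the residual left by the previous ones."""
    residual = list(vec)
    tokens = []
    for codebook in codebooks:
        idx = nearest(codebook, residual)
        tokens.append(idx)
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return tokens

def rvq_decode(tokens, codebooks):
    """Sum the selected entries of the first len(tokens) levels."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(tokens, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out

def make_codebooks(num_levels):
    """Toy codebooks (hypothetical): level q holds the corners (+-s, +-s), s = 2**-q, plus zero."""
    books = []
    for q in range(1, num_levels + 1):
        s = 2.0 ** -q
        books.append([(0.0, 0.0), (s, s), (s, -s), (-s, s), (-s, -s)])
    return books

Q = 4
codebooks = make_codebooks(Q)
frame = (0.37, -0.81)                      # stand-in for one frame's embedding
tokens = rvq_encode(frame, codebooks)      # Q tokens for this time step

# Reconstruction error when decoding with only the first q levels.
errors = [math.dist(frame, rvq_decode(tokens[:q], codebooks)) for q in range(1, Q + 1)]
for q, err in enumerate(errors, start=1):
    print(f"levels used: {q}  error: {err:.4f}")
```

Because every toy codebook contains the zero vector, picking the nearest entry never makes the residual larger, which is why the printed error is non-increasing in the number of levels used.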

At inference time, given the semantic tokens as input conditioning signal, SoundStorm starts with all audio tokens masked out, and fills in the masked tokens over multiple iterations, starting from the coarse tokens at RVQ level q = 1 and proceeding level-by-level with finer tokens until reaching level q = Q.
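The level-by-level filling procedure can be sketched as a small loop. This is an illustration under stated assumptions, not the released implementation: `toy_predict` is a hypothetical stand-in for the Conformer that returns a random confidence per position, and the cosine unmasking schedule and confidence-based selection follow the general MaskGIT recipe rather than details given here. The point of the sketch is the control flow: one parallel pass per iteration, with the number of passes depending on the number of levels and iterations rather than on the sequence length.

```python
import math
import random

MASK = -1  # sentinel for a token that has not been generated yet

def toy_predict(semantic, tokens, level, rng):
    """Hypothetical model stand-in: a (token, confidence) guess for every time step.
    A real model would condition on `tokens`; this toy version ignores it."""
    return [((s + level) % 1024, rng.random()) for s in semantic]

def decode(semantic, num_levels, iters_per_level, seed=0):
    rng = random.Random(seed)
    T = len(semantic)
    tokens = [[MASK] * T for _ in range(num_levels)]    # tokens[q][t], all masked
    forward_passes = 0
    for q in range(num_levels):                         # coarse-to-fine over RVQ levels
        for it in range(iters_per_level):
            masked = [t for t in range(T) if tokens[q][t] == MASK]
            if not masked:
                break
            guesses = toy_predict(semantic, tokens, q, rng)  # one pass covers all time steps
            forward_passes += 1
            # Assumed cosine schedule: how many positions stay masked after this iteration.
            keep_masked = int(T * math.cos(math.pi / 2 * (it + 1) / iters_per_level))
            if it == iters_per_level - 1:
                keep_masked = 0                         # last iteration fills everything
            n_commit = max(1, len(masked) - keep_masked)
            # Commit the most confident guesses; the rest stay masked for the next iteration.
            masked.sort(key=lambda t: guesses[t][1], reverse=True)
            for t in masked[:n_commit]:
                tokens[q][t] = guesses[t][0]
    return tokens, forward_passes

semantic = list(range(50))                              # 50 time steps of conditioning
tokens, passes = decode(semantic, num_levels=3, iters_per_level=4)
print("parallel passes:", passes)
print("token-by-token steps for the same grid:", len(semantic) * 3)
```

Doubling the length of `semantic` leaves `passes` unchanged in this sketch, whereas a token-by-token decoder would need twice as many steps.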

There are two crucial aspects of SoundStorm that enable fast generation: 1) tokens are predicted in parallel during a single iteration within an RVQ level, and 2) the model architecture is designed in such a way that the complexity is only mildly affected by the number of levels Q. To support this inference scheme, during training a carefully designed masking scheme is used to mimic the iterative process used at inference.
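One plausible way to build a training mask that mimics this inference process is sketched below. The details are assumptions, since the text above only says that the masking scheme is carefully designed: the uniformly sampled level, the cosine-shaped masking ratio, and the helper name `make_training_mask` are all hypothetical, and prompt handling is left out. The idea is that, for a sampled level q, coarser levels are visible (they would already exist at inference), level q is partially hidden, and finer levels are fully hidden.

```python
import math
import random

def make_training_mask(T, Q, rng):
    """Return (q, mask); mask[level][t] is True when that token is hidden from the model."""
    q = rng.randrange(Q)                              # level being trained on (0-based)
    ratio = math.cos(math.pi / 2 * rng.random())      # assumed masking ratio in (0, 1]
    n_masked = max(1, round(ratio * T))
    hidden_at_q = set(rng.sample(range(T), n_masked))
    mask = []
    for level in range(Q):
        if level < q:
            mask.append([False] * T)                  # coarser levels: already generated
        elif level == q:
            mask.append([t in hidden_at_q for t in range(T)])
        else:
            mask.append([True] * T)                   # finer levels: not generated yet
    return q, mask

rng = random.Random(0)
q, mask = make_training_mask(T=8, Q=4, rng=rng)
for level, row in enumerate(mask):
    marker = "<- trained level" if level == q else ""
    print(level, "".join("#" if hidden else "." for hidden in row), marker)
```

In such a setup the training loss would typically be computed only on the hidden positions of level q, matching what the model is asked to fill in during a single decoding iteration.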

SoundStorm model architecture. T denotes the number of time steps and Q the number of RVQ levels used by SoundStream. The semantic tokens used as conditioning are time-aligned with the SoundStream frames.

Measuring SoundStorm performance

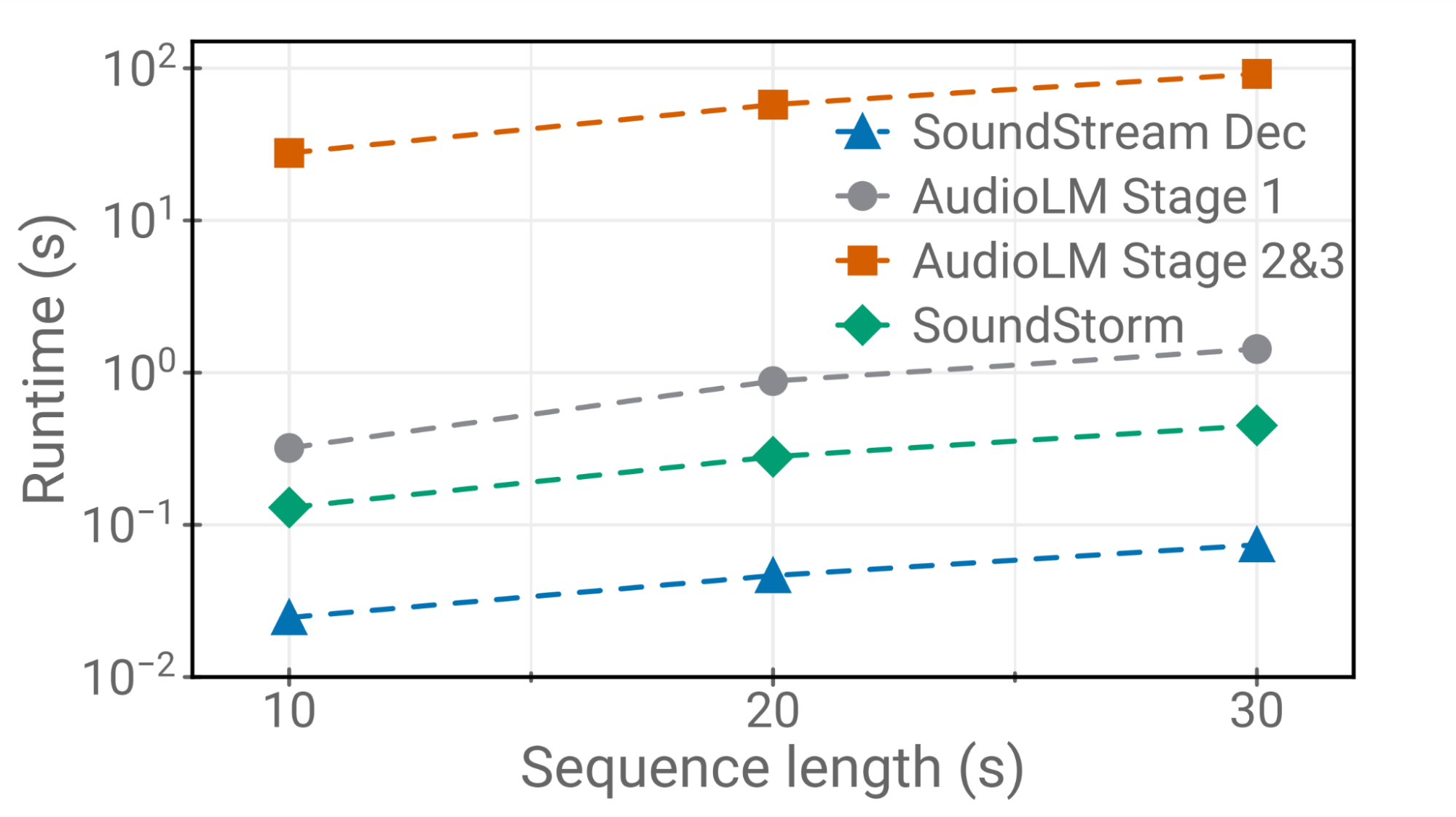

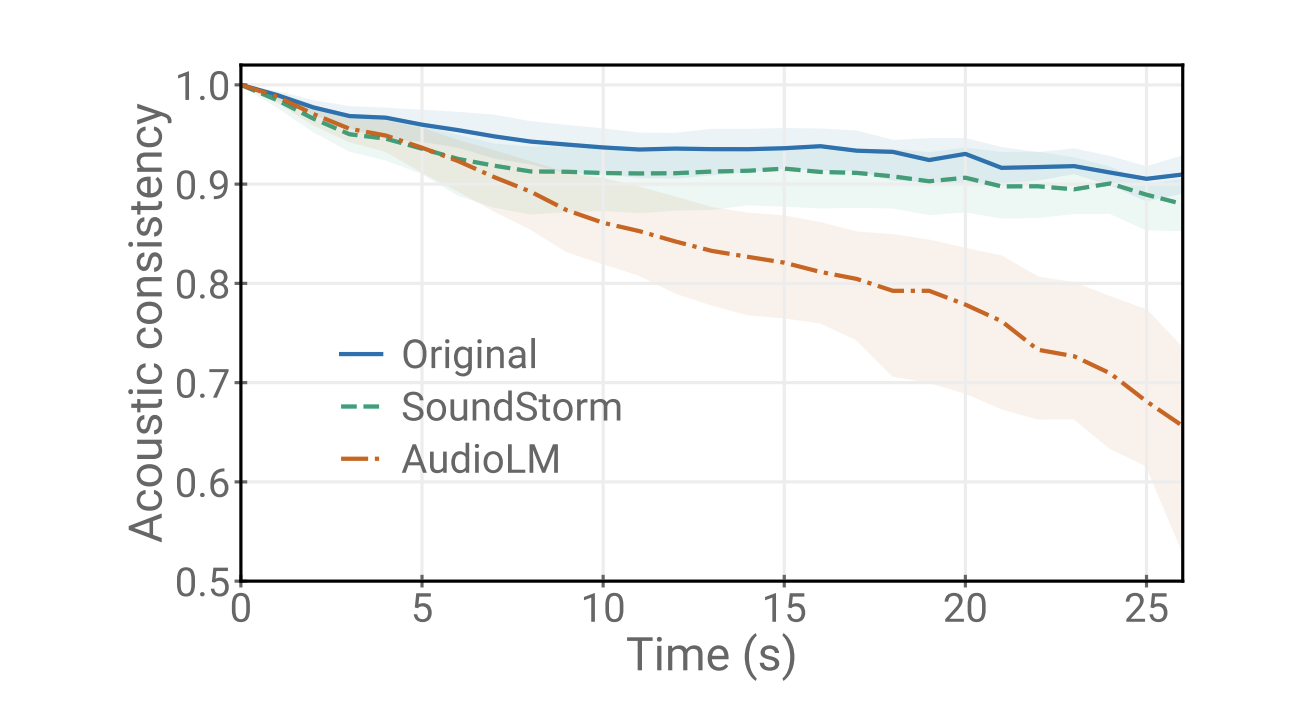

We demonstrate that SoundStorm matches the quality of AudioLM’s acoustic generator, replacing both AudioLM’s stage two (coarse acoustic model) and stage three (fine acoustic model). Furthermore, SoundStorm produces audio 100x faster than AudioLM’s hierarchical autoregressive acoustic generator (top half below) with matching quality and improved consistency in terms of speaker identity and acoustic conditions (bottom half below).

Runtimes of SoundStream decoding, SoundStorm and different stages of AudioLM on a TPU-v4.

Acoustic consistency between the prompt and the generated audio. The shaded area represents the inter-quartile range.

Safety and risk mitigation

We acknowledge that the audio samples produced by the model may be influenced by the unfair biases present in the training data, for instance in terms of represented accents and voice characteristics. In our generated samples, we demonstrate that we can reliably and responsibly control speaker characteristics via prompting, with the goal of avoiding unfair biases. A thorough analysis of any training data and its limitations is an area of future work in line with our responsible AI Principles.

In turn, the ability to mimic a voice can have numerous malicious applications, including bypassing biometric identification and using the model for the purpose of impersonation. Thus, it is crucial to put in place safeguards against potential misuse: to this end, we have verified that the audio generated by SoundStorm remains detectable by the same dedicated classifier described in our original AudioLM paper. Hence, as a component of a larger system, we believe that SoundStorm would be unlikely to introduce additional risks beyond those discussed in our earlier papers on AudioLM and SPEAR-TTS. At the same time, relaxing the memory and computational requirements of AudioLM would make research in the domain of audio generation more accessible to a wider community. In the future, we plan to explore other approaches for detecting synthesized speech, e.g., with the help of audio watermarking, so that any potential product usage of this technology strictly follows our responsible AI Principles.

Conclusion

We have introduced SoundStorm, a model that can efficiently synthesize high-quality audio from discrete conditioning tokens. When compared to the acoustic generator of AudioLM, SoundStorm is two orders of magnitude faster and achieves higher temporal consistency when generating long audio samples. By combining a text-to-semantic token model similar to SPEAR-TTS with SoundStorm, we can scale text-to-speech synthesis to longer contexts and generate natural dialogues with multiple speaker turns, controlling both the voices of the speakers and the generated content. SoundStorm is not limited to generating speech. For example, MusicLM uses SoundStorm to synthesize longer outputs efficiently (as seen at I/O).

Acknowledgments

The work described here was authored by Zalán Borsos, Matt Sharifi, Damien Vincent, Eugene Kharitonov, Neil Zeghidour and Marco Tagliasacchi. We are grateful for all discussions and feedback on this work that we received from our colleagues at Google.