Previously, we presented the 1,000 languages initiative and the Universal Speech Model with the goal of making speech and language technologies available to billions of users around the world. Part of this commitment involves developing high-quality speech synthesis technologies, which build upon projects such as VDTTS and AudioLM, for users who speak many different languages.


After developing a new model, one must evaluate whether the speech it generates is accurate and natural: the content must be relevant to the task, the pronunciation correct, the tone appropriate, and there should be no acoustic artifacts such as cracks or signal-correlated noise. Such evaluation is a major bottleneck in the development of multilingual speech systems.

The most popular method to evaluate the quality of speech synthesis models is human evaluation: a text-to-speech (TTS) engineer produces a few thousand utterances from the latest model, sends them for human evaluation, and receives results a few days later. This evaluation phase typically involves listening tests, during which dozens of annotators listen to the utterances one after the other to determine how natural they sound. While humans are still unbeaten at detecting whether a piece of text sounds natural, this process can be impractical, especially in the early stages of research projects, when engineers need rapid feedback to test and restrategize their approach. Human evaluation is expensive, time consuming, and may be limited by the availability of raters for the languages of interest.

Another barrier to progress is that different projects and institutions typically use various ratings, platforms and protocols, which makes apples-to-apples comparisons impossible. In this regard, speech synthesis technologies lag behind text generation, where researchers have long complemented human evaluation with automatic metrics such as BLEU or, more recently, BLEURT.

In “SQuId: Measuring Speech Naturalness in Many Languages”, to be presented at ICASSP 2023, we introduce SQuId (Speech Quality Identification), a 600M parameter regression model that describes to what extent a piece of speech sounds natural. SQuId is based on mSLAM (a pre-trained speech-text model developed by Google), fine-tuned on over a million quality ratings across 42 languages and tested in 65. We demonstrate how SQuId can be used to complement human ratings for evaluation of many languages. This is the largest published effort of this type to date.

Evaluating TTS with SQuId

The main hypothesis behind SQuId is that training a regression model on previously collected ratings can provide us with a low-cost method for assessing the quality of a TTS model. The model can therefore be a valuable addition to a TTS researcher’s evaluation toolbox, providing a near-instant, albeit less accurate, alternative to human evaluation.

SQuId takes an utterance and an optional locale tag as input (a locale is a localized variant of a language, such as “Brazilian Portuguese” or “British English”). It returns a score between 1 and 5 that indicates how natural the waveform sounds, with a higher value indicating a more natural waveform.

Internally, the model includes three components: (1) an encoder, (2) a pooling / regression layer, and (3) a fully connected layer. First, the encoder takes a spectrogram as input and embeds it into a smaller 2D matrix that contains 3,200 vectors of size 1,024, where each vector encodes a time step. The pooling / regression layer aggregates the vectors, appends the locale tag, and feeds the result into a fully connected layer that returns a score. Finally, we apply application-specific post-processing that rescales or normalizes the score so it is within the [1, 5] range, which is common for human naturalness ratings. We train the whole model end-to-end with a regression loss.

The encoder is by far the largest and most important piece of the model. We used mSLAM, a pre-existing 600M-parameter Conformer pre-trained on both speech (51 languages) and text (101 languages).
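To make the data flow after the encoder concrete, here is a minimal sketch in plain Python. It is not the SQuId implementation: mean pooling, the one-hot locale encoding, the single linear layer, and clipping as the post-processing step are all assumptions made for illustration, and the names `mean_pool`, `one_hot`, and `score_utterance` are hypothetical. The toy example uses 2-dimensional frames instead of the 3,200 x 1,024 encoder output.

```python
from typing import List, Sequence


def mean_pool(frames: Sequence[Sequence[float]]) -> List[float]:
    """Average per-time-step vectors into one utterance-level vector (assumed pooling)."""
    if not frames:
        raise ValueError("at least one frame is required")
    dim = len(frames[0])
    return [sum(frame[d] for frame in frames) / len(frames) for d in range(dim)]


def one_hot(index: int, size: int) -> List[float]:
    """Encode a locale index as a one-hot vector (assumed locale representation)."""
    return [1.0 if i == index else 0.0 for i in range(size)]


def score_utterance(
    frames: Sequence[Sequence[float]],
    locale_index: int,
    num_locales: int,
    weights: Sequence[float],
    bias: float,
) -> float:
    """Pool the frames, append the locale tag, apply a linear layer, clip to [1, 5]."""
    features = mean_pool(frames) + one_hot(locale_index, num_locales)
    if len(features) != len(weights):
        raise ValueError("weights must match pooled size plus number of locales")
    raw = sum(w * x for w, x in zip(weights, features)) + bias
    # Assumed post-processing: clip the raw score into the [1, 5] rating range.
    return min(5.0, max(1.0, raw))


# Toy usage: two time steps of size 2, three locales, locale index 1.
toy_frames = [[0.0, 2.0], [2.0, 4.0]]
toy_weights = [0.5, 0.5, 0.0, 1.0, 0.0]
toy_score = score_utterance(toy_frames, 1, 3, toy_weights, bias=0.5)
print(toy_score)
```

In a trained model the weights and bias would be learned jointly with the encoder under a regression loss, as described above; here they are fixed by hand only to show the shape of the computation.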

The SQuId model.

To train and evaluate the model, we created the SQuId corpus: a collection of 1.9 million rated utterances across 66 languages, collected for over 2,000 research and product TTS projects. The SQuId corpus covers a diverse array of systems, including concatenative and neural models, for a broad range of use cases, such as driving directions and virtual assistants. Manual inspection reveals that SQuId is exposed to a vast range of TTS errors, such as acoustic artifacts (e.g., cracks and pops), incorrect prosody (e.g., questions without rising intonations in English), text normalization errors (e.g., verbalizing “7/7” as “seven divided by seven” rather than “July seventh”), or pronunciation errors (e.g., verbalizing “tough” as “toe”).

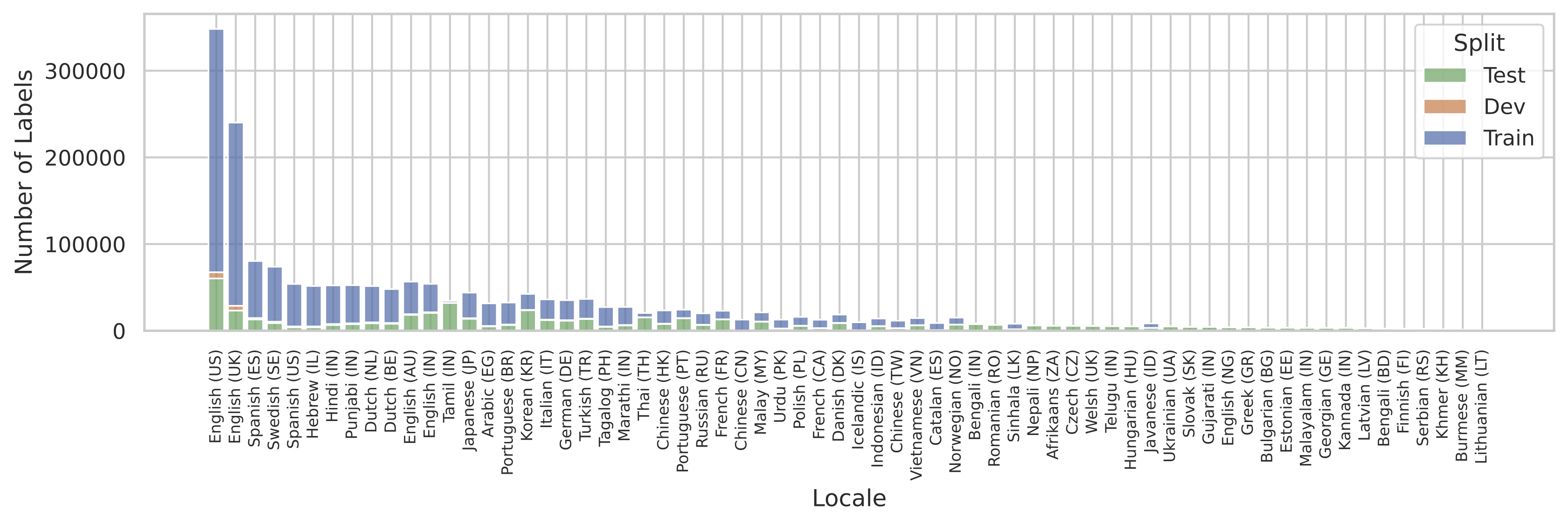

A common issue that arises when training multilingual systems is that the training data may not be uniformly available for all the languages of interest. SQuId was no exception. The following figure illustrates the size of the corpus for each locale. We see that the distribution is largely dominated by US English.

Locale distribution in the SQuId dataset.

How can we provide good performance for all languages when there are such variations? Inspired by previous work on machine translation, as well as past work from the speech literature, we decided to train one model for all languages, rather than using separate models for each language. The hypothesis is that if the model is large enough, then cross-locale transfer can occur: the model’s accuracy on each locale improves as a result of jointly training on the others. As our experiments show, cross-locale transfer proves to be a powerful driver of performance.

Experimental results

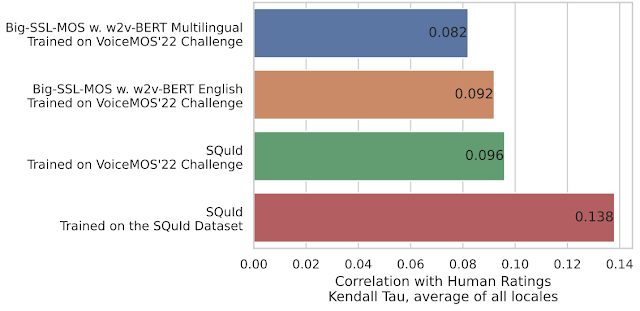

To understand SQuId’s overall performance, we compare it to a custom Big-SSL-MOS model (described in the paper), a competitive baseline inspired by MOS-SSL, a state-of-the-art TTS evaluation system. Big-SSL-MOS is based on w2v-BERT and was trained on the VoiceMOS’22 Challenge dataset, the most popular dataset at the time of evaluation. We experimented with several variants of the model, and found that SQuId is up to 50.0% more accurate.

SQuId versus state-of-the-art baselines. We measure agreement with human ratings using the Kendall Tau, where a higher value represents better accuracy.
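For readers unfamiliar with the metric, the following is a small sketch of how a Kendall Tau between human ratings and model scores can be computed. It implements the tau-b variant, which corrects for ties; which variant the paper uses is not stated here, so that choice is an assumption, and the function name `kendall_tau_b` is hypothetical. The ratings in the usage example are made up for illustration.

```python
import math
from itertools import combinations
from typing import Sequence


def kendall_tau_b(human: Sequence[float], predicted: Sequence[float]) -> float:
    """Kendall tau-b rank correlation between two equally long score lists."""
    if len(human) != len(predicted):
        raise ValueError("both score lists must have the same length")
    concordant = discordant = ties_human = ties_pred = 0
    # Compare every unordered pair of utterances.
    for (h1, p1), (h2, p2) in combinations(zip(human, predicted), 2):
        dh, dp = h1 - h2, p1 - p2
        if dh == 0 and dp == 0:
            continue  # tied in both lists: contributes to neither term
        if dh == 0:
            ties_human += 1
        elif dp == 0:
            ties_pred += 1
        elif dh * dp > 0:
            concordant += 1  # both lists order the pair the same way
        else:
            discordant += 1  # the lists disagree on the order
    denom = math.sqrt(
        (concordant + discordant + ties_human)
        * (concordant + discordant + ties_pred)
    )
    if denom == 0:
        return float("nan")  # undefined when one list is constant
    return (concordant - discordant) / denom


# Illustrative values only: three utterances, one pair ranked in the wrong order.
tau = kendall_tau_b([1, 2, 3], [1.0, 3.0, 2.0])
print(round(tau, 3))  # 0.333
```

A value of 1 means the model orders every pair of utterances the same way the human raters do, and a value of -1 means it reverses every pair.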

To understand the impact of cross-locale transfer, we run a series of ablation studies. We vary the number of locales introduced in the training set and measure the effect on SQuId’s accuracy. In English, which is already over-represented in the dataset, the effect of adding locales is negligible.

SQuId’s performance on US English, using 1, 8, and 42 locales during fine-tuning.

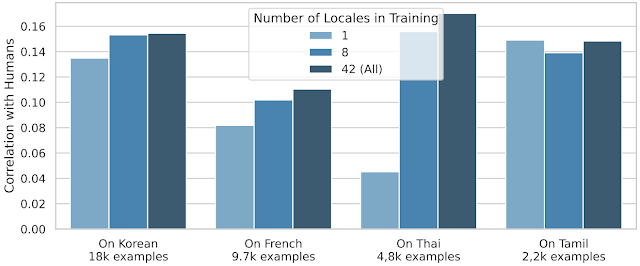

However, cross-locale transfer is much more effective for most other locales:

SQuId’s performance on four selected locales (Korean, French, Thai, and Tamil), using 1, 8, and 42 locales during fine-tuning. For each locale, we also provide the training set size.

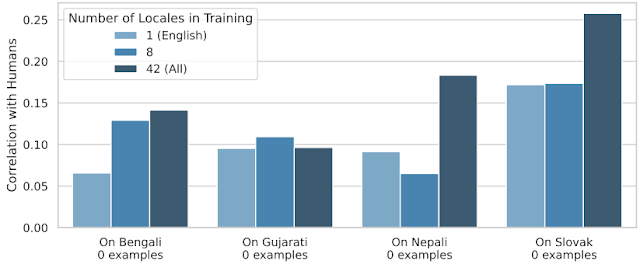

To push transfer to its limit, we held 24 locales out during training and used them exclusively for testing. Thus, we measure to what extent SQuId can deal with languages that it has never seen before. The plot below shows that although the effect is not uniform, cross-locale transfer works.

SQuId’s performance on four “zero-shot” locales, using 1, 8, and 42 locales during fine-tuning.
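The held-out protocol amounts to splitting the corpus by locale rather than by utterance, so that no example from a test locale is ever seen during training. The sketch below illustrates that idea under stated assumptions: the record layout (a dict with `locale` and `rating` keys), the locale tags, and the function name `split_by_locale` are hypothetical and are not taken from the SQuId corpus.

```python
from typing import Dict, Iterable, List, Tuple

Example = Dict[str, object]


def split_by_locale(
    examples: Iterable[Example], held_out_locales: Iterable[str]
) -> Tuple[List[Example], List[Example]]:
    """Send every example from a held-out locale to the zero-shot test set."""
    held_out = set(held_out_locales)
    train: List[Example] = []
    zero_shot: List[Example] = []
    for example in examples:
        if example["locale"] in held_out:
            zero_shot.append(example)
        else:
            train.append(example)
    return train, zero_shot


# Made-up records for illustration only.
corpus = [
    {"locale": "en-US", "rating": 4.5},
    {"locale": "pt-BR", "rating": 3.0},
    {"locale": "ta-IN", "rating": 2.5},
    {"locale": "en-US", "rating": 4.0},
]
train_set, zero_shot_set = split_by_locale(corpus, held_out_locales=["ta-IN"])
print(len(train_set), len(zero_shot_set))  # 3 1
```

Splitting at the locale level is what makes the test "zero-shot": a random split over utterances would leak examples from every locale into training.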

When does cross-locale transfer operate, and how? We present many more ablations in the paper, and show that while language similarity plays a role (e.g., training on Brazilian Portuguese helps European Portuguese), it is surprisingly far from being the only factor that matters.

Conclusion and future work

We introduce SQuId, a 600M parameter regression model that leverages the SQuId dataset and cross-locale learning to evaluate speech quality and describe how natural it sounds. We demonstrate that SQuId can complement human raters in the evaluation of many languages. Future work includes accuracy improvements, expanding the range of languages covered, and tackling new error types.

Acknowledgements

The author of this post is now part of Google DeepMind. Many thanks to all authors of the paper: Ankur Bapna, Joshua Camp, Diana Mackinnon, Ankur P. Parikh, and Jason Riesa.