Many people with questions about grammar turn to Google Search for guidance. While existing features, such as “Did you mean”, already handle simple typo corrections, more complex grammatical error correction (GEC) is beyond their scope. What makes the development of new Google Search features challenging is that they must have high precision and recall while outputting results quickly.

The conventional approach to GEC is to treat it as a translation problem and use autoregressive Transformer models to decode the response token-by-token, conditioning on the previously generated tokens. However, although Transformer models have proven to be effective at GEC, they aren’t particularly efficient because autoregressive decoding prevents the generation from being parallelized. Often, only a few modifications are needed to make the input text grammatically correct, so another possible solution is to treat GEC as a text editing problem. If we could run the autoregressive decoder only to generate the modifications, that would substantially decrease the latency of the GEC model.

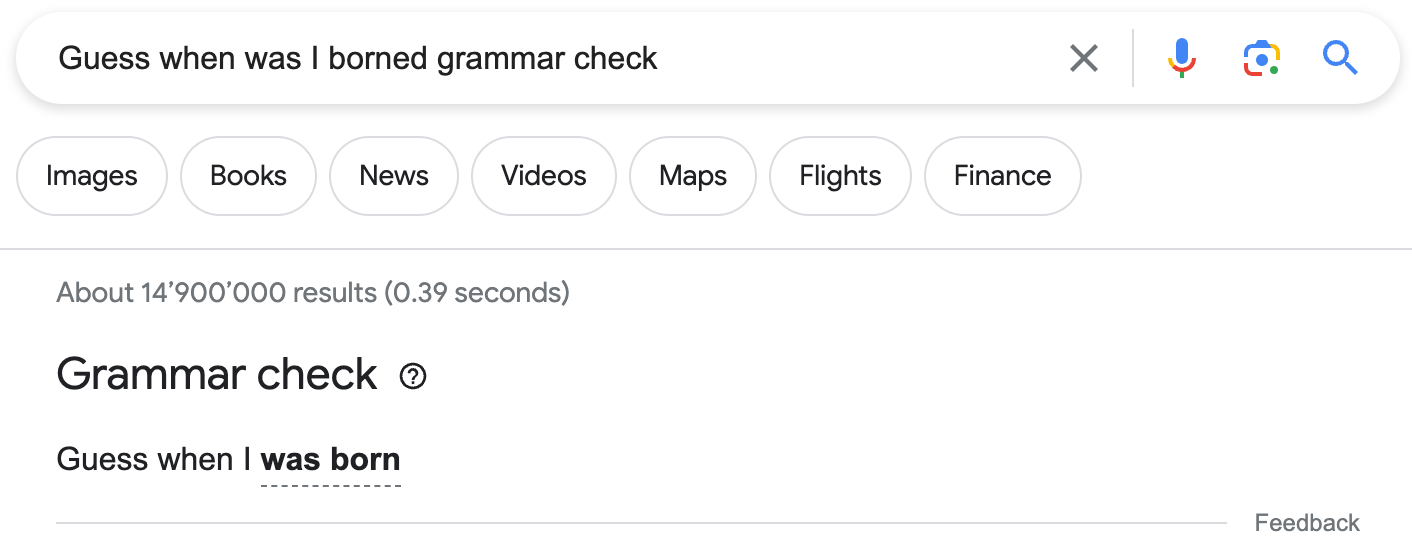

To this end, in “EdiT5: Semi-Autoregressive Text-Editing with T5 Warm-Start”, published at Findings of EMNLP 2022, we describe a novel text-editing model that is based on the T5 Transformer encoder-decoder architecture. EdiT5 powers the new Google Search grammar check feature that allows you to check if a phrase or sentence is grammatically correct and provides corrections when needed. Grammar check shows up when the phrase “grammar check” is included in a search query, and if the underlying model is confident about the correction. Additionally, it shows up for some queries that don’t contain the “grammar check” phrase when Search understands that this is the likely intent.

Model architecture

For low-latency applications at Google, Transformer models are typically run on TPUs. Due to their fast matrix multiplication units (MMUs), these devices are optimized for performing large matrix multiplications quickly, for example running a Transformer encoder on hundreds of tokens in only a few milliseconds. In contrast, Transformer decoding makes poor use of a TPU’s capabilities, because it forces the TPU to process only one token at a time. This makes autoregressive decoding the most time-consuming part of a translation-based GEC model.

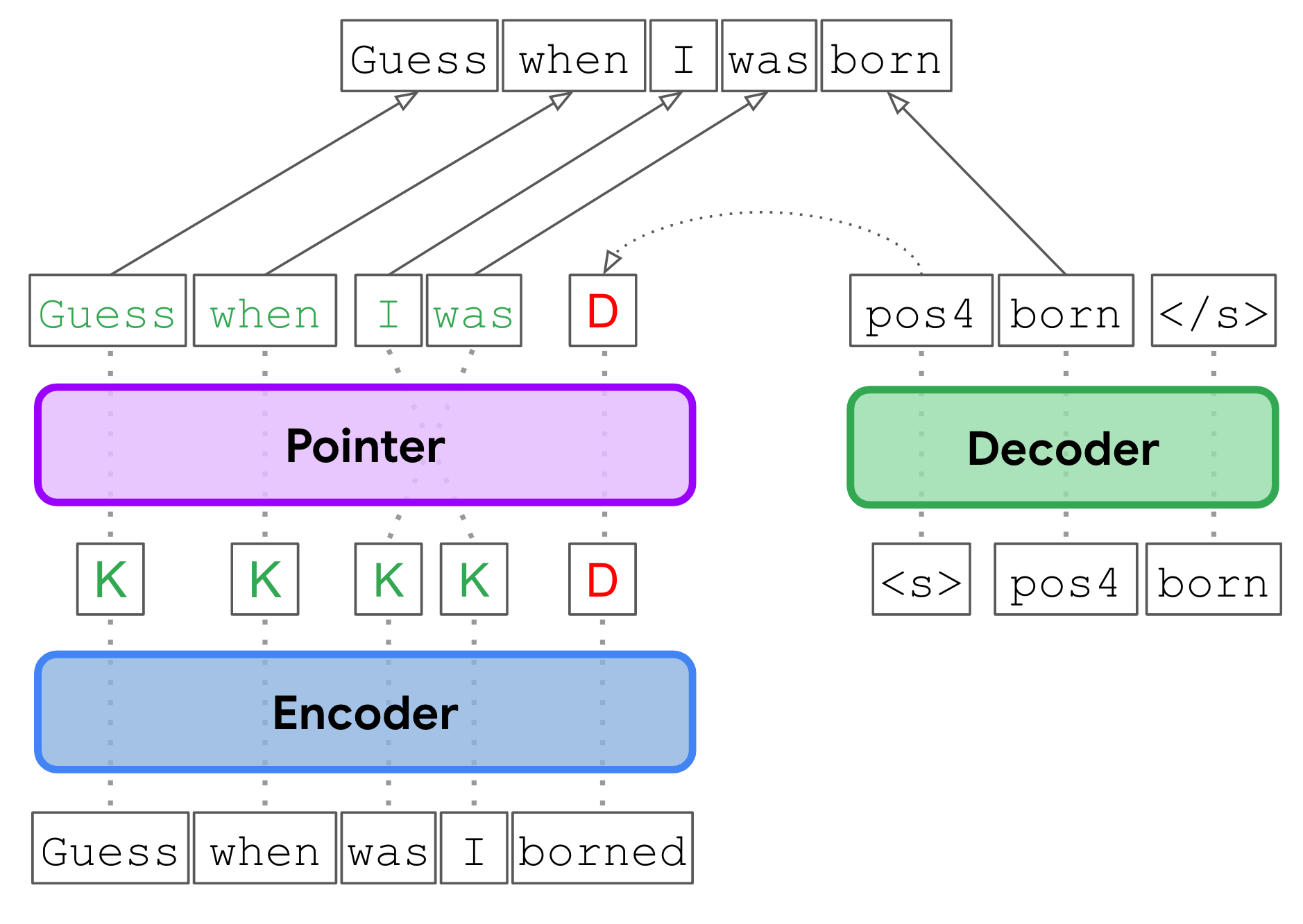

In the EdiT5 approach, we reduce the number of decoding steps by treating GEC as a text editing problem. The EdiT5 text-editing model is based on the T5 Transformer encoder-decoder architecture with a few crucial modifications. Given an input with grammatical errors, the EdiT5 model uses an encoder to determine which input tokens to keep or delete. The kept input tokens form a draft output, which is optionally reordered using a non-autoregressive pointer network. Finally, a decoder outputs the tokens that are missing from the draft, and uses a pointing mechanism to indicate where each new token should be placed to generate a grammatically correct output. The decoder is only run to produce tokens that were missing in the draft, and as a result, runs for far fewer steps than would be needed in the translation approach to GEC.

To further decrease the decoder latency, we reduce the decoder down to a single layer, and we compensate by increasing the size of the encoder. Overall, this decreases latency significantly because the extra work in the encoder is efficiently parallelized.
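
The keep, reorder, and insert steps described above can be illustrated with a small sketch. This is not the EdiT5 implementation: the `Insertion` class, the `apply_edits` function, and the hand-written tags below are hypothetical, and the real model operates on subword tokens with learned predictions rather than whole words.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Insertion:
    """Hypothetical container for one decoder output."""
    token: str  # new token produced by the decoder
    after: int  # draft index the token is placed after; -1 means "at the start"


def apply_edits(source: List[str], keep: List[bool], order: List[int],
                insertions: List[Insertion]) -> List[str]:
    """Apply keep/delete tags, a reordering, and pointed insertions."""
    # Step 1 (encoder tagging): drop every token tagged as delete.
    kept = [tok for tok, k in zip(source, keep) if k]
    # Step 2 (pointer network): reorder the kept tokens into a draft.
    draft = [kept[i] for i in order]
    # Step 3 (decoder): place each new token after the draft position it points to.
    pending: Dict[int, List[str]] = {}
    for ins in insertions:
        pending.setdefault(ins.after, []).append(ins.token)
    output = list(pending.get(-1, []))
    for pos, tok in enumerate(draft):
        output.append(tok)
        output.extend(pending.get(pos, []))
    return output


# Hand-written tags for illustration only; a real model would predict them.
source = ["Guess", "when", "was", "I", "borned"]
keep = [True, True, True, True, False]     # delete "borned"
order = [0, 1, 3, 2]                       # swap "was" and "I"
insertions = [Insertion("born", after=3)]  # insert "born" after "was"

corrected = apply_edits(source, keep, order, insertions)
print(" ".join(corrected))  # Guess when I was born
```

In this toy example the decoder only has to produce one new word, whereas a translation-style decoder would have to produce all five output words one after another.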

Given an input with grammatical errors (“Guess when was I borned”), the EdiT5 model uses an encoder to determine which input tokens to keep (K) or delete (D), a pointer network (pointer) to reorder kept tokens, and a decoder to insert any new tokens that are needed to generate a grammatically correct output.

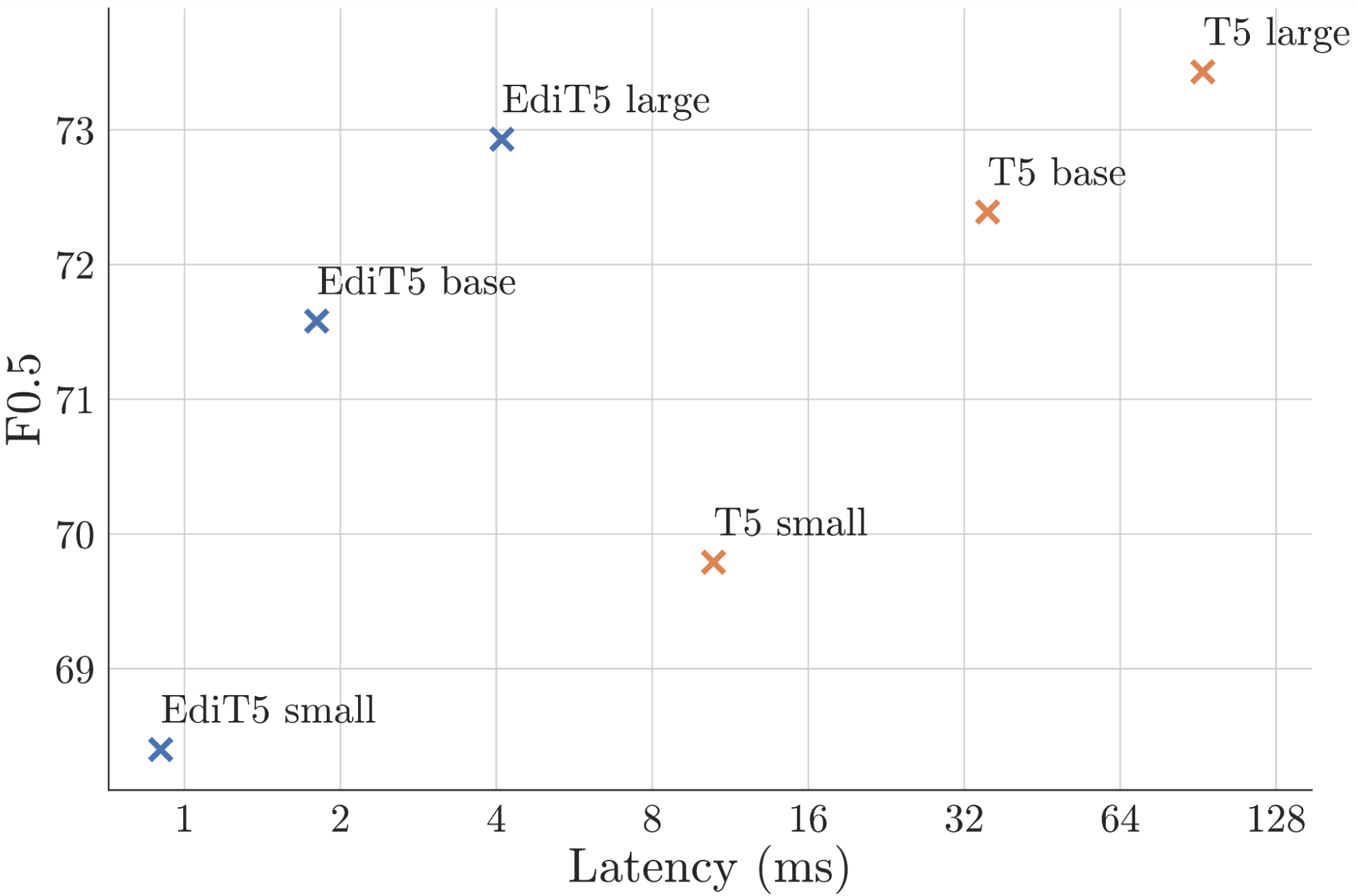

We applied the EdiT5 model to the public BEA grammatical error correction benchmark, comparing different model sizes. The experimental results show that an EdiT5 large model with 391M parameters yields a higher F0.5 score, which measures the accuracy of the corrections, while delivering a 9x speedup compared to a T5 base model with 248M parameters. The mean latency of the EdiT5 model was merely 4.1 milliseconds.
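
For readers unfamiliar with the metric, F0.5 is the F-beta score with beta set to 0.5, which weights precision more heavily than recall. The sketch below shows the standard formula; the function name `f_beta` and the example precision and recall values are illustrative assumptions, not results from the benchmark.

```python
def f_beta(precision: float, recall: float, beta: float = 0.5) -> float:
    """Standard F-beta score; beta < 1 favors precision over recall."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Made-up values: a system that is precise but misses many errors.
f_half = f_beta(precision=0.8, recall=0.4)       # about 0.667
f_one = f_beta(precision=0.8, recall=0.4, beta=1.0)  # about 0.533
print(round(f_half, 3), round(f_one, 3))
```

With these values, F0.5 is higher than F1 because the metric rewards avoiding wrong corrections more than it penalizes missed ones.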

Performance of the T5 and EdiT5 models of various sizes on the public BEA GEC benchmark plotted against mean latency. Compared to T5, EdiT5 offers a better latency-F0.5 trade-off. Note that the x axis is logarithmic.

Improved training data with large language models

Our earlier research and the results above show that model size plays a crucial role in generating accurate grammatical corrections. To combine the advantages of large language models (LLMs) and the low latency of EdiT5, we leverage a technique called hard distillation. First, we train a teacher LLM using datasets similar to those used for the Gboard grammar model. The teacher model is then used to generate training data for the student EdiT5 model.

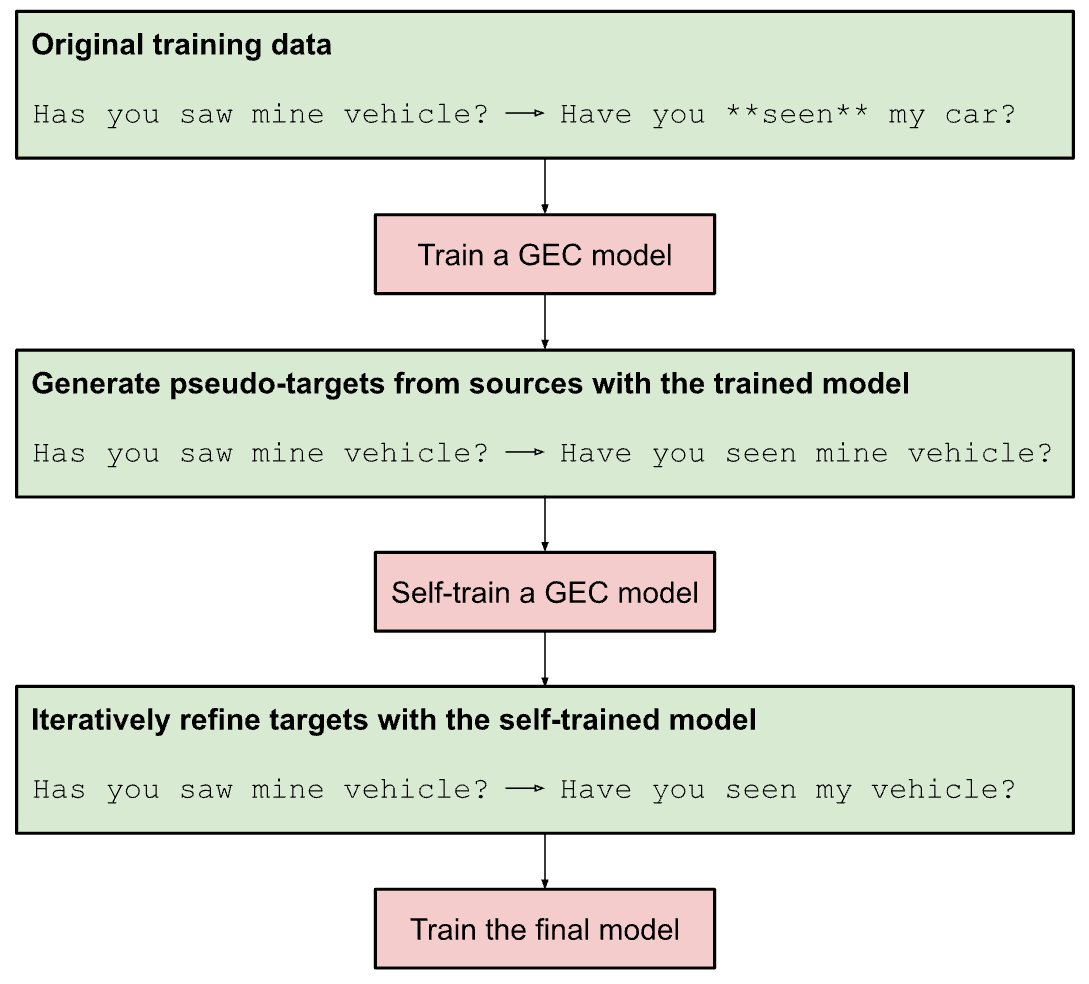

Training sets for grammar models consist of ungrammatical source / grammatical target sentence pairs. Some of the training sets have noisy targets that contain grammatical errors, unnecessary paraphrasing, or unwanted artifacts. Therefore, we generate new pseudo-targets with the teacher model to get cleaner and more consistent training data. Then, we re-train the teacher model with the pseudo-targets using a technique called self-training. Finally, we found that when the source sentence contains many errors, the teacher sometimes corrects only some of them. Thus, we can further improve the quality of the pseudo-targets by feeding them to the teacher LLM a second time, a technique called iterative refinement.
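
The pseudo-target generation with iterative refinement can be sketched as a simple loop. Everything here is a hypothetical stand-in: `make_pseudo_targets` and `toy_teacher` are illustrative names, and the toy teacher is a string-replacement stub that fixes only one error per call to mimic a teacher that corrects only some errors in a single pass. Retraining the teacher (self-training) is not modeled.

```python
from typing import Callable, List, Tuple

Corrector = Callable[[str], str]


def make_pseudo_targets(sources: List[str], teacher: Corrector,
                        passes: int = 2) -> List[Tuple[str, str]]:
    """Build (source, pseudo-target) pairs by running the teacher `passes` times."""
    pairs = []
    for src in sources:
        target = src
        for _ in range(passes):
            # Each extra pass feeds the previous output back to the teacher.
            target = teacher(target)
        pairs.append((src, target))
    return pairs


def toy_teacher(sentence: str) -> str:
    """Stub teacher (assumption): fixes at most one known error per call."""
    fixes = [("borned", "born"), ("was I", "I was")]
    for wrong, right in fixes:
        if wrong in sentence:
            return sentence.replace(wrong, right, 1)
    return sentence


sources = ["Guess when was I borned"]
one_pass = make_pseudo_targets(sources, toy_teacher, passes=1)
two_pass = make_pseudo_targets(sources, toy_teacher, passes=2)
print(one_pass[0][1])  # Guess when was I born
print(two_pass[0][1])  # Guess when I was born
```

The resulting pairs would then serve as training data for the student model, with the second pass catching the error that the first pass left behind.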

Steps for training a large teacher model for grammatical error correction (GEC). Self-training and iterative refinement remove unnecessary paraphrasing, artifacts, and grammatical errors appearing in the original targets.

Putting it all together

Using the improved GEC data, we train two EdiT5-based models: a grammatical error correction model, and a grammaticality classifier. When the grammar check feature is used, we run the query first through the correction model, and then we check if the output is indeed correct with the classifier model. Only then do we surface the correction to the user.

The reason for having a separate classifier model is to make it easier to trade off between precision and recall. Additionally, for ambiguous or nonsensical queries where the best correction is unclear, the classifier reduces the risk of serving erroneous or confusing corrections.
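
A minimal sketch of this two-stage flow is shown below. It assumes the classifier returns a score between 0 and 1 that is compared against a threshold; the post does not describe the actual decision rule, so `grammar_check`, the `threshold` parameter, and the two stub models are all hypothetical.

```python
from typing import Callable, Optional


def grammar_check(query: str,
                  correct: Callable[[str], str],
                  score: Callable[[str], float],
                  threshold: float = 0.9) -> Optional[str]:
    """Return a correction only if the classifier accepts it, else None."""
    candidate = correct(query)            # stage 1: correction model
    if score(candidate) >= threshold:     # stage 2: grammaticality classifier
        return candidate
    return None                           # nothing is surfaced to the user


# Stub models for illustration only.
def toy_correct(query: str) -> str:
    return {"Guess when was I borned": "Guess when I was born"}.get(query, query)


def toy_score(sentence: str) -> float:
    return 0.97 if sentence == "Guess when I was born" else 0.30


print(grammar_check("Guess when was I borned", toy_correct, toy_score))
print(grammar_check("colorless green ideas", toy_correct, toy_score))
```

Under this assumption, raising the threshold surfaces fewer but more reliable corrections (higher precision), while lowering it surfaces more corrections (higher recall), without retraining the correction model.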

Conclusion

We have developed an efficient grammar correction model based on the state-of-the-art EdiT5 model architecture. This model allows users to check the grammaticality of their queries in Google Search by including the “grammar check” phrase in the query.

Acknowledgements

We gratefully acknowledge the key contributions of the other team members, including Akash R, Aliaksei Severyn, Harsh Shah, Jonathan Mallinson, Mithun Kumar S R, Samer Hassan, Sebastian Krause, and Shikhar Thakur. We’d also like to thank Felix Stahlberg, Shankar Kumar, and Simon Tong for helpful discussions and pointers.