In this tutorial, we show how to use Ibis to build a portable, in-database feature engineering pipeline that looks and feels like Pandas but executes entirely inside the database. We show how to connect to DuckDB, register data safely inside the backend, and define complex transformations using window functions and aggregations without ever pulling raw data into local memory. By keeping all transformations lazy and backend-agnostic, we demonstrate how to write analytics code once in Python and rely on Ibis to translate it into efficient SQL. Check out the FULL CODES here.

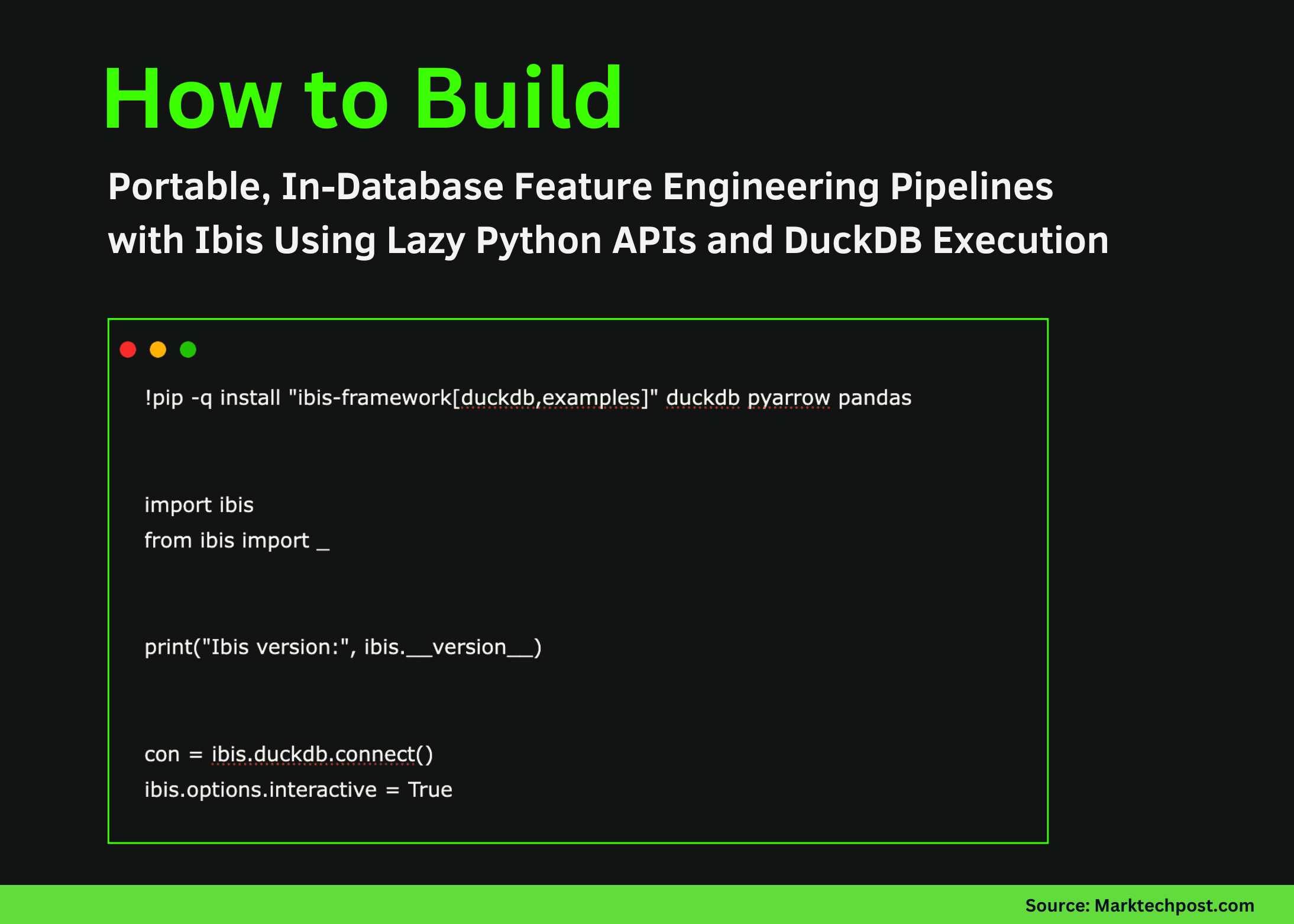

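!pip -q install "ibis-framework[duckdb,examples]" duckdb pyarrow pandas

import ibis
from ibis import _

print("Ibis version:", ibis.__version__)

# In-process DuckDB database; every table and query below lives here.
con = ibis.duckdb.connect()

# Execute and preview an expression automatically whenever it is displayed.
ibis.options.interactive = True

We install the required libraries and initialize the Ibis environment. We open an in-process DuckDB connection and turn on interactive mode. In this mode, Ibis executes an expression and prints a preview whenever it is displayed. Until that point, every expression stays lazy and is evaluated by the backend rather than in Python.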

try:
    # Load the example dataset directly into the DuckDB backend.
    base_expr = ibis.examples.penguins.fetch(backend=con)
except TypeError:
    # If this Ibis version does not accept `backend=`, use the default loader.
    base_expr = ibis.examples.penguins.fetch()

if "penguins" not in con.list_tables():
    try:
        con.create_table("penguins", base_expr, overwrite=True)
    except Exception:
        # Last resort: materialize the (small) dataset locally and upload it.
        con.create_table("penguins", base_expr.execute(), overwrite=True)

t = con.table("penguins")
print(t.schema())

We load the Penguins dataset and explicitly register it in the DuckDB catalog so that it is available for SQL execution. Printing the schema confirms that the table exists and that the data now lives inside the database rather than in local memory. Only the last-resort fallback routes this small dataset through pandas.
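DuckDB, like most SQL engines, keeps a catalog of named tables that queries can reference. That catalog is what `con.list_tables()` and `t.schema()` inspect above. The sketch below is a rough analog that runs with only the Python standard library. It uses `sqlite3` instead of DuckDB, loads two made-up penguin-like rows into an in-memory table, and then checks the catalog (`sqlite_master`) and the column schema (`PRAGMA table_info`). The `list_tables` function here is a hypothetical helper for this example, not the Ibis method.

```python
import sqlite3


def list_tables(con):
    """Hypothetical helper: names of tables in the connection's catalog."""
    return {row[0] for row in con.execute("SELECT name FROM sqlite_master WHERE type = 'table'")}


con = sqlite3.connect(":memory:")
rows = [("Adelie", "Torgersen", 3750, 2007), ("Gentoo", "Biscoe", 5000, 2008)]

# Register the data only if the catalog does not already contain the table.
if "penguins" not in list_tables(con):
    con.execute(
        "CREATE TABLE penguins (species TEXT, island TEXT, body_mass_g INTEGER, year INTEGER)"
    )
    con.executemany("INSERT INTO penguins VALUES (?, ?, ?, ?)", rows)

# PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) for each column.
schema = [(col[1], col[2]) for col in con.execute("PRAGMA table_info(penguins)")]
print(schema)
```

After this step, the rows live in the database connection. Later queries refer to them by table name instead of by a Python object.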

def penguin_feature_pipeline(penguins):
    # Row-level derived features.
    base = penguins.mutate(
        bill_ratio=_.bill_length_mm / _.bill_depth_mm,
        is_male=(_.sex == "male").ifelse(1, 0),
    )

    # Drop rows with missing values in any column the features depend on.
    cleaned = base.filter(
        _.bill_length_mm.notnull()
        & _.bill_depth_mm.notnull()
        & _.body_mass_g.notnull()
        & _.flipper_length_mm.notnull()
        & _.species.notnull()
        & _.island.notnull()
        & _.year.notnull()
    )

    # Whole-partition window: one value per species, attached to every row.
    w_species = ibis.window(group_by=[cleaned.species])

    # Row-based frame: the current row plus the two preceding rows, ordered by
    # year within each island. This counts rows, not calendar years, and rows
    # that share a year are ordered arbitrarily.
    w_island_year = ibis.window(
        group_by=[cleaned.island],
        order_by=[cleaned.year],
        preceding=2,
        following=0,
    )

    feat = cleaned.mutate(
        species_avg_mass=cleaned.body_mass_g.mean().over(w_species),
        species_std_mass=cleaned.body_mass_g.std().over(w_species),
        # Standardize body mass within each species.
        mass_z=(
            cleaned.body_mass_g
            - cleaned.body_mass_g.mean().over(w_species)
        ) / cleaned.body_mass_g.std().over(w_species),
        island_mass_rank=cleaned.body_mass_g.rank().over(
            ibis.window(group_by=[cleaned.island])
        ),
        rolling_3yr_island_avg_mass=cleaned.body_mass_g.mean().over(
            w_island_year
        ),
    )

    # Collapse to one row per (species, island, year).
    return feat.group_by(["species", "island", "year"]).agg(
        n=feat.count(),
        avg_mass=feat.body_mass_g.mean(),
        avg_flipper=feat.flipper_length_mm.mean(),
        avg_bill_ratio=feat.bill_ratio.mean(),
        avg_mass_z=feat.mass_z.mean(),
        avg_rolling_3yr_mass=feat.rolling_3yr_island_avg_mass.mean(),
        pct_male=feat.is_male.mean(),
    ).order_by(["species", "island", "year"])

We define a reusable feature engineering pipeline using pure Ibis expressions. It computes derived features, cleans the data, and uses window functions and grouped aggregations to build richer, database-native features. The whole pipeline stays lazy: calling the function only builds an expression and runs no query.
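The sketch below shows what the window expressions compute. It is a hand-written SQL analog run with Python's built-in `sqlite3` rather than DuckDB, under these assumptions:

- The six rows are made up.
- SQLite has no STDDEV aggregate, so the sample standard deviation (n - 1 denominator, like Ibis's default for `std()`) is computed from the per-species mean in a second step.
- `py_sqrt` is a helper registered only for this example.

Each `OVER (PARTITION BY species)` plays the role of `.over(w_species)`: the statistic is computed per species but attached to every row.

```python
import math
import sqlite3
import statistics

con = sqlite3.connect(":memory:")
# SQLite may lack a built-in sqrt(), so expose Python's (NULL-safe) under a distinct name.
con.create_function("py_sqrt", 1, lambda v: None if v is None else math.sqrt(v))

con.execute(
    "CREATE TABLE penguins (species TEXT, sex TEXT, bill_length_mm REAL,"
    " bill_depth_mm REAL, body_mass_g REAL)"
)
con.executemany(
    "INSERT INTO penguins VALUES (?, ?, ?, ?, ?)",
    [
        ("Adelie", "male", 39.1, 18.7, 3750.0),
        ("Adelie", "female", 39.5, 17.4, 3800.0),
        ("Adelie", "female", 40.3, 18.0, 3250.0),
        ("Adelie", None, None, None, None),  # removed by the null filter
        ("Gentoo", "male", 50.0, 16.3, 5700.0),
        ("Gentoo", "female", 46.1, 13.2, 4500.0),
    ],
)

QUERY = """
WITH cleaned AS (
    -- derived features + null filter (mirrors base/cleaned in the pipeline)
    SELECT species, body_mass_g,
           bill_length_mm / bill_depth_mm AS bill_ratio,
           CASE WHEN sex = 'male' THEN 1 ELSE 0 END AS is_male
    FROM penguins
    WHERE bill_length_mm IS NOT NULL AND bill_depth_mm IS NOT NULL
      AND body_mass_g IS NOT NULL AND species IS NOT NULL
),
with_mean AS (
    SELECT *, AVG(body_mass_g) OVER (PARTITION BY species) AS species_avg_mass
    FROM cleaned
),
with_std AS (
    -- sample standard deviation (n - 1 denominator) per species
    SELECT *, py_sqrt(
               SUM((body_mass_g - species_avg_mass) * (body_mass_g - species_avg_mass))
                   OVER (PARTITION BY species)
               / (COUNT(*) OVER (PARTITION BY species) - 1)
           ) AS species_std_mass
    FROM with_mean
)
SELECT *, (body_mass_g - species_avg_mass) / species_std_mass AS mass_z
FROM with_std
ORDER BY species, body_mass_g
"""

cur = con.execute(QUERY)
cols = [d[0] for d in cur.description]
features = [dict(zip(cols, row)) for row in cur.fetchall()]

for f in features:
    print(f["species"], f["body_mass_g"], round(f["mass_z"], 3))

# Cross-check the in-database std against statistics.stdev (also a sample std).
print(features[0]["species_std_mass"], statistics.stdev([3750.0, 3800.0, 3250.0]))
```

Replacing `OVER (PARTITION BY species)` with `GROUP BY species` would return one row per species instead of one row per penguin. That is the difference between the `mutate(... .over(w_species))` step and the final `group_by().agg()`.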

features = penguin_feature_pipeline(t)

# Inspect the SQL that Ibis generates for DuckDB.
print(con.compile(features))

try:
    df = features.to_pandas()
except Exception:
    df = features.execute()

display(df.head())

We invoke the feature pipeline and compile it into DuckDB SQL to verify that every transformation is pushed down to the database. We then run the pipeline. Only the final aggregated results are returned to Python for inspection.
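The compiled SQL is also the easiest place to check what `w_island_year` actually does. Its frame (two preceding rows, zero following) is row-based, so it should compile to something like `ROWS BETWEEN 2 PRECEDING AND CURRENT ROW`. That means the current row plus the two rows before it in year order. This is not the same as three calendar years when an island has several penguins per year.

The sketch below contrasts that frame with a `RANGE BETWEEN 2 PRECEDING AND CURRENT ROW` frame, which includes every row whose year lies within two years of the current one. It uses `sqlite3` as a stand-in for DuckDB, with made-up rows, and assumes SQLite 3.28 or newer for numeric `RANGE` offsets. Ibis's `ibis.window` also accepts a `range=` argument for value-based frames. Check the docs for your version.

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE penguins (id INTEGER, island TEXT, year INTEGER, body_mass_g REAL)")
con.executemany(
    "INSERT INTO penguins VALUES (?, ?, ?, ?)",
    [
        (1, "Biscoe", 2007, 4000.0),
        (2, "Biscoe", 2007, 4200.0),  # two penguins share 2007
        (3, "Biscoe", 2008, 4400.0),
        (4, "Biscoe", 2009, 4600.0),
        (5, "Biscoe", 2010, 4800.0),
    ],
)

result = con.execute(
    """
    SELECT id, year,
           -- current row + 2 previous rows (id breaks ties within a year)
           AVG(body_mass_g) OVER (
               PARTITION BY island ORDER BY year, id
               ROWS BETWEEN 2 PRECEDING AND CURRENT ROW
           ) AS rows_avg,
           -- every row whose year is within 2 of the current row's year
           AVG(body_mass_g) OVER (
               PARTITION BY island ORDER BY year
               RANGE BETWEEN 2 PRECEDING AND CURRENT ROW
           ) AS range_avg
    FROM penguins
    ORDER BY id
    """
).fetchall()

for row_id, year, rows_avg, range_avg in result:
    print(row_id, year, rows_avg, range_avg)
```

The two frames disagree for id 1 (4000.0 vs 4100.0, because the RANGE frame includes the other 2007 penguin) and for id 4 (4400.0 vs 4300.0, because the RANGE frame reaches back to both 2007 rows).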

con.create_table("penguin_features", features, overwrite=True)
feat_tbl = con.table("penguin_features")

try:
    preview = feat_tbl.limit(10).to_pandas()
except Exception:
    preview = feat_tbl.limit(10).execute()

display(preview)

# Export the materialized table straight from DuckDB to Parquet
# (/content is the working directory in Google Colab).
out_path = "/content/penguin_features.parquet"
con.raw_sql(f"COPY penguin_features TO '{out_path}' (FORMAT PARQUET);")
print(out_path)

We materialize the engineered features as a table directly inside DuckDB and query it lazily for verification. We also export the results to a Parquet file. This shows how database-computed features can be handed off to downstream analytics or machine learning workflows.
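Materializing with `create_table` amounts to a `CREATE TABLE ... AS SELECT` inside the database. The `COPY ... TO` statement then writes that table straight to a file. The sketch below shows the same two steps with `sqlite3`, using made-up rows. Neither SQLite nor the Python standard library writes Parquet, so it exports CSV with the `csv` module instead. That is a substitution, not the tutorial's method. The output path is a temporary directory chosen for the example.

```python
import csv
import os
import sqlite3
import tempfile

con = sqlite3.connect(":memory:")
con.execute(
    "CREATE TABLE penguins (species TEXT, island TEXT, year INTEGER,"
    " body_mass_g REAL, is_male INTEGER)"
)
con.executemany(
    "INSERT INTO penguins VALUES (?, ?, ?, ?, ?)",
    [
        ("Adelie", "Dream", 2007, 3700.0, 1),
        ("Adelie", "Dream", 2007, 3300.0, 0),
        ("Gentoo", "Biscoe", 2008, 5000.0, 1),
    ],
)

# Materialize the aggregated features as a table inside the database.
con.execute(
    """
    CREATE TABLE penguin_features AS
    SELECT species, island, year,
           COUNT(*) AS n,
           AVG(body_mass_g) AS avg_mass,
           AVG(is_male) AS pct_male
    FROM penguins
    GROUP BY species, island, year
    """
)

# Write the small materialized table to a file, header row first.
out_path = os.path.join(tempfile.mkdtemp(), "penguin_features.csv")
cur = con.execute("SELECT * FROM penguin_features ORDER BY species, island, year")
with open(out_path, "w", newline="") as fh:
    writer = csv.writer(fh)
    writer.writerow([d[0] for d in cur.description])
    writer.writerows(cur)

with open(out_path) as fh:
    print(fh.read())
```

Only the aggregated rows leave the database. The per-penguin data is never loaded into Python.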

In conclusion, we built, compiled, and executed an advanced feature engineering workflow entirely inside DuckDB using Ibis. We showed how to inspect the generated SQL, materialize results directly in the database, and export them for downstream use while preserving portability across analytical backends. This approach reinforces the core idea behind Ibis: keep computation close to the data, minimize unnecessary data movement, and maintain a single, reusable Python codebase that scales from local experimentation to production databases.



Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.

ztoog.com