A full-scale error-corrected quantum computer will be able to solve some problems that are impossible for classical computers, but building such a device is a huge endeavor. We are proud of the milestones that we’ve achieved toward a fully error-corrected quantum computer, but that large-scale computer is still some number of years away. Meanwhile, we’re using our current noisy quantum processors as flexible platforms for quantum experiments.

In contrast to an error-corrected quantum computer, experiments in noisy quantum processors are currently limited to a few thousand quantum operations or gates before noise degrades the quantum state. In 2019 we implemented a specific computational task called random circuit sampling on our quantum processor and showed for the first time that it outperformed state-of-the-art classical supercomputing.

Although they have not yet reached beyond-classical capabilities, we have also used our processors to observe novel physical phenomena, such as time crystals and Majorana edge modes, and have made new experimental discoveries, such as robust bound states of interacting photons and the noise-resilience of Majorana edge modes of Floquet evolutions.

We expect that even in this intermediate, noisy regime, we will find applications for the quantum processors in which useful quantum experiments can be performed much faster than they can be calculated on classical supercomputers. We call these “computational applications” of the quantum processors. No one has yet demonstrated such a beyond-classical computational application. So as we aim to achieve this milestone, the question is: What is the best way to compare a quantum experiment run on such a quantum processor to the computational cost of a classical application?

We already know how to compare an error-corrected quantum algorithm to a classical algorithm. In that case, the field of computational complexity tells us that we can compare their respective computational costs, that is, the number of operations required to accomplish the task. But with our current experimental quantum processors, the situation is not so well defined.

In “Effective quantum volume, fidelity and computational cost of noisy quantum processing experiments”, we provide a framework for measuring the computational cost of a quantum experiment, introducing the experiment’s “effective quantum volume”, which is the number of quantum operations or gates that contribute to a measurement outcome. We apply this framework to evaluate the computational cost of three recent experiments: our random circuit sampling experiment, our experiment measuring quantities known as “out of time order correlators” (OTOCs), and a recent experiment on a Floquet evolution related to the Ising model. We are particularly excited about OTOCs because they provide a direct way to experimentally measure the effective quantum volume of a circuit (a sequence of quantum gates or operations), which is itself a computationally difficult task for a classical computer to estimate precisely. OTOCs are also important in nuclear magnetic resonance and electron spin resonance spectroscopy. Therefore, we believe that OTOC experiments are a promising candidate for a first-ever computational application of quantum processors.

|

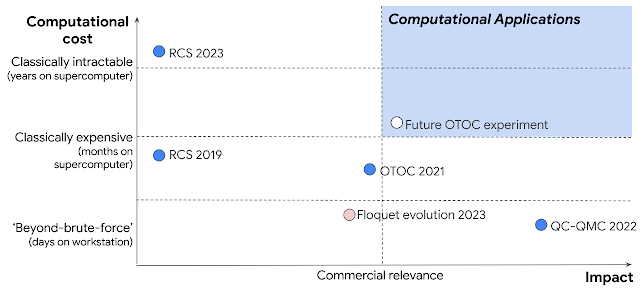

| Plot of computational cost and impact of some recent quantum experiments. While some (e.g., QC-QMC 2022) have had high impact and others (e.g., RCS 2023) have had high computational cost, none have yet been both useful and hard enough to be considered a “computational application.” We hypothesize that our future OTOC experiment could be the first to pass this threshold. Other experiments plotted are referenced in the text. |

Random circuit sampling: Evaluating the computational cost of a noisy circuit

When it comes to running a quantum circuit on a noisy quantum processor, there are two competing considerations. On one hand, we aim to do something that is difficult to achieve classically. The computational cost (the number of operations required to accomplish the task on a classical computer) depends on the quantum circuit’s effective quantum volume: the larger the volume, the higher the computational cost, and the more a quantum processor can outperform a classical one.

But on the other hand, on a noisy processor, each quantum gate can introduce an error to the calculation. The more operations, the higher the error, and the lower the fidelity of the quantum circuit in measuring a quantity of interest. Under this consideration, we might prefer simpler circuits with a smaller effective volume, but these are easily simulated by classical computers. The balance of these competing considerations, which we want to maximize, is called the “computational resource”, shown below.

|

| Graph of the tradeoff between quantum volume and noise in a quantum circuit, captured in a quantity called the “computational resource.” For a noisy quantum circuit, this will initially increase with the computational cost, but eventually, noise will overrun the circuit and cause it to decrease. |

We can see how these competing considerations play out in a simple “hello world” program for quantum processors, known as random circuit sampling (RCS), which was the first demonstration of a quantum processor outperforming a classical computer. Any error in any gate is likely to make this experiment fail. Inevitably, this is a hard experiment to achieve with significant fidelity, and thus it also serves as a benchmark of system fidelity. But it also corresponds to the highest known computational cost achievable by a quantum processor. We recently reported the most powerful RCS experiment performed to date, with a low measured experimental fidelity of 1.7×10⁻³ and a high theoretical computational cost of ~10²³. These quantum circuits had 700 two-qubit gates. We estimate that this experiment would take ~47 years to simulate on the world’s largest supercomputer. While this checks one of the two boxes needed for a computational application (it outperforms a classical supercomputer), it is not a particularly useful application per se.
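To get a feel for why “any error in any gate” matters, the quoted numbers can be turned into a rough per-gate error. This is a minimal sketch under an assumption that is not stated in the post: the circuit fidelity is the product of identical, independent per-gate fidelities, and all error is attributed to the 700 two-qubit gates (single-qubit gates, measurement, and idle errors are lumped in). The function name is hypothetical.

```python
def implied_error_per_gate(fidelity: float, n_gates: int) -> float:
    """Average per-gate error eps such that (1 - eps) ** n_gates == fidelity.

    Assumption (not from the post): errors are independent and identical,
    and every error source is folded into the counted gates.
    """
    if not (0.0 < fidelity <= 1.0):
        raise ValueError("fidelity must be in (0, 1]")
    if n_gates <= 0:
        raise ValueError("n_gates must be positive")
    return 1.0 - fidelity ** (1.0 / n_gates)


if __name__ == "__main__":
    # Numbers quoted in the text: fidelity 1.7e-3 with 700 two-qubit gates.
    eps = implied_error_per_gate(1.7e-3, 700)
    print(f"implied average error per two-qubit gate: {eps:.2%}")
```

Under this simplified model the implied error comes out just under one percent per gate, which shows how quickly even small gate errors compound over several hundred gates.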

OTOCs and Floquet evolution: The effective quantum volume of a local observable

There are many open questions in quantum many-body physics that are classically intractable, so running some of these experiments on our quantum processor has great potential. We typically think of these experiments a bit differently than we do the RCS experiment. Rather than measuring the quantum state of all qubits at the end of the experiment, we are usually concerned with more specific, local physical observables. Because not every operation in the circuit necessarily impacts the observable, a local observable’s effective quantum volume might be smaller than that of the full circuit needed to run the experiment.

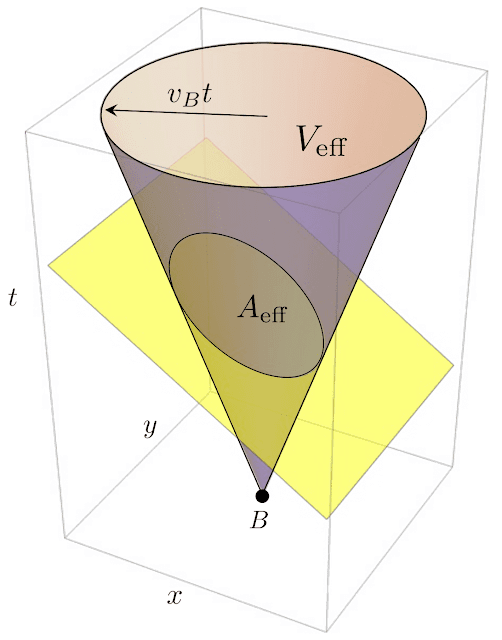

We can understand this by applying the concept of a light cone from relativity, which determines which events in space-time can be causally connected: some events cannot possibly influence one another because information takes time to propagate between them. We say that two such events are outside their respective light cones. In a quantum experiment, we replace the light cone with something called a “butterfly cone,” where the growth of the cone is determined by the butterfly speed, the speed with which information spreads throughout the system. (This speed is characterized by measuring OTOCs, discussed later.) The effective quantum volume of a local observable is essentially the volume of the butterfly cone, including only the quantum operations that are causally connected to the observable. So, the faster information spreads in a system, the larger the effective volume and therefore the harder it is to simulate classically.

|

| A depiction of the effective volume Veff of the gates contributing to the local observable B. A related quantity called the effective area Aeff is represented by the cross-section of the plane and the cone. The perimeter of the base corresponds to the front of information travel that moves with the butterfly velocity vB. |
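The cone picture can be illustrated with a toy gate count. This is only a sketch under assumptions that are not from the post: a one-dimensional chain of qubits, a brickwork circuit of nearest-neighbor two-qubit gates, an observable measured on a single qubit after the last layer, and a cone whose half-width grows linearly by `velocity` sites per layer going backward in time. The function name and parameters are hypothetical, and the cone boundary is crude.

```python
import math


def cone_gate_count(n_qubits: int, depth: int, center: int, velocity: float) -> int:
    """Count brickwork gates lying inside a linear cone around `center`.

    Toy model (assumption): layer `layer` applies gates on pairs (q, q + 1)
    with q having the same parity as `layer`. A gate counts if both of its
    qubits lie within velocity * (layers back from the measurement) sites
    of the measured qubit.
    """
    total = 0
    for layer in range(depth):
        steps_back = depth - layer  # 1 for the final layer
        half_width = velocity * steps_back
        lo = max(0, math.ceil(center - half_width))
        hi = min(n_qubits - 1, math.floor(center + half_width))
        for q in range(layer % 2, n_qubits - 1, 2):
            if lo <= q and q + 1 <= hi:
                total += 1
    return total


if __name__ == "__main__":
    n, d, c = 21, 10, 10
    print("all gates in circuit:  ", cone_gate_count(n, d, c, velocity=1e9))
    print("gates in light cone:   ", cone_gate_count(n, d, c, velocity=1.0))
    print("gates in slower cone:  ", cone_gate_count(n, d, c, velocity=0.25))
```

A slower spreading speed gives a narrower cone and hence fewer contributing gates, which is the sense in which a small butterfly speed leads to a small effective volume.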

We apply this framework to a recent experiment implementing a so-called Floquet Ising model, a physical model related to the time crystal and Majorana experiments. From the data of this experiment, one can directly estimate an effective fidelity of 0.37 for the largest circuits. With the measured gate error rate of ~1%, this gives an estimated effective volume of ~100. This is much smaller than the light cone, which included two thousand gates on 127 qubits. So, the butterfly velocity of this experiment is quite small. Indeed, we argue that the effective volume covers only ~28 qubits, not 127, using numerical simulations that obtain a larger precision than the experiment. This small effective volume has also been corroborated with the OTOC technique. Although this was a deep circuit, the estimated computational cost is 5×10¹¹, almost one trillion times less than the recent RCS experiment. Correspondingly, this experiment can be simulated in less than a second per data point on a single A100 GPU. So, while this is certainly a useful application, it does not fulfill the second requirement of a computational application: substantially outperforming a classical simulation.
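The step from an effective fidelity of 0.37 and a ~1% gate error to an effective volume of ~100 can be reproduced with a short calculation. This sketch assumes a simple model that the post does not spell out: each gate inside the effective volume fails independently with the same probability, so the effective fidelity is (1 − error) raised to the effective volume. The function name is hypothetical.

```python
import math


def effective_volume(effective_fidelity: float, gate_error: float) -> float:
    """Solve (1 - gate_error) ** V == effective_fidelity for V.

    Assumption (not from the post): independent, identical gate errors,
    with only gates inside the effective volume affecting the observable.
    """
    if not (0.0 < effective_fidelity <= 1.0):
        raise ValueError("effective_fidelity must be in (0, 1]")
    if not (0.0 < gate_error < 1.0):
        raise ValueError("gate_error must be in (0, 1)")
    return math.log(effective_fidelity) / math.log(1.0 - gate_error)


if __name__ == "__main__":
    # Numbers quoted in the text for the Floquet Ising experiment.
    v = effective_volume(0.37, 0.01)
    print(f"estimated effective volume: about {v:.0f} gates")
```

The result is close to 100 gates, far fewer than the two thousand gates in the light cone mentioned above.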

Information scrambling experiments with OTOCs are a promising avenue for a computational application. OTOCs can tell us important physical information about a system, such as the butterfly velocity, which is critical for precisely measuring the effective quantum volume of a circuit. OTOC experiments with fast entangling gates offer a potential path for a first beyond-classical demonstration of a computational application with a quantum processor. Indeed, in our experiment from 2021 we achieved an effective fidelity of Feff ~ 0.06 with an experimental signal-to-noise ratio of ~1, corresponding to an effective volume of ~250 gates and a computational cost of 2×10¹².

While these early OTOC experiments are not sufficiently complex to outperform classical simulations, there is a deep physical reason why OTOC experiments are good candidates for the first demonstration of a computational application. Most of the interesting quantum phenomena accessible to near-term quantum processors that are hard to simulate classically correspond to a quantum circuit exploring many, many quantum energy levels. Such evolutions are typically chaotic, and standard time-order correlators (TOCs) decay very quickly to a purely random average in this regime. There is no experimental signal left. This does not happen for OTOC measurements, which allows us to grow complexity at will, limited only by the error per gate. We anticipate that a reduction of the error rate by half would double the computational cost, pushing this experiment to the beyond-classical regime.
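The link between error rate and reachable circuit size can be made plausible with a simple error model. This is a sketch under an assumption not stated in the post: each gate in the effective volume fails independently with probability ε, so the effective fidelity decays exponentially with the effective volume.

```latex
F_{\mathrm{eff}} \approx (1-\epsilon)^{V_{\mathrm{eff}}} \approx e^{-\epsilon V_{\mathrm{eff}}}
\quad\Longrightarrow\quad
V_{\mathrm{eff}} \approx \frac{\ln\!\left(1/F_{\mathrm{eff}}\right)}{\epsilon}
```

At a fixed target fidelity, halving ε therefore roughly doubles the effective volume that can be reached. How that volume translates into computational cost depends on the cost model in the paper, which is not reproduced here.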

Conclusion

Using the effective quantum volume framework we have developed, we have determined the computational cost of our RCS and OTOC experiments, as well as a recent Floquet evolution experiment. While none of these meet the requirements yet for a computational application, we expect that with improved error rates, an OTOC experiment will be the first beyond-classical, useful application of a quantum processor.