Since 2017, in what spare time I have (ha!), I have helped my colleague Eric Berger host his Houston-area weather forecasting site, Space City Weather. It's an interesting hosting challenge: on a typical day, SCW does maybe 20,000–30,000 page views to 10,000–15,000 unique visitors, which is a relatively easy load to handle with minimal work. But when severe weather events happen (especially in the summer, when hurricanes lurk in the Gulf of Mexico), the site's traffic can spike to more than 1,000,000 page views in 12 hours. That level of traffic requires a bit more prep to handle.
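
For a rough sense of scale, the figures above can be turned into average rates. The sketch below is only back-of-the-envelope arithmetic in Python: it assumes page views are spread evenly across the window (real traffic is burstier than that), and the function name is purely illustrative.

```python
# Back-of-the-envelope average rates from the page-view figures quoted above.
# Assumption: views are spread evenly over the window, which real traffic is not.

SECONDS_PER_HOUR = 3600


def avg_views_per_second(page_views: int, hours: float) -> float:
    """Average page views per second over a window of `hours`."""
    return page_views / (hours * SECONDS_PER_HOUR)


typical_day = avg_views_per_second(30_000, 24)      # upper end of a normal day
storm_spike = avg_views_per_second(1_000_000, 12)   # severe-weather spike

print(f"typical day: {typical_day:.2f} views/s")    # 0.35
print(f"storm spike: {storm_spike:.2f} views/s")    # 23.15
print(f"ratio: {storm_spike / typical_day:.0f}x")   # 67x
```

Even as an average, the spike works out to roughly 67 times the busy end of a normal day, and that counts page views only, not the individual requests each page generates.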

Lee Hutchinson

For a very long time, I ran SCW on a backend stack made up of HAProxy for SSL termination, Varnish Cache for on-box caching, and Nginx for the actual web server application, all fronted by Cloudflare to absorb the majority of the load. (I wrote about this setup at length on Ars a few years ago for folks who want some more in-depth details.) This stack was fully battle-tested and ready to devour whatever traffic we threw at it, but it was also annoyingly complex, with multiple cache layers to contend with, and that complexity made troubleshooting issues more difficult than I would have liked.

So during some winter downtime two years ago, I took the opportunity to jettison some complexity and reduce the hosting stack down to a single monolithic web server application: OpenLiteSpeed.

Out with the old, in with the new

I didn’t know too much about OpenLiteSpeed (“OLS” to its friends) other than that it’s mentioned a bunch in discussions about WordPress hosting, and since SCW runs WordPress, I started to get interested. OLS seemed to get a lot of praise for its integrated caching, especially when WordPress was involved; it was purported to be quite quick compared to Nginx; and, frankly, after five-ish years of admining the same stack, I was interested in changing things up. OpenLiteSpeed it was!

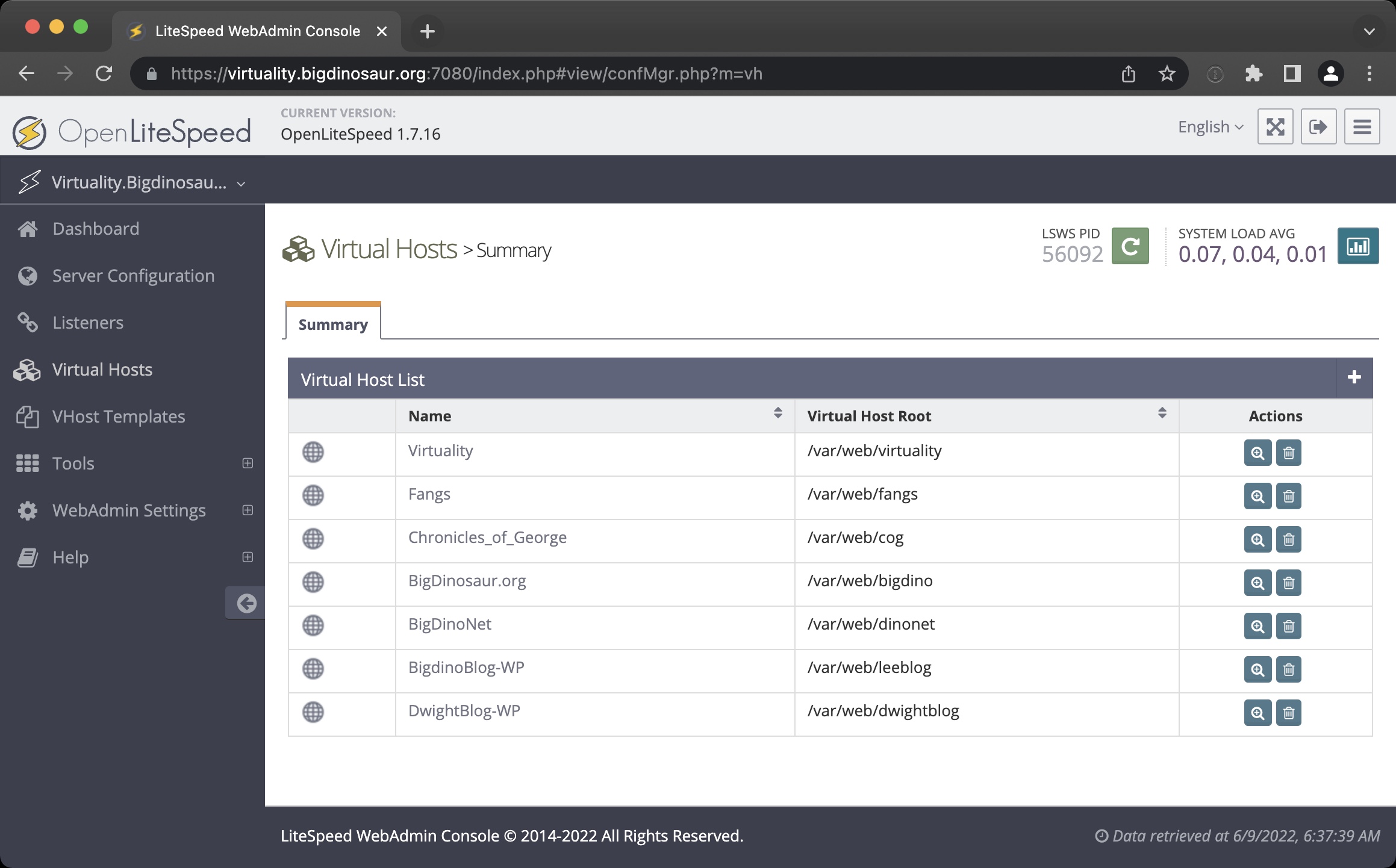

The first major adjustment to deal with was that OLS is primarily configured through an actual GUI, with all the annoying potential problems that brings with it (another port to secure, another password to manage, another public point of entry into the backend, more PHP resources dedicated just to the admin interface). But the GUI was fast, and it mostly exposed the settings that needed exposing. Translating the existing Nginx WordPress configuration into OLS-speak was a good acclimation exercise, and I eventually settled on Cloudflare tunnels as an acceptable method for keeping the admin console hidden away and notionally secure.

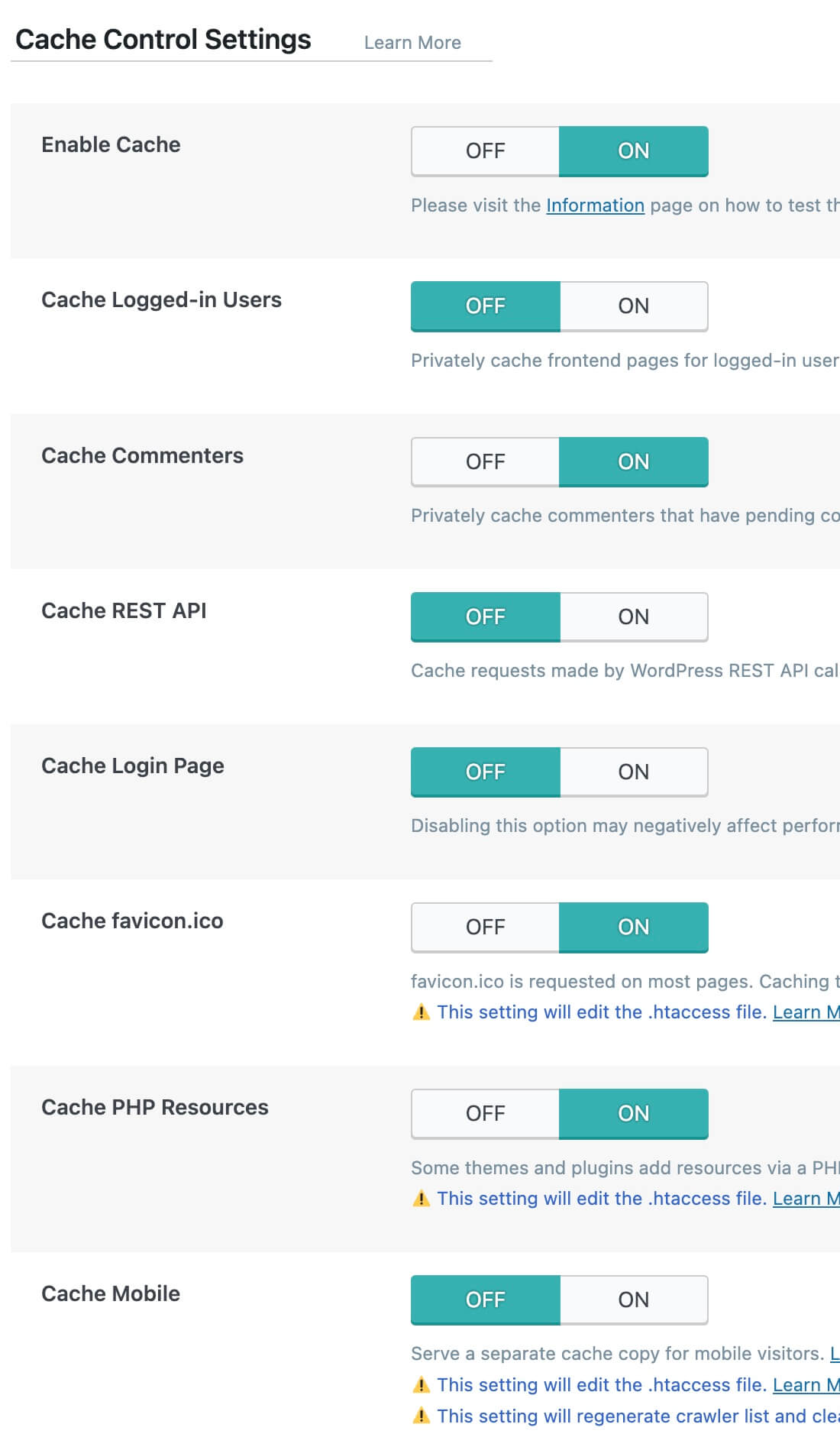

The other major adjustment was the OLS LiteSpeed Cache plugin for WordPress, which is the primary tool one uses to configure how WordPress itself interacts with OLS and its built-in cache. It’s a massive plugin with pages and pages of configurable options, many of which are concerned with driving utilization of the Quic.Cloud CDN service (which is operated by LiteSpeed Technology, the company that created OpenLiteSpeed and its for-pay sibling, LiteSpeed).

Getting the most out of WordPress on OLS meant spending some time in the plugin, figuring out which of the options would help and which would hurt. (Perhaps unsurprisingly, there are plenty of ways in there to get oneself into stupid amounts of trouble by being too aggressive with caching.) Fortunately, Space City Weather provides a great testing ground for web servers, being a nicely active site with a very cache-friendly workload, and so I hammered out a starting configuration with which I was reasonably happy and, while speaking the ancient holy words of ritual, flipped the cutover switch. HAProxy, Varnish, and Nginx went silent, and OLS took up the load.