Automatic speech recognition (ASR) is a well-established technology that is widely adopted for applications such as conference calls, streamed video transcription and voice commands. While the challenges for this technology center on noisy audio inputs, the visual stream in multimodal videos (e.g., TV, online edited videos) can provide strong cues for improving the robustness of ASR systems. This is called audiovisual ASR (AV-ASR).

Although lip motion can provide strong signals for speech recognition and is the most common area of focus for AV-ASR, the mouth is often not directly visible in videos in the wild (e.g., due to egocentric viewpoints, face coverings, and low resolution). Therefore, a new emerging area of research is unconstrained AV-ASR (e.g., AVATAR), which investigates the contribution of entire visual frames, and not just the mouth region.

Building audiovisual datasets for training AV-ASR models, however, is challenging. Datasets such as How2 and VisSpeech have been created from instructional videos online, but they are small in size. In contrast, the models themselves are typically large and consist of both visual and audio encoders, so they tend to overfit on these small datasets. Nonetheless, there have been a number of recently released large-scale audio-only models that are heavily optimized via large-scale training on massive audio-only data obtained from audio books, such as LibriLight and LibriSpeech. These models contain billions of parameters, are readily available, and show strong generalization across domains.

With the above challenges in mind, in “AVFormer: Injecting Vision into Frozen Speech Models for Zero-Shot AV-ASR”, we present a simple method for augmenting existing large-scale audio-only models with visual information, at the same time performing lightweight domain adaptation. AVFormer injects visual embeddings into a frozen ASR model (similar to how Flamingo injects visual information into large language models for vision-text tasks) using lightweight trainable adapters that can be trained on a small amount of weakly labeled video data with minimum additional training time and parameters. We also introduce a simple curriculum scheme during training, which we show is crucial to enable the model to jointly process audio and visual information effectively. The resulting AVFormer model achieves state-of-the-art zero-shot performance on three different AV-ASR benchmarks (How2, VisSpeech and Ego4D), while also crucially preserving decent performance on traditional audio-only speech recognition benchmarks (i.e., LibriSpeech).

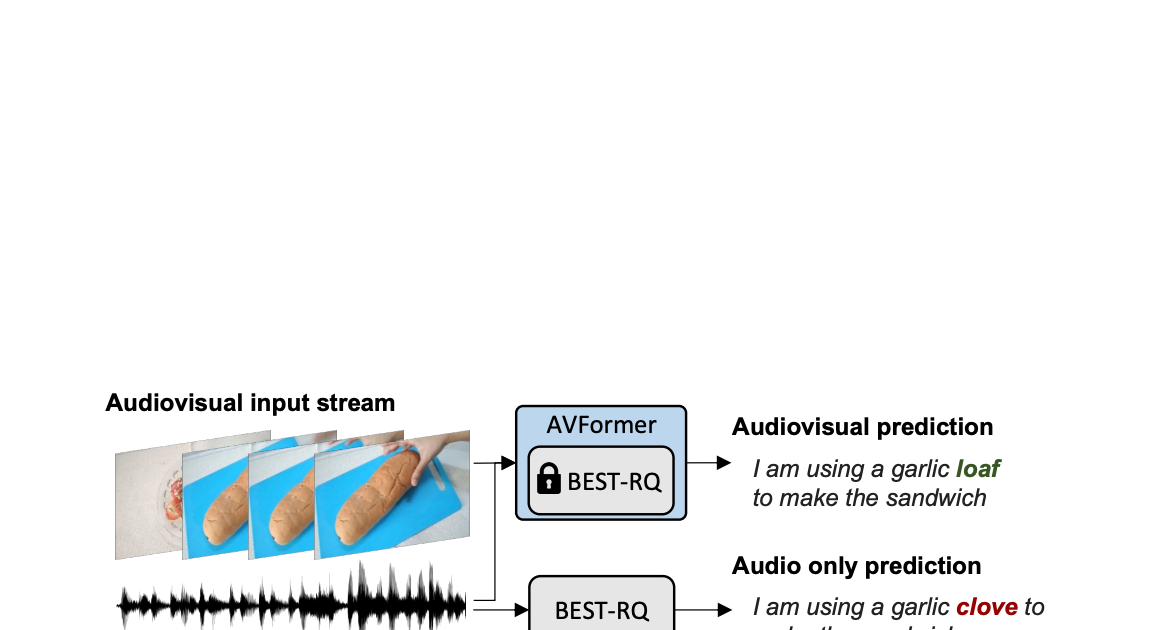

Unconstrained audiovisual speech recognition. We inject vision into a frozen speech model (BEST-RQ, in grey) for zero-shot audiovisual ASR via lightweight modules to create a parameter- and data-efficient model called AVFormer (blue). The visual context can provide helpful clues for robust speech recognition, especially when the audio signal is noisy (the visual loaf of bread helps correct the audio-only mistake “clove” to “loaf” in the generated transcript).

Injecting vision using lightweight modules

Our goal is to add visual understanding capabilities to an existing audio-only ASR model while maintaining its generalization performance across domains (both AV and audio-only).

To achieve this, we augment an existing state-of-the-art ASR model (BEST-RQ) with the following two components: (i) a linear visual projector and (ii) lightweight adapters. The former projects visual features into the audio token embedding space. This process allows the model to properly connect separately pre-trained visual feature and audio input token representations. The latter then minimally modifies the model to add understanding of multimodal inputs from videos. We then train these additional modules on unlabeled web videos from the HowTo100M dataset, using the outputs of an ASR model as pseudo ground truth, while keeping the rest of the BEST-RQ model frozen. Such lightweight modules enable data efficiency and strong generalization of performance.
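To make the two components concrete, here is a minimal pure-Python sketch of a linear visual projector and a residual bottleneck adapter. It is an illustration under stated assumptions, not the AVFormer implementation: the toy dimensions, the ReLU nonlinearity, the zero-initialized up-projection, the prepending of visual tokens to the audio tokens, and all function names are hypothetical choices made for this example.

```python
import random

def linear(x, weight, bias):
    """Apply y = W x + b to one vector; weight is a list of rows [out][in]."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]

def init_linear(n_in, n_out, rng, scale=0.1):
    """Return (weight, bias) with small random weights and a zero bias."""
    weight = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weight, [0.0] * n_out

def project_visual(features, weight, bias):
    """Linear visual projector: map each visual feature into the audio token space."""
    return [linear(f, weight, bias) for f in features]

def bottleneck_adapter(token, down, up):
    """Residual bottleneck adapter: token + up(relu(down(token)))."""
    hidden = [max(0.0, h) for h in linear(token, *down)]
    return [t + u for t, u in zip(token, linear(hidden, *up))]

rng = random.Random(0)
VISUAL_DIM, AUDIO_DIM, BOTTLENECK = 6, 4, 2  # toy sizes, assumed for illustration

projector = init_linear(VISUAL_DIM, AUDIO_DIM, rng)
down = init_linear(AUDIO_DIM, BOTTLENECK, rng)
# Assumption: the up-projection starts at zero, so the adapter is initially an identity.
up = ([[0.0] * BOTTLENECK for _ in range(AUDIO_DIM)], [0.0] * AUDIO_DIM)

visual_features = [[rng.random() for _ in range(VISUAL_DIM)] for _ in range(4)]
audio_tokens = [[rng.random() for _ in range(AUDIO_DIM)] for _ in range(10)]

visual_tokens = project_visual(visual_features, *projector)
sequence = visual_tokens + audio_tokens  # assumption: visual tokens are prepended
adapted = [bottleneck_adapter(tok, down, up) for tok in sequence]

print(len(sequence), len(sequence[0]))  # 14 tokens, each of size AUDIO_DIM
```

With the zero-initialized up-projection assumed here, the adapter leaves every token unchanged at the start of training, so the frozen model's behavior is preserved until the adapter weights are updated.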

We evaluated our extended model on AV-ASR benchmarks in a zero-shot setting, where the model is never trained on a manually annotated AV-ASR dataset.

Curriculum learning for vision injection

After the initial evaluation, we discovered empirically that with a naïve single round of joint training, the model struggles to learn both the adapters and the visual projectors in one go. To mitigate this issue, we introduced a two-phase curriculum learning strategy that decouples these two factors (domain adaptation and visual feature integration) and trains the network sequentially. In the first phase, the adapter parameters are optimized without feeding visual tokens at all. Once the adapters are trained, we add the visual tokens and train the visual projection layers alone in the second phase while the trained adapters are kept frozen.

The first phase focuses on audio domain adaptation. By the second phase, the adapters are completely frozen and the visual projector must simply learn to generate visual prompts that project the visual tokens into the audio space. In this way, our curriculum learning strategy allows the model to incorporate visual inputs as well as adapt to new audio domains in AV-ASR benchmarks. We apply each phase just once, as an iterative application of alternating phases leads to performance degradation.
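The two-phase schedule described above can be written down as a small configuration. The sketch below only records which parameter groups are trainable and whether visual tokens are fed in each phase; the group names and the `Phase` class are hypothetical labels chosen for this example, and no actual training loop is shown.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Phase:
    name: str
    trainable: frozenset      # parameter groups that receive gradient updates
    use_visual_tokens: bool   # whether visual tokens are fed to the model

# Hypothetical names for the four parameter groups discussed in the text.
PARAM_GROUPS = ("asr_backbone", "visual_encoder", "adapters", "visual_projector")

CURRICULUM = (
    # Phase 1: audio domain adaptation, adapters only, no visual tokens.
    Phase("phase_1_domain_adaptation", frozenset({"adapters"}), False),
    # Phase 2: visual feature integration, projector only, adapters frozen.
    Phase("phase_2_visual_integration", frozenset({"visual_projector"}), True),
)

def frozen_groups(phase):
    """Return the parameter groups that stay fixed during the given phase."""
    return [g for g in PARAM_GROUPS if g not in phase.trainable]

for phase in CURRICULUM:  # each phase is applied exactly once, in order
    print(phase.name,
          "| train:", sorted(phase.trainable),
          "| frozen:", frozen_groups(phase),
          "| visual tokens:", phase.use_visual_tokens)
```

Keeping the schedule as a fixed two-element tuple mirrors the point that each phase runs once rather than alternating.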

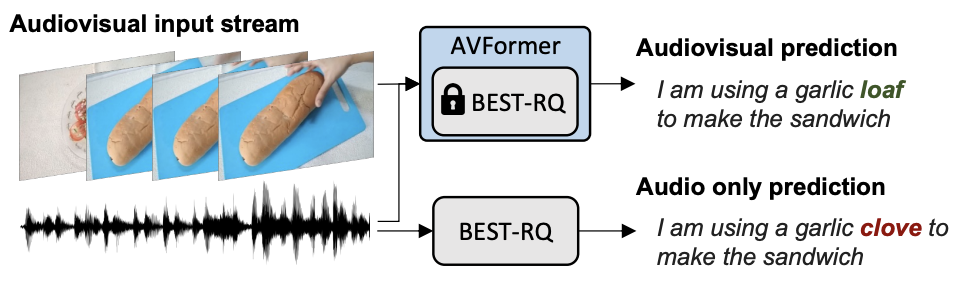

Overall architecture and training procedure for AVFormer. The architecture consists of a frozen Conformer encoder-decoder model and a frozen CLIP encoder (frozen layers shown in gray with a lock symbol), in conjunction with two lightweight trainable modules, (i) a visual projection layer (orange) and (ii) bottleneck adapters (blue), to enable multimodal domain adaptation. We propose a two-phase curriculum learning strategy: the adapters (blue) are first trained without any visual tokens, after which the visual projection layer (orange) is tuned while all the other parts are kept frozen.

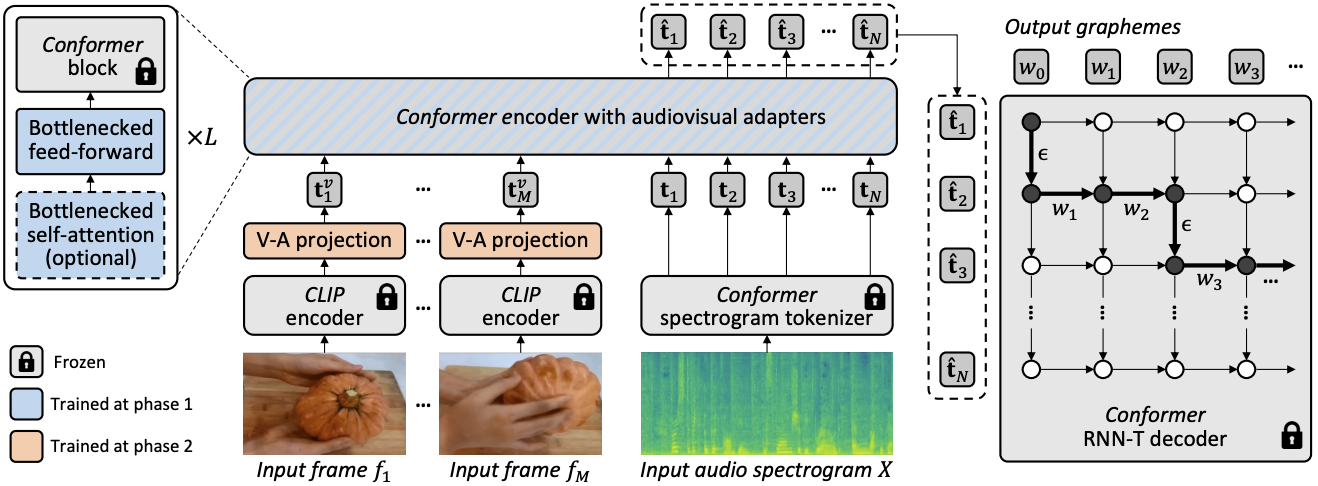

The plots show that without curriculum learning, our AV-ASR model is worse than the audio-only baseline across all datasets, with the gap increasing as more visual tokens are added. In contrast, when the proposed two-phase curriculum is applied, our AV-ASR model performs significantly better than the baseline audio-only model.

Effects of curriculum learning. Red and blue lines are for audiovisual models and are shown on 3 datasets in the zero-shot setting (lower WER % is better). Using the curriculum helps on all 3 datasets (for How2 (a) and Ego4D (c) it is crucial for outperforming audio-only performance). Performance improves up until 4 visual tokens, at which point it saturates.
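Since the results are reported as WER %, a short sketch of the standard word error rate definition may help: the word-level edit distance (substitutions, insertions and deletions) between hypothesis and reference, divided by the number of reference words. The function name and the example sentences are hypothetical, loosely inspired by the “clove” versus “loaf” example; this is not the evaluation code used for the reported numbers.

```python
def word_error_rate(reference, hypothesis):
    """Return WER in percent using word-level Levenshtein distance."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return 100.0 * prev[-1] / len(ref)

# Hypothetical transcripts: one substituted word out of five reference words.
print(word_error_rate("slice the loaf of bread", "slice the clove of bread"))  # 20.0
```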

Results in zero-shot AV-ASR

We compare AVFormer to BEST-RQ, the audio version of our model, and AVATAR, the state of the art in AV-ASR, for zero-shot performance on the three AV-ASR benchmarks: How2, VisSpeech and Ego4D. AVFormer outperforms AVATAR and BEST-RQ on all three, even when both are trained on LibriSpeech and the full set of HowTo100M. This is notable because for BEST-RQ, this involves training 600M parameters, while AVFormer only trains 4M parameters and therefore requires only a small fraction of the training dataset (5% of HowTo100M). Moreover, we also evaluate performance on LibriSpeech, which is audio-only, and AVFormer outperforms both baselines.
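As a quick back-of-the-envelope check on those figures, the snippet below computes the ratio of trained parameters. It uses only the two parameter counts quoted above; the variable names are chosen for this example.

```python
TRAINED_PARAMS_AVFORMER = 4_000_000    # trained parameters quoted for AVFormer
TRAINED_PARAMS_BEST_RQ = 600_000_000   # trained parameters quoted for BEST-RQ

param_fraction = TRAINED_PARAMS_AVFORMER / TRAINED_PARAMS_BEST_RQ
reduction_factor = TRAINED_PARAMS_BEST_RQ // TRAINED_PARAMS_AVFORMER

print(f"AVFormer trains {param_fraction:.2%} of the parameters")  # 0.67%
print(f"That is {reduction_factor}x fewer trained parameters")    # 150x
```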

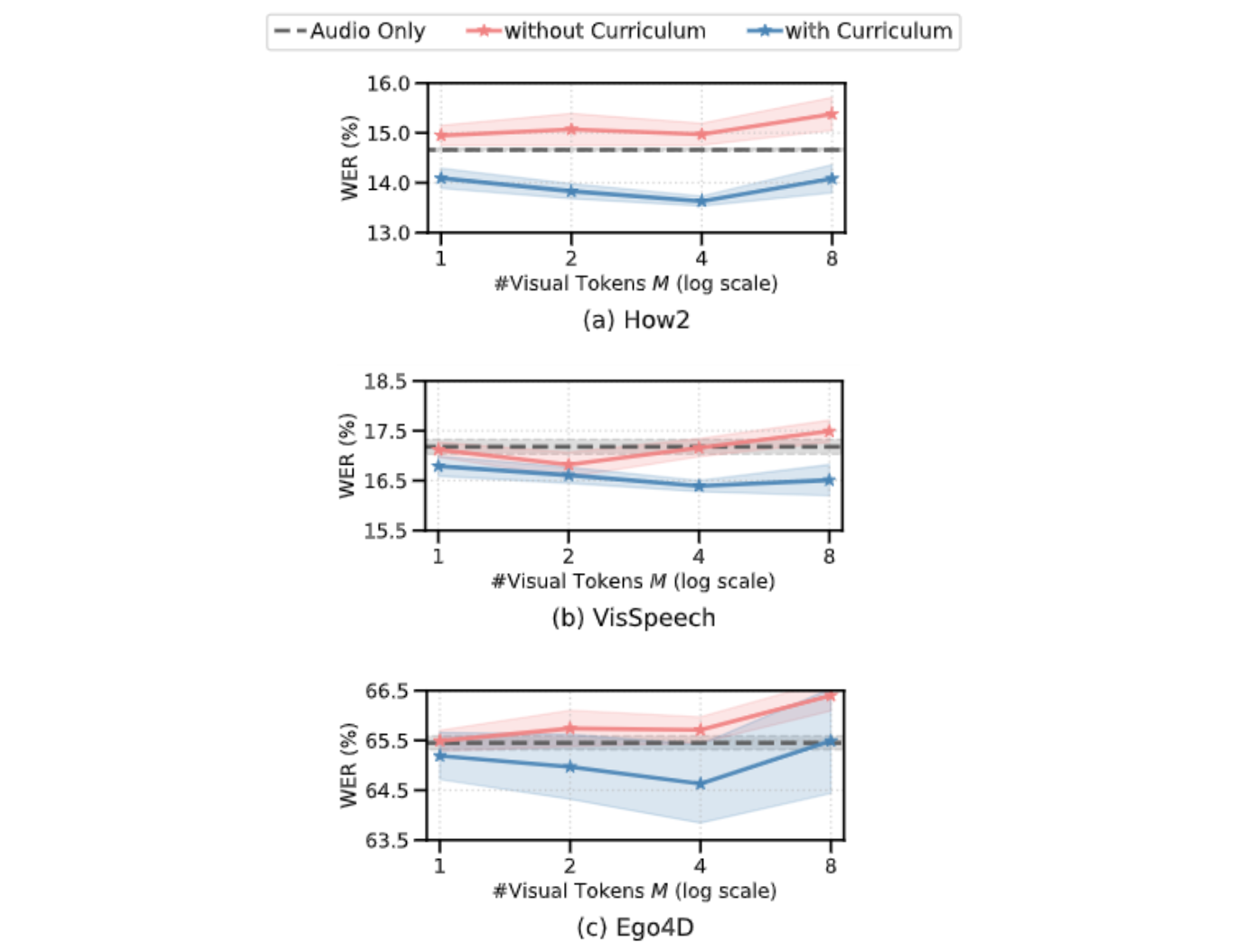

Comparison to state-of-the-art methods for zero-shot performance across different AV-ASR datasets. We also show performance on LibriSpeech, which is audio-only. Results are reported as WER % (lower is better). AVATAR and BEST-RQ are finetuned end-to-end (all parameters) on HowTo100M, whereas AVFormer works effectively even with 5% of the dataset thanks to the small set of finetuned parameters.

Conclusion

We introduce AVFormer, a lightweight method for adapting existing, frozen state-of-the-art ASR models for AV-ASR. Our approach is practical and efficient, and achieves impressive zero-shot performance. As ASR models get larger and larger, tuning the entire parameter set of pre-trained models becomes impractical (even more so for different domains). Our method seamlessly allows both domain transfer and visual input mixing in the same, parameter-efficient model.

Acknowledgements

This research was conducted by Paul Hongsuck Seo, Arsha Nagrani and Cordelia Schmid.