NVIDIA researchers released PersonaPlex-7B-v1, a full duplex speech to speech conversational model that targets natural voice interactions with precise persona control.

From ASR→LLM→TTS to a single full duplex model

Conventional voice assistants usually run a cascade. Automatic Speech Recognition (ASR) converts speech to text, a language model generates a text reply, and Text to Speech (TTS) converts it back to audio. Each stage adds latency, and the pipeline can’t handle overlapping speech, natural interruptions, or dense backchannels.

PersonaPlex replaces this stack with a single Transformer model that performs streaming speech understanding and speech generation in one network. The model operates on continuous audio encoded with a neural codec and predicts both text tokens and audio tokens autoregressively. Incoming user audio is incrementally encoded while PersonaPlex simultaneously generates its own speech, which enables barge in, overlaps, rapid turn taking, and contextual backchannels.

PersonaPlex runs in a dual stream configuration. One stream tracks user audio, the other stream tracks agent speech and text. Both streams share the same model state, so the agent can keep listening while speaking and can adjust its response when the user interrupts. This design is directly inspired by Kyutai’s Moshi full duplex framework.
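The dual stream idea can be pictured as one loop in which every step both consumes a user frame and emits an agent frame. The sketch below is a toy illustration only: `DuplexState`, `toy_step`, and `run_duplex` are hypothetical names, the integer "tokens" are placeholders, and the yield-when-the-user-speaks rule stands in for what the real model learns.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class DuplexState:
    """Shared state that both streams read and write (hypothetical)."""
    user_tokens: List[int] = field(default_factory=list)
    agent_text: List[str] = field(default_factory=list)
    agent_audio: List[int] = field(default_factory=list)


def toy_step(state: DuplexState, user_frame: int) -> Tuple[str, int]:
    """Consume one user frame and emit one agent frame in the same step.

    Placeholder policy, not the real model: emit silence (audio token 0)
    whenever the user frame is non-zero, otherwise keep talking.
    """
    state.user_tokens.append(user_frame)
    user_speaking = user_frame != 0
    text, audio = ("<pad>", 0) if user_speaking else ("word", 1)
    state.agent_text.append(text)
    state.agent_audio.append(audio)
    return text, audio


def run_duplex(user_frames: List[int]) -> DuplexState:
    """Run the listen-and-speak loop over a sequence of user frames."""
    state = DuplexState()
    for frame in user_frames:
        toy_step(state, frame)
    return state


# The user is silent, barges in for two frames, then goes silent again.
state = run_duplex([0, 0, 7, 7, 0])
print(state.agent_audio)  # [1, 1, 0, 0, 1]
```

Because listening and speaking happen in the same step, the agent can react to an interruption at the very next frame instead of waiting for a full ASR, LLM, and TTS round trip.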

Hybrid prompting, voice control and role control

PersonaPlex uses two prompts to define the conversational identity.

- The voice prompt is a sequence of audio tokens that encodes vocal characteristics, speaking style, and prosody.

- The text prompt describes role, background, organization information, and scenario context.

Together, these prompts constrain both the linguistic content and the acoustic behavior of the agent. On top of this, a system prompt supports fields such as name, business name, agent name, and business information, with a budget of up to 200 tokens.
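One way to picture the hybrid prompt is as a small record that carries the voice tokens, the text prompt, and the system prompt fields, plus a check against the 200 token budget. This is a minimal sketch under stated assumptions: the `Persona` class, its field names, and the `key=value` layout are hypothetical, and whitespace splitting is only a crude stand-in for the model's real tokenizer.

```python
from dataclasses import dataclass
from typing import List

SYSTEM_PROMPT_BUDGET = 200  # token budget stated in the article


@dataclass
class Persona:
    """Hypothetical container for the prompts described above."""
    voice_prompt: List[int]  # audio tokens (placeholder values here)
    text_prompt: str         # role, background, scenario context
    name: str = ""
    business_name: str = ""
    agent_name: str = ""
    business_info: str = ""

    def system_prompt(self) -> str:
        """Join the non-empty fields into one string (layout is an assumption)."""
        pairs = [
            ("name", self.name),
            ("business_name", self.business_name),
            ("agent_name", self.agent_name),
            ("business_info", self.business_info),
        ]
        return "; ".join(f"{key}={value}" for key, value in pairs if value)


def count_tokens(text: str) -> int:
    """Whitespace split, a crude stand-in for the real tokenizer."""
    return len(text.split())


def check_budget(persona: Persona) -> int:
    """Return the token count, or raise if the system prompt is too long."""
    used = count_tokens(persona.system_prompt())
    if used > SYSTEM_PROMPT_BUDGET:
        raise ValueError(f"system prompt uses {used} tokens, budget is {SYSTEM_PROMPT_BUDGET}")
    return used


persona = Persona(
    voice_prompt=[3, 1, 4],
    text_prompt="You work for a pizza shop.",
    business_name="Example Pizza",
    agent_name="Sam",
    business_info="Open 9 to 5.",
)
print(check_budget(persona))  # 7
```

Keeping the voice prompt and the text fields separate mirrors the split described above: one input shapes how the agent sounds, the other shapes what it says.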

Architecture, Helium backbone and audio path

The PersonaPlex model has 7B parameters and follows the Moshi network architecture. A Mimi speech encoder that combines ConvNet and Transformer layers converts waveform audio into discrete tokens. Temporal and depth Transformers process multiple channels that represent user audio, agent text, and agent audio. A Mimi speech decoder that also combines Transformer and ConvNet layers generates the output audio tokens. Audio uses a 24 kHz sample rate for both input and output.
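To get a feel for the audio path, it helps to work out how much waveform one codec frame covers. The sketch below uses the 24 kHz sample rate from the article; the 12.5 Hz frame rate and 8 codebooks are assumptions taken from public descriptions of the Mimi codec, not figures confirmed here for PersonaPlex.

```python
SAMPLE_RATE_HZ = 24_000  # stated in the article
FRAME_RATE_HZ = 12.5     # assumption: from public descriptions of Mimi
NUM_CODEBOOKS = 8        # assumption: from public descriptions of Mimi


def samples_per_frame(sample_rate_hz: float, frame_rate_hz: float) -> int:
    """Number of waveform samples summarized by one codec frame."""
    return round(sample_rate_hz / frame_rate_hz)


def frame_duration_ms(frame_rate_hz: float) -> float:
    """Duration of one codec frame in milliseconds."""
    return 1000.0 / frame_rate_hz


def audio_tokens_per_second(frame_rate_hz: float, num_codebooks: int) -> float:
    """Discrete audio tokens per second for a single audio stream."""
    return frame_rate_hz * num_codebooks


print(samples_per_frame(SAMPLE_RATE_HZ, FRAME_RATE_HZ))       # 1920
print(frame_duration_ms(FRAME_RATE_HZ))                       # 80.0
print(audio_tokens_per_second(FRAME_RATE_HZ, NUM_CODEBOOKS))  # 100.0
```

Under these assumptions each model step covers 80 ms of audio, which bounds how finely the agent can time a response or an interruption.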

PersonaPlex is built on Moshi weights and uses Helium as the underlying language model backbone. Helium provides semantic understanding and enables generalization outside the supervised conversational scenarios. This is visible in the ‘space emergency’ example, where a prompt about a reactor core failure on a Mars mission leads to coherent technical reasoning with appropriate emotional tone, even though this situation isn’t part of the training distribution.

Training data blend, real conversations and synthetic roles

Training has a single stage and uses a blend of real and synthetic dialogues.

Real conversations come from 7,303 calls, about 1,217 hours, in the Fisher English corpus. These conversations are back annotated with prompts using GPT-OSS-120B. The prompts are written at different granularity levels, from simple persona hints like ‘You enjoy having a good conversation’ to longer descriptions that include life history, location, and preferences. This corpus provides natural backchannels, disfluencies, pauses, and emotional patterns that are difficult to obtain from TTS alone.

Synthetic data covers assistant and customer service roles. The NVIDIA team reports 39,322 synthetic assistant conversations, about 410 hours, and 105,410 synthetic customer service conversations, about 1,840 hours. Qwen3-32B and GPT-OSS-120B generate the transcripts, and Chatterbox TTS converts them to speech. For assistant interactions, the text prompt is fixed as ‘You are a wise and friendly teacher. Answer questions or provide advice in a clear and engaging way.’ For customer service scenarios, prompts encode organization, role type, agent name, and structured business rules such as pricing, hours, and constraints.

This design lets PersonaPlex disentangle natural conversational behavior, which comes mainly from Fisher, from task adherence and role conditioning, which come mainly from synthetic scenarios.
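The reported counts and hours make the shape of the blend easy to check with a little arithmetic. The sketch below uses only the figures quoted above, which the article gives as approximate; the dictionary keys and function names are illustrative.

```python
# (conversations, approximate hours) as reported in the article
DATASETS = {
    "fisher_real": (7_303, 1_217),
    "synthetic_assistant": (39_322, 410),
    "synthetic_customer_service": (105_410, 1_840),
}


def total_hours(datasets: dict) -> int:
    """Sum of reported hours across all sources."""
    return sum(hours for _, hours in datasets.values())


def share_of_hours(datasets: dict, name: str) -> float:
    """Fraction of total training hours contributed by one source."""
    return datasets[name][1] / total_hours(datasets)


def mean_minutes(datasets: dict, name: str) -> float:
    """Average conversation length in minutes for one source."""
    conversations, hours = datasets[name]
    return hours * 60 / conversations


print(total_hours(DATASETS))  # 3467
for name in DATASETS:
    share = share_of_hours(DATASETS, name)
    minutes = mean_minutes(DATASETS, name)
    print(f"{name}: {share:.1%} of hours, {minutes:.1f} min per conversation")
```

The averages come out at roughly ten minutes per Fisher call against about a minute or less per synthetic dialogue, so the real data supplies long, free-flowing exchanges while the synthetic data supplies many short, tightly scoped ones.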

Evaluation on FullDuplexBench and ServiceDuplexBench

PersonaPlex is evaluated on FullDuplexBench, a benchmark for full duplex spoken dialogue models, and on a new extension called ServiceDuplexBench for customer service scenarios.

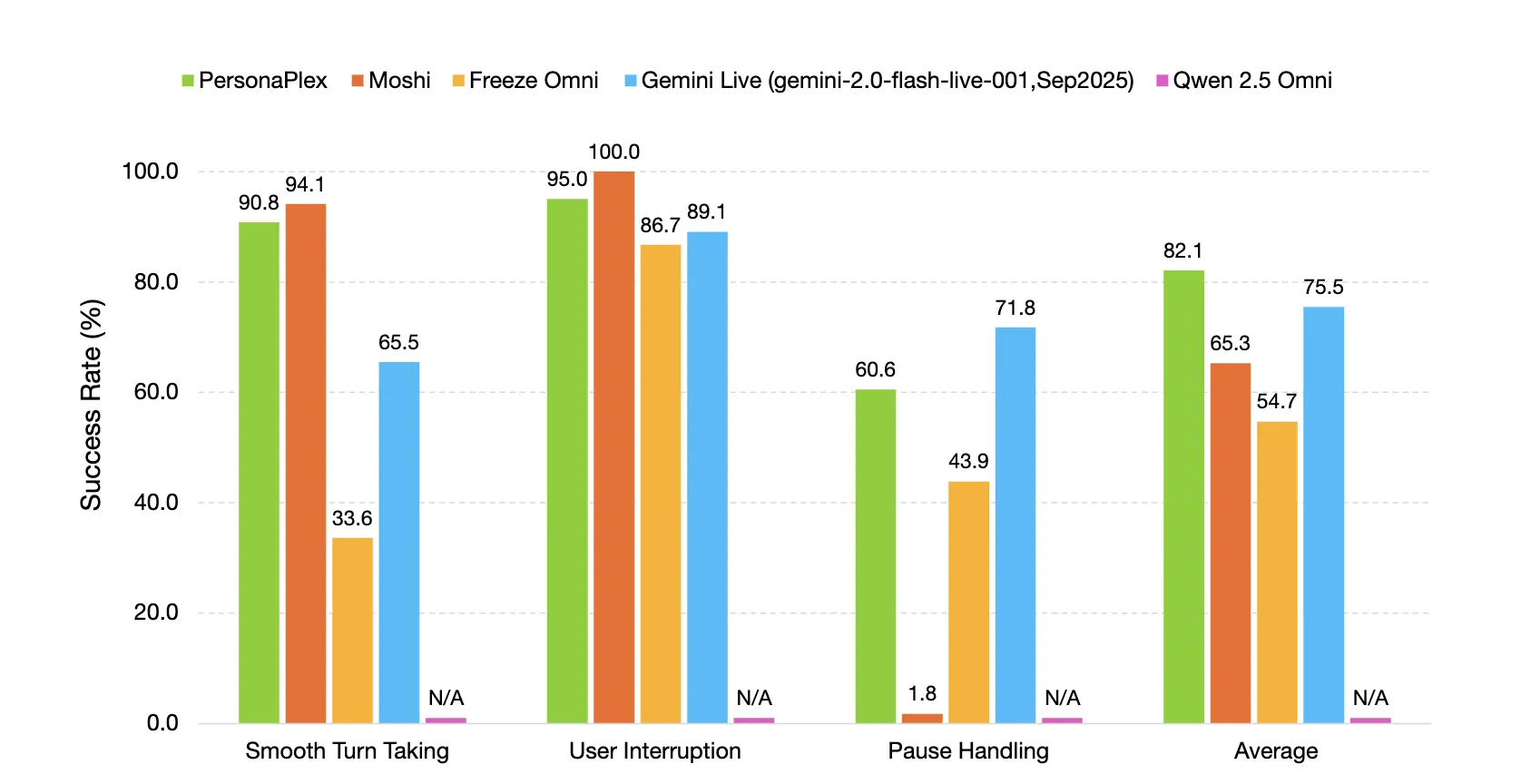

FullDuplexBench measures conversational dynamics with Takeover Rate (TOR) and latency metrics for tasks such as smooth turn taking, user interruption handling, pause handling, and backchanneling. GPT-4o serves as an LLM judge for response quality in question answering categories. PersonaPlex reaches a smooth turn taking TOR of 0.908 with latency 0.170 seconds and a user interruption TOR of 0.950 with latency 0.240 seconds. Speaker similarity between voice prompts and outputs on the user interruption subset uses WavLM TDNN embeddings and reaches 0.650.
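The metrics above can be sketched in a few lines. This is an illustration under assumptions, not the benchmark's own code: it treats TOR as the fraction of trials in which the agent takes the turn, averages latency over those trials only, and scores speaker similarity as the cosine similarity of two embedding vectors. The trial values are made up.

```python
import math
from typing import Optional, Sequence


def takeover_rate(latencies: Sequence[Optional[float]]) -> float:
    """Fraction of trials where the agent took the turn (None = no takeover)."""
    if not latencies:
        raise ValueError("need at least one trial")
    return sum(lat is not None for lat in latencies) / len(latencies)


def mean_latency(latencies: Sequence[Optional[float]]) -> float:
    """Average response latency in seconds over trials with a takeover."""
    taken = [lat for lat in latencies if lat is not None]
    if not taken:
        raise ValueError("no takeovers to average")
    return sum(taken) / len(taken)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up trials: latency in seconds, or None when the agent never responded.
trials = [0.25, None, 0.75, 0.5]
print(takeover_rate(trials))  # 0.75
print(mean_latency(trials))   # 0.5
print(cosine_similarity([1.0, 0.0], [1.0, 0.0]))  # 1.0
```

Read this way, a TOR of 0.950 on user interruption would mean the agent responded to the interrupting user in 95% of trials, with the 0.240 second figure describing how quickly it did so.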

PersonaPlex outperforms many other open source and closed systems on conversational dynamics, response latency, interruption latency, and task adherence in both assistant and customer service roles.

Key Takeaways

- PersonaPlex-7B-v1 is a 7B parameter full duplex speech to speech conversational model from NVIDIA, built on the Moshi architecture with a Helium language model backbone, with code under MIT and weights under the NVIDIA Open Model License.

- The model uses a dual stream Transformer with a Mimi speech encoder and decoder at 24 kHz. It encodes continuous audio into discrete tokens and generates text and audio tokens at the same time, which enables barge in, overlaps, fast turn taking, and natural backchannels.

- Persona control is handled by hybrid prompting: a voice prompt made of audio tokens sets timbre and style, while a text prompt and a system prompt of up to 200 tokens define role, business context, and constraints, with ready made voice embeddings such as the NATF and NATM families.

- Training uses a blend of 7,303 Fisher conversations, about 1,217 hours, annotated with GPT-OSS-120B, plus synthetic assistant and customer service dialogs, about 410 hours and 1,840 hours, generated with Qwen3-32B and GPT-OSS-120B and rendered with Chatterbox TTS, which separates conversational naturalness from task adherence.

- On FullDuplexBench and ServiceDuplexBench, PersonaPlex reaches a smooth turn taking takeover rate of 0.908 and a user interruption takeover rate of 0.950 with sub second latency and improved task adherence.

The post NVIDIA Releases PersonaPlex-7B-v1: A Real-Time Speech-to-Speech Model Designed for Natural and Full-Duplex Conversations appeared first on MarkTechPost.