Prompting massive language fashions (LLMs) has change into an environment friendly learning paradigm for adapting LLMs to a brand new activity by conditioning on human-designed directions. The outstanding in-context learning (ICL) skill of LLMs additionally results in environment friendly few-shot learners that may generalize from few-shot input-label pairs. However, the predictions of LLMs are extremely delicate and even biased to the selection of templates, label areas (corresponding to sure/no, true/false, right/incorrect), and demonstration examples, leading to surprising efficiency degradation and obstacles for pursuing strong LLM functions. To handle this drawback, calibration strategies have been developed to mitigate the results of those biases whereas recovering LLM efficiency. Though a number of calibration options have been offered (e.g., contextual calibration and domain-context calibration), the sphere presently lacks a unified evaluation that systematically distinguishes and explains the distinctive traits, deserves, and downsides of every method.

With this in thoughts, in “Batch Calibration: Rethinking Calibration for In-Context Learning and Prompt Engineering”, we conduct a scientific evaluation of the present calibration strategies, the place we each present a unified view and reveal the failure circumstances. Inspired by these analyses, we suggest Batch Calibration (BC), a easy but intuitive methodology that mitigates the bias from a batch of inputs, unifies numerous prior approaches, and successfully addresses the restrictions in earlier strategies. BC is zero-shot, self-adaptive (i.e., inference-only), and incurs negligible further prices. We validate the effectiveness of BC with PaLM 2 and CLIP fashions and exhibit state-of-the-art efficiency over earlier calibration baselines throughout greater than 10 pure language understanding and picture classification duties.

Motivation

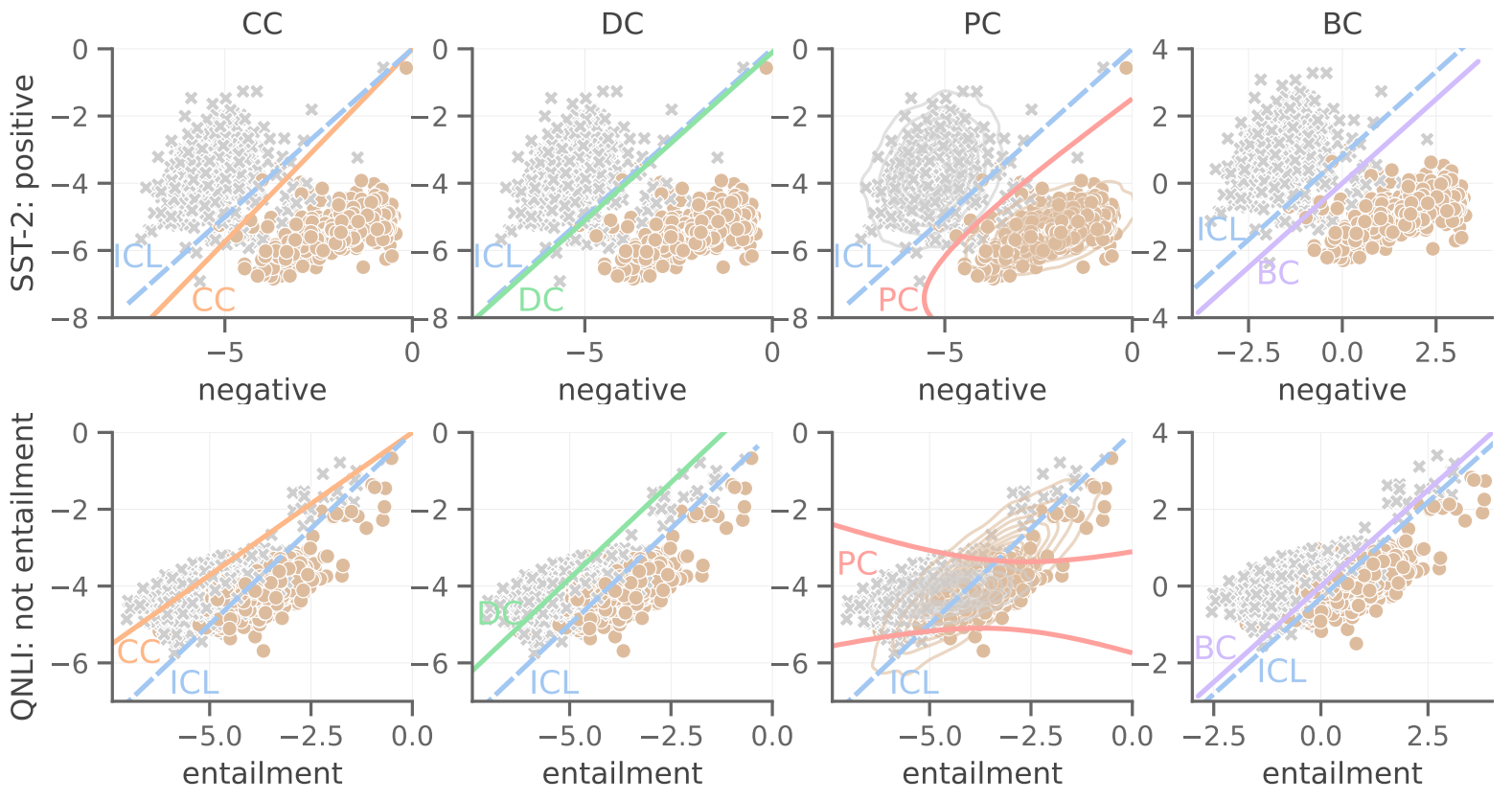

In pursuit of sensible tips for ICL calibration, we began with understanding the restrictions of present strategies. We discover that the calibration drawback will be framed as an unsupervised choice boundary learning drawback. We observe that uncalibrated ICL will be biased in the direction of predicting a category, which we explicitly check with as contextual bias, the a priori propensity of LLMs to foretell sure courses over others unfairly given the context. For instance, the prediction of LLMs will be biased in the direction of predicting essentially the most frequent label, or the label in the direction of the tip of the demonstration. We discover that, whereas theoretically extra versatile, non-linear boundaries (prototypical calibration) are usually prone to overfitting and might undergo from instability for difficult multi-class duties. Conversely, we discover that linear choice boundaries will be extra strong and generalizable throughout duties. In addition, we discover that counting on further content-free inputs (e.g., “N/A” or random in-domain tokens) because the grounds for estimating the contextual bias is not all the time optimum and might even introduce further bias, relying on the duty kind.

Batch calibration

Inspired by the earlier discussions, we designed BC to be a zero-shot, inference-only and generalizable calibration method with negligible computation value. We argue that essentially the most essential part for calibration is to precisely estimate the contextual bias. We, due to this fact, choose for a linear choice boundary for its robustness, and as an alternative of counting on content-free inputs, we suggest to estimate the contextual bias for every class from a batch in a content-based method by marginalizing the output rating over all samples inside the batch, which is equal to measuring the imply rating for every class (visualized beneath).

We then acquire the calibrated chance by dividing the output chance over the contextual prior, which is equal to aligning the log-probability (LLM scores) distribution to the estimated imply of every class. It is noteworthy that as a result of it requires no further inputs to estimate the bias, this BC process is zero-shot, solely includes unlabeled take a look at samples, and incurs negligible computation prices. We might both compute the contextual bias as soon as all take a look at samples are seen, or alternatively, in an on-the-fly method that dynamically processes the outputs. To achieve this, we might use a operating estimate of the contextual bias for BC, thereby permitting BC’s calibration time period to be estimated from a small variety of mini-batches that’s subsequently stabilized when extra mini-batches arrive.

|

| Illustration of Batch Calibration (BC). Batches of demonstrations with in-context examples and take a look at samples are handed into the LLM. Due to sources of implicit bias within the context, the rating distribution from the LLM turns into biased. BC is a modular and adaptable layer possibility appended to the output of the LLM that generates calibrated scores (visualized for illustration solely). |

Experiment design

For pure language duties, we conduct experiments on 13 extra various and difficult classification duties, together with the usual GLUE and SuperGLUE datasets. This is in distinction to earlier works that solely report on comparatively easy single-sentence classification duties.. For picture classification duties, we embody SVHN, EuroSAT, and CLEVR. We conduct experiments primarily on the state-of-the-art PaLM 2 with dimension variants PaLM 2-S, PaLM 2-M, and PaLM 2-L. For VLMs, we report the outcomes on CLIP ViT-B/16.

Results

Notably, BC persistently outperforms ICL, yielding a major efficiency enhancement of 8% and 6% on small and massive variants of PaLM 2, respectively. This exhibits that the BC implementation efficiently mitigates the contextual bias from the in-context examples and unleashes the total potential of LLM in environment friendly learning and fast adaptation to new duties. In addition, BC improves over the state-of-the-art prototypical calibration (PC) baseline by 6% on PaLM 2-S, and surpasses the aggressive contextual calibration (CC) baseline by one other 3% on common on PaLM 2-L. Specifically, BC is a generalizable and cheaper method throughout all evaluated duties, delivering secure efficiency enchancment, whereas earlier baselines exhibit various levels of efficiency throughout duties.

We analyze the efficiency of BC by various the variety of ICL pictures from 0 to 4, and BC once more outperforms all baseline strategies. We additionally observe an general development for improved efficiency when extra pictures can be found, the place BC demonstrates the most effective stability.

We additional visualize the choice boundaries of uncalibrated ICL after making use of current calibration strategies and the proposed BC. We present success and failure circumstances for every baseline methodology, whereas BC is persistently efficient.

|

| Visualization of the choice boundaries of uncalibrated ICL, and after making use of current calibration strategies and the proposed BC in consultant binary classification duties of SST-2 (prime row) and QNLI (backside row) on 1-shot PaLM 2-S. Each axis signifies the LLM rating on the outlined label. |

Robustness and ablation research

We analyze the robustness of BC with respect to frequent prompt engineering design selections that had been beforehand proven to considerably have an effect on LLM efficiency: selections and orders of in-context examples, the prompt template for ICL, and the label area. First, we discover that BC is extra strong to ICL selections and can principally obtain the identical efficiency with completely different ICL examples. Additionally, given a single set of ICL pictures, altering the order between every ICL instance has minimal affect on the BC efficiency. Furthermore, we analyze the robustness of BC underneath 10 designs of prompt templates, the place BC exhibits constant enchancment over the ICL baseline. Therefore, although BC improves efficiency, a well-designed template can additional improve the efficiency of BC. Lastly, we study the robustness of BC to variations in label area designs (see appendix in our paper). Remarkably, even when using unconventional selections corresponding to emoji pairs as labels, resulting in dramatic oscillations of ICL efficiency, BC largely recovers efficiency. This statement demonstrates that BC will increase the robustness of LLM predictions underneath frequent prompt design selections and makes prompt engineering simpler.

|

| Batch Calibration makes prompt engineering simpler whereas being data-efficient. Data are visualized as a normal field plot, which illustrates values for the median, first and third quartiles, and minimal and most. |

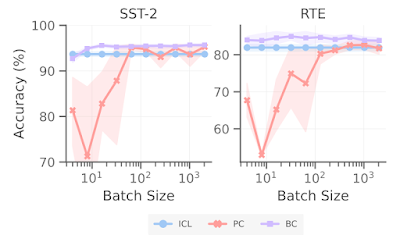

Moreover, we research the affect of batch dimension on the efficiency of BC. In distinction to PC, which additionally leverages an unlabeled estimate set, BC is remarkably extra pattern environment friendly, attaining a powerful efficiency with solely round 10 unlabeled samples, whereas PC requires greater than 500 unlabeled samples earlier than its efficiency stabilizes.

|

| Batch Calibration makes prompt engineering simpler whereas being insensitive to the batch dimension. |

Conclusion

We first revisit earlier calibration strategies whereas addressing two essential analysis questions from an interpretation of choice boundaries, revealing their failure circumstances and deficiencies. We then suggest Batch Calibration, a zero-shot and inference-only calibration method. While methodologically easy and straightforward to implement with negligible computation value, we present that BC scales from a language-only setup to the vision-language context, attaining state-of-the-art efficiency in each modalities. BC considerably improves the robustness of LLMs with respect to prompt designs, and we count on straightforward prompt engineering with BC.

Acknowledgements

This work was performed by Han Zhou, Xingchen Wan, Lev Proleev, Diana Mincu, Jilin Chen, Katherine Heller, Subhrajit Roy. We want to thank Mohammad Havaei and different colleagues at Google Research for their dialogue and suggestions.