A key feature of human intelligence is that humans can learn to perform new tasks by reasoning using only a few examples. Scaling up language models has unlocked a range of new applications and paradigms in machine learning, including the ability to perform challenging reasoning tasks via in-context learning. Language models, however, are still sensitive to the way that prompts are given, indicating that they are not reasoning in a robust manner. For instance, language models often require heavy prompt engineering or phrasing tasks as instructions, and they exhibit unexpected behaviors such as performance on tasks being unaffected even when shown incorrect labels.

In “Symbol tuning improves in-context learning in language models”, we propose a simple fine-tuning procedure that we call symbol tuning, which can improve in-context learning by emphasizing input–label mappings. We experiment with symbol tuning across Flan-PaLM models and observe benefits across various settings.

- Symbol tuning boosts performance on unseen in-context learning tasks and is much more robust to underspecified prompts, such as those without instructions or without natural language labels.

- Symbol-tuned models are much stronger at algorithmic reasoning tasks.

- Finally, symbol-tuned models show large improvements in following flipped labels presented in-context, meaning that they are more capable of using in-context information to override prior knowledge.

|

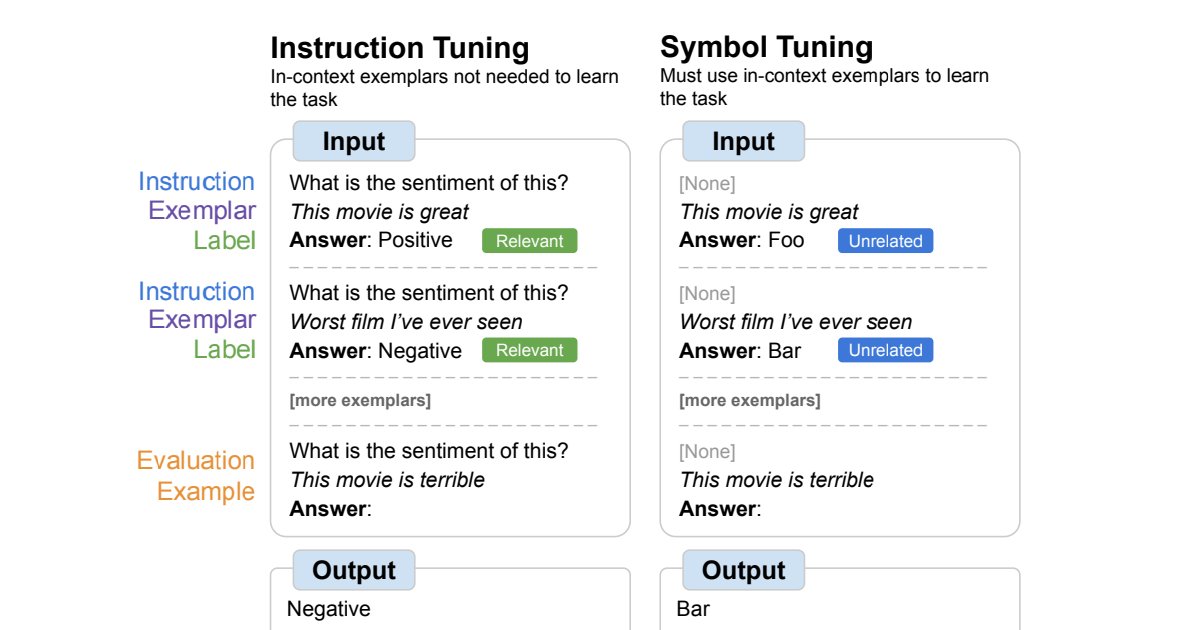

| An overview of symbol tuning, where models are fine-tuned on tasks where natural language labels are replaced with arbitrary symbols. Symbol tuning relies on the intuition that when instructions and relevant labels are not available, models must use in-context examples to learn the task. |

Motivation

Instruction tuning is a common fine-tuning method that has been shown to improve performance and allow models to better follow in-context examples. One shortcoming, however, is that models are not forced to learn to use the examples because the task is redundantly defined in the evaluation example via instructions and natural language labels. For example, on the left in the figure above, although the examples can help the model understand the task (sentiment analysis), they are not strictly necessary since the model could ignore the examples and just read the instruction that indicates what the task is.

In symbol tuning, the model is fine-tuned on examples where the instructions are removed and natural language labels are replaced with semantically unrelated labels (e.g., “Foo,” “Bar,” etc.). In this setup, the task is unclear without looking at the in-context examples. For example, on the right in the figure above, multiple in-context examples would be needed to figure out the task. Because symbol tuning teaches the model to reason over the in-context examples, symbol-tuned models should have better performance on tasks that require reasoning between in-context examples and their labels.

|

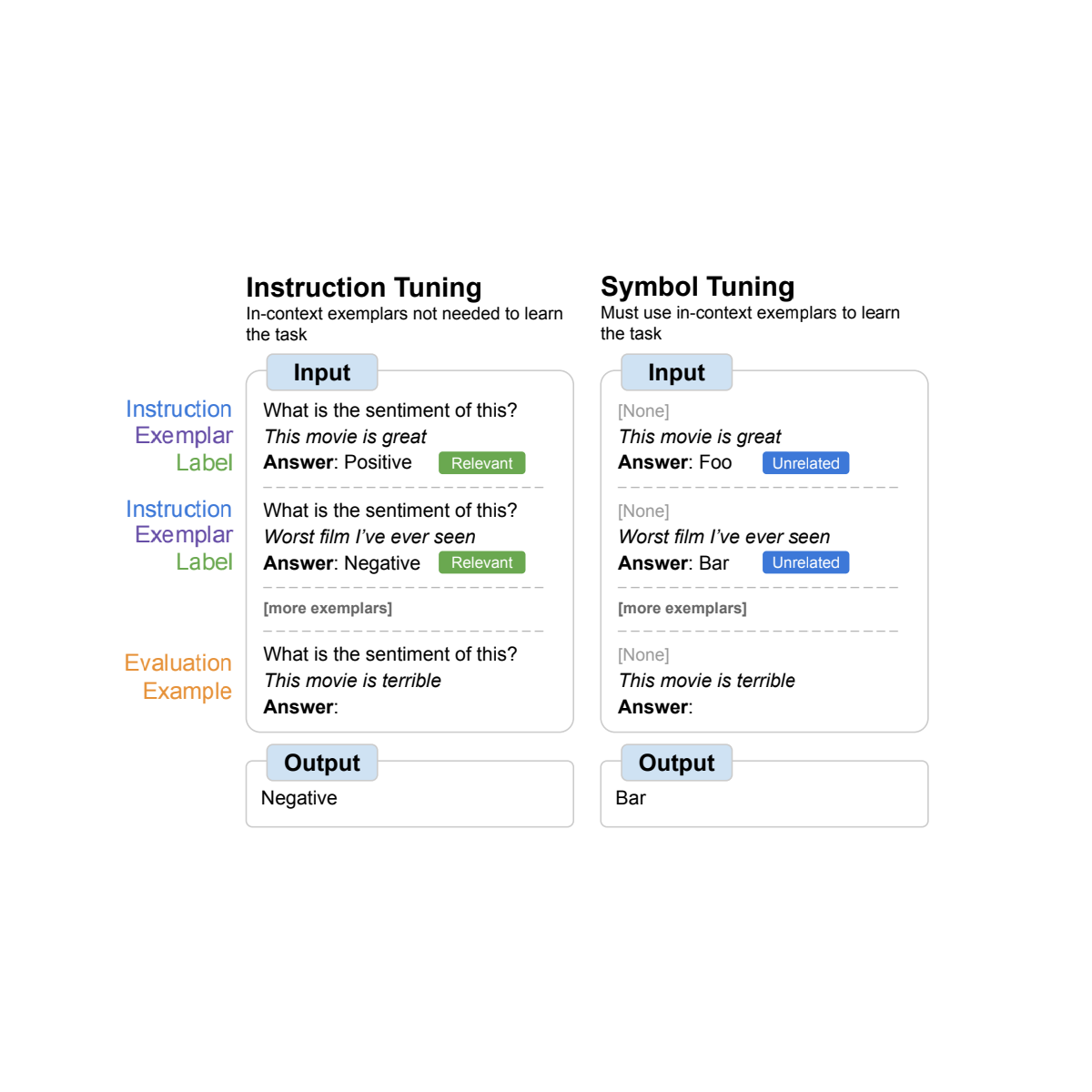

| Datasets and task types used for symbol tuning. |

Symbol-tuning procedure

We selected 22 publicly available natural language processing (NLP) datasets that we use for our symbol-tuning procedure. These tasks have been widely used in the past, and we only chose classification-type tasks since our method requires discrete labels. We then remap labels to a random label from a set of ∼30K arbitrary labels selected from one of three categories: integers, character combinations, and words.

For our experiments, we symbol tune Flan-PaLM, the instruction-tuned variants of PaLM. We use three different sizes of Flan-PaLM models: Flan-PaLM-8B, Flan-PaLM-62B, and Flan-PaLM-540B. We also tested Flan-cont-PaLM-62B (Flan-PaLM-62B at 1.3T tokens instead of 780B tokens), which we abbreviate as 62B-c.

|

| We use a set of ∼300K arbitrary symbols from three categories (integers, character combinations, and words). ∼30K symbols are used during tuning and the rest are held out for evaluation. |
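The remapping step described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the code used in the work: the helper names (`build_symbol_pool`, `split_pool`, `remap_labels`), the pool sizes, the symbol lengths, and the tiny word list are all hypothetical, and the pool here is far smaller than the ∼300K symbols mentioned in the caption.

```python
import random
import string


def build_symbol_pool(rng, n_integers=100, n_strings=100,
                      words=("foo", "bar", "baz", "qux")):
    """Build a toy pool from three categories: integers, character combinations, words."""
    integers = [str(n) for n in rng.sample(range(100_000), n_integers)]
    strings = set()
    while len(strings) < n_strings:
        strings.add("".join(rng.choices(string.ascii_lowercase, k=5)))
    # dict.fromkeys removes any accidental duplicates while keeping order.
    return list(dict.fromkeys(integers + sorted(strings) + list(words)))


def split_pool(pool, tuning_fraction, rng):
    """Shuffle the pool and split it into tuning symbols and held-out symbols."""
    shuffled = pool[:]
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * tuning_fraction)
    return shuffled[:cut], shuffled[cut:]


def remap_labels(examples, symbols, rng):
    """Replace each distinct natural language label with a distinct random symbol."""
    labels = sorted({label for _, label in examples})
    mapping = dict(zip(labels, rng.sample(symbols, len(labels))))
    return [(text, mapping[label]) for text, label in examples], mapping


rng = random.Random(0)
pool = build_symbol_pool(rng)
# Roughly mirrors the 30K-of-300K ratio: about 10% of symbols are used for tuning.
tuning_symbols, held_out_symbols = split_pool(pool, 0.1, rng)

examples = [("great movie", "positive"), ("dull plot", "negative")]
remapped, mapping = remap_labels(examples, tuning_symbols, rng)
print(mapping)
```

Drawing evaluation symbols only from `held_out_symbols` keeps the symbols seen at evaluation time disjoint from those seen during tuning.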

Experimental setup

We want to evaluate a model’s ability to perform unseen tasks, so we cannot evaluate on tasks used in symbol tuning (22 datasets) or used during instruction tuning (1.8K tasks). Hence, we choose 11 NLP datasets that were not used during fine-tuning.

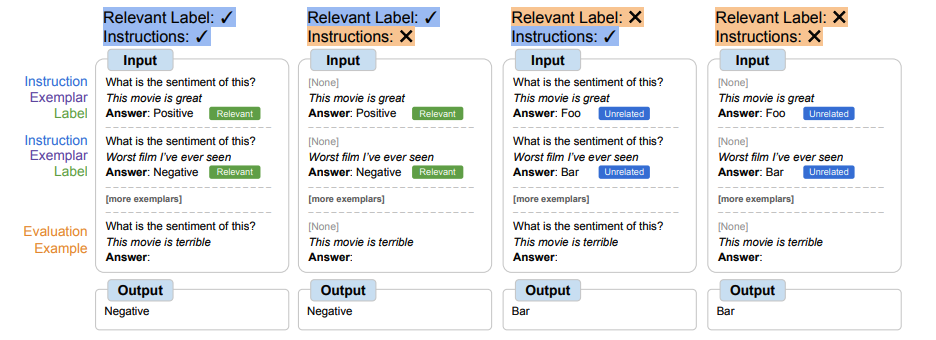

In-context learning

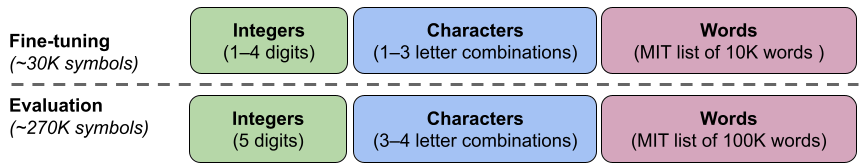

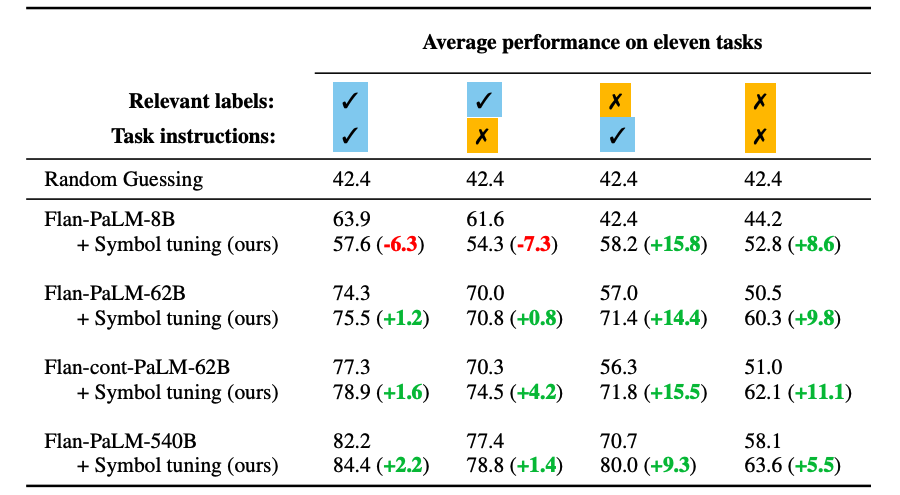

In the symbol-tuning procedure, models must learn to reason with in-context examples in order to successfully perform tasks because prompts are modified to ensure that tasks cannot simply be learned from relevant labels or instructions. Symbol-tuned models should perform better in settings where tasks are unclear and require reasoning between in-context examples and their labels. To explore these settings, we define four in-context learning settings that vary the amount of reasoning required between inputs and labels in order to learn the task (based on the availability of instructions/relevant labels).

|

| Depending on the availability of instructions and relevant natural language labels, models may need to do varying amounts of reasoning with in-context examples. When these features are not available, models must reason with the given in-context examples to successfully perform the task. |
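To make the four settings concrete, the sketch below builds one prompt per combination of "instruction present or absent" and "relevant labels present or absent". The prompt template, the instruction wording, and the `Foo`/`Bar` symbols are assumptions chosen for illustration; the actual evaluation prompts may be formatted differently.

```python
from itertools import product

INSTRUCTION = "What is the sentiment of this sentence?"  # hypothetical wording
SYMBOLS = {"positive": "Foo", "negative": "Bar"}          # hypothetical symbols


def build_prompt(examples, query, use_instruction, use_relevant_labels):
    """Format in-context examples, optionally with an instruction and natural labels."""
    blocks = [INSTRUCTION] if use_instruction else []
    for text, label in examples:
        shown = label if use_relevant_labels else SYMBOLS[label]
        blocks.append(f"Input: {text}\nOutput: {shown}")
    # The evaluation example is left unanswered for the model to complete.
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


examples = [("A delightful film.", "positive"), ("A tedious mess.", "negative")]

# Keys are (use_instruction, use_relevant_labels); one prompt per setting.
settings = {
    (ins, rel): build_prompt(examples, "I loved every minute.", ins, rel)
    for ins, rel in product([True, False], repeat=2)
}

print(settings[(False, False)])
```

In the `(False, False)` setting, nothing in the prompt names the task, so the mapping from inputs to `Foo` and `Bar` can only be inferred from the examples themselves.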

Symbol tuning improves performance across all settings for models 62B and larger, with small improvements in settings with relevant natural language labels (+0.8% to +4.2%) and substantial improvements in settings without relevant natural language labels (+5.5% to +15.5%). Strikingly, when relevant labels are unavailable, symbol-tuned Flan-PaLM-8B outperforms Flan-PaLM-62B, and symbol-tuned Flan-PaLM-62B outperforms Flan-PaLM-540B. This performance difference suggests that symbol tuning can allow much smaller models to perform as well as large models on these tasks (effectively saving ∼10x inference compute).

|

| Large-enough symbol-tuned models are better at in-context learning than baselines, especially in settings where relevant labels are not available. Performance is shown as average model accuracy (%) across eleven tasks. |

Algorithmic reasoning

We also experiment on algorithmic reasoning tasks from BIG-Bench. There are two main groups of tasks: 1) list functions: identify a transformation function (e.g., remove the last element in a list) between input and output lists containing non-negative integers; and 2) simple Turing concepts: reason with binary strings to learn the concept that maps an input to an output (e.g., swapping 0s and 1s in a string).
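The two example transformations mentioned above are simple to state in code. The sketch below implements them and formats a few demonstrations as a prompt; the `input -> output` layout is a hypothetical format for illustration and is not taken from BIG-Bench.

```python
def remove_last(xs):
    """List-function example: drop the final element of a list."""
    return xs[:-1]


def swap_bits(bits):
    """Simple Turing concept example: swap 0s and 1s in a binary string."""
    return bits.translate(str.maketrans("01", "10"))


def few_shot_prompt(fn, demo_inputs, query):
    """Show input/output pairs for a hidden function, then an unanswered query."""
    lines = [f"{x} -> {fn(x)}" for x in demo_inputs]
    lines.append(f"{query} ->")
    return "\n".join(lines)


list_prompt = few_shot_prompt(remove_last, [[3, 1, 4], [7, 0], [5, 5, 2, 9]], [8, 6, 1])
bits_prompt = few_shot_prompt(swap_bits, ["0110", "111", "1000"], "0101")

print(list_prompt)
print(bits_prompt)
```

The model never sees the function itself, only the pairs, so solving the query requires inferring the transformation from the in-context examples.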

On the list function and simple Turing concept tasks, symbol tuning results in an average performance improvement of 18.2% and 15.3%, respectively. Additionally, Flan-cont-PaLM-62B with symbol tuning outperforms Flan-PaLM-540B on the list function tasks on average, which is equivalent to a ∼10x reduction in inference compute. These improvements suggest that symbol tuning strengthens the model’s ability to learn in-context for unseen task types, as symbol tuning did not include any algorithmic data.

|

| Symbol-tuned models achieve higher performance on list function tasks and simple Turing concept tasks. (A–E): categories of list function tasks. (F): simple Turing concepts task. |

Flipped labels

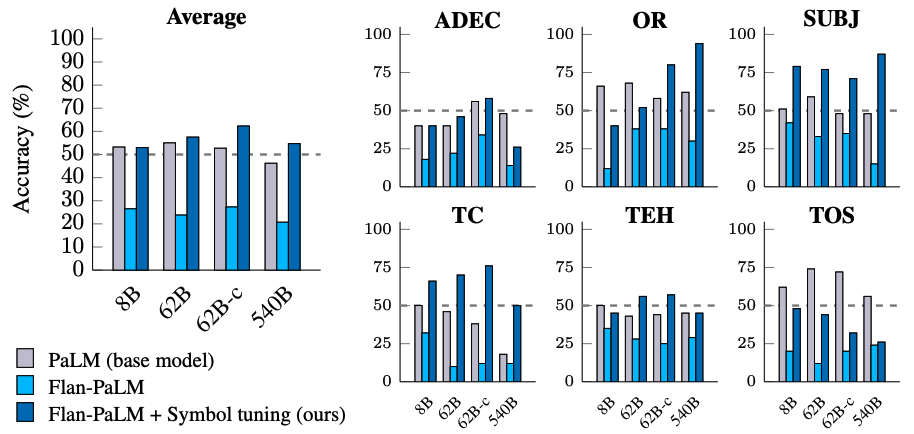

In the flipped-label experiment, labels of in-context and evaluation examples are flipped, meaning that prior knowledge and input–label mappings disagree (e.g., sentences containing positive sentiment labeled as “negative sentiment”), thereby allowing us to study whether models can override prior knowledge. Previous work has shown that while pre-trained models (without instruction tuning) can, to some extent, follow flipped labels presented in-context, instruction tuning degraded this ability.
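A toy version of this setup is sketched below, assuming a binary task. The helper names and the two hard-coded prediction lists are hypothetical stand-ins for real model outputs; the point is only that accuracy is measured against the flipped targets.

```python
def flip_labels(examples, label_a, label_b):
    """Swap the two labels of a binary task on every example."""
    swap = {label_a: label_b, label_b: label_a}
    return [(text, swap[label]) for text, label in examples]


def accuracy(predictions, targets):
    """Fraction of predictions that match the targets."""
    return sum(p == t for p, t in zip(predictions, targets)) / len(targets)


original = [("A delightful film.", "positive"), ("A tedious mess.", "negative")]
flipped = flip_labels(original, "positive", "negative")
targets = [label for _, label in flipped]

# Stand-in for a model that answers from prior knowledge and ignores the flip.
prior_predictions = [label for _, label in original]
# Stand-in for a model that follows the flipped in-context mapping.
follower_predictions = targets[:]

print(accuracy(prior_predictions, targets))     # 0.0
print(accuracy(follower_predictions, targets))  # 1.0
```

Under this scoring, a model that leans on prior knowledge is penalized, while one that follows the in-context mapping is rewarded.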

We see that there is a similar trend across all model sizes: symbol-tuned models are much more capable of following flipped labels than instruction-tuned models. We found that after symbol tuning, Flan-PaLM-8B sees an average improvement across all datasets of 26.5%, Flan-PaLM-62B sees an improvement of 33.7%, and Flan-PaLM-540B sees an improvement of 34.0%. Additionally, symbol-tuned models achieve average performance similar to or better than that of pre-training–only models.

|

| Symbol-tuned models are much better at following flipped labels presented in-context than instruction-tuned models are. |

Conclusion

We presented symbol tuning, a new method of tuning models on tasks where natural language labels are remapped to arbitrary symbols. Symbol tuning is based on the intuition that when models cannot use instructions or relevant labels to determine a presented task, they must do so by instead learning from in-context examples. We tuned four language models using our symbol-tuning procedure, utilizing a tuning mixture of 22 datasets and approximately 30K arbitrary symbols as labels.

We first showed that symbol tuning improves performance on unseen in-context learning tasks, especially when prompts do not contain instructions or relevant labels. We also found that symbol-tuned models were much better at algorithmic reasoning tasks, despite the lack of numerical or algorithmic data in the symbol-tuning procedure. Finally, in an in-context learning setting where inputs have flipped labels, symbol tuning (for some datasets) restores the ability to follow flipped labels that was lost during instruction tuning.

Future work

Through symbol tuning, we aim to increase the degree to which models can examine and learn from input–label mappings during in-context learning. We hope that our results encourage further work towards improving language models’ ability to reason over symbols presented in-context.

Acknowledgements

The authors of this post are now part of Google DeepMind. This work was conducted by Jerry Wei, Le Hou, Andrew Lampinen, Xiangning Chen, Da Huang, Yi Tay, Xinyun Chen, Yifeng Lu, Denny Zhou, Tengyu Ma, and Quoc V. Le. We would like to thank our colleagues at Google Research and Google DeepMind for their advice and helpful discussions.