Tencent Hunyuan’s 3D Digital Human team has released HY-Motion 1.0, an open weight text-to-3D human motion generation family that scales Diffusion Transformer based Flow Matching to 1B parameters in the motion domain. The models turn natural language prompts plus an expected duration into 3D human motion clips on a unified SMPL-H skeleton and are available on GitHub and Hugging Face with code, checkpoints and a Gradio interface for local use.

What HY-Motion 1.0 provides for developers

HY-Motion 1.0 is a series of text-to-3D human motion generation models built on a Diffusion Transformer (DiT) trained with a Flow Matching objective. The series has 2 variants: HY-Motion-1.0, with 1.0B parameters, as the standard model, and HY-Motion-1.0-Lite, with 0.46B parameters, as a lightweight option.

Both models generate skeleton based 3D character animations from simple text prompts. The output is a motion sequence on an SMPL-H skeleton that can be integrated into 3D animation or game pipelines, for example for digital humans, cinematics and interactive characters. The release includes inference scripts, a batch oriented CLI and a Gradio web app, and supports macOS, Windows and Linux.

Data engine and taxonomy

The training data comes from 3 sources: in-the-wild human motion videos, motion capture data and 3D animation assets for game production. The research team starts from 12M high quality video clips from HunyuanVideo, runs shot boundary detection to split scenes and a human detector to keep clips with people, then applies the GVHMR algorithm to reconstruct SMPL-X motion tracks. Motion capture sessions and 3D animation libraries contribute about 500 hours of additional motion sequences.

All data is retargeted onto a unified SMPL-H skeleton through mesh fitting and retargeting tools. A multi-stage filter removes duplicate clips, abnormal poses, outliers in joint velocity, anomalous displacements, long static segments and artifacts such as foot sliding. Motions are then canonicalized, resampled to 30 fps and segmented into clips shorter than 12 seconds with a fixed world frame, Y axis up and the character facing the positive Z axis. The final corpus contains over 3,000 hours of motion, of which 400 hours are high quality 3D motion with verified captions.
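The resampling and segmentation step can be illustrated with a minimal sketch. The function names, the nearest-frame resampling strategy and the fixed-stride splitting are assumptions made for illustration; the actual pipeline may interpolate poses and choose clip boundaries differently.

```python
FPS = 30          # target frame rate stated in the article
MAX_SECONDS = 12  # clips are kept shorter than this


def resample_indices(num_frames, src_fps, dst_fps=FPS):
    """Return source-frame indices that resample a clip to dst_fps.

    Assumption: nearest-frame picking; a real pipeline would likely
    interpolate rotations and positions instead.
    """
    duration = num_frames / src_fps
    num_out = int(duration * dst_fps)
    return [min(num_frames - 1, round(i * src_fps / dst_fps))
            for i in range(num_out)]


def segment(num_frames, fps=FPS, max_seconds=MAX_SECONDS):
    """Split [0, num_frames) into consecutive (start, end) frame ranges,
    each strictly shorter than max_seconds."""
    max_len = fps * max_seconds - 1  # 359 frames at 30 fps, i.e. under 12 s
    return [(start, min(start + max_len, num_frames))
            for start in range(0, num_frames, max_len)]
```

For example, `segment(1000)` splits a 1,000 frame sequence into three ranges, each covering fewer than 360 frames.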

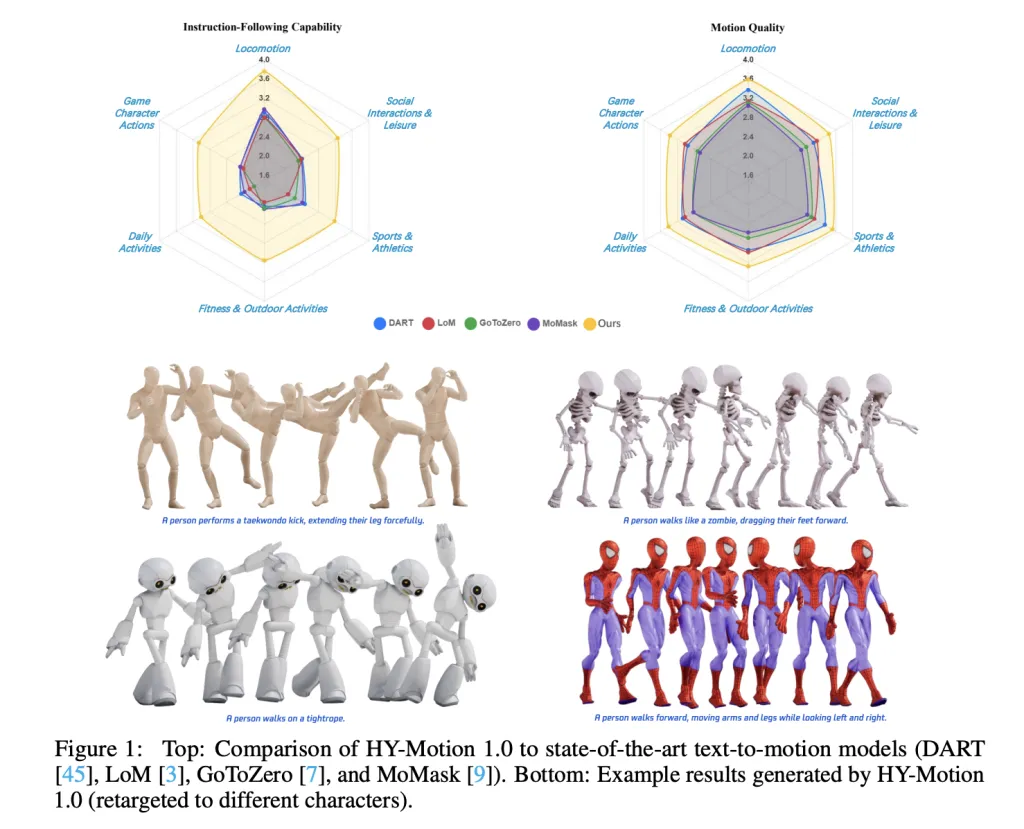

On top of this, the research team defines a 3 level taxonomy. At the top level there are 6 classes: Locomotion, Sports and Athletics, Fitness and Outdoor Activities, Daily Activities, Social Interactions and Leisure, and Game Character Actions. These expand into more than 200 fine grained motion categories at the leaves, which cover both simple atomic actions and concurrent or sequential motion combinations.

Motion representation and HY-Motion DiT

HY-Motion 1.0 uses the SMPL-H skeleton with 22 body joints and no hands. Each frame is a 201 dimensional vector that concatenates global root translation in 3D space, global body orientation in a continuous 6D rotation representation, 21 local joint rotations in 6D form and 22 local joint positions in 3D coordinates. Velocities and foot contact labels are removed because they slowed training and did not help final quality. This representation is compatible with animation workflows and close to the DART model representation.
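The 201 dimensions follow directly from the components listed above: 3 + 6 + 21 × 6 + 22 × 3 = 201. The sketch below checks that arithmetic and slices a frame into named parts. The field names and the concatenation order are assumptions taken from the order in which the article lists the components, not from the released code.

```python
NUM_JOINTS = 22  # SMPL-H body joints, hands excluded

# (name, width) pairs; names and ordering are illustrative assumptions.
LAYOUT = [
    ("root_translation", 3),                            # global 3D position
    ("global_orientation_6d", 6),                       # continuous 6D rotation
    ("local_joint_rotations_6d", (NUM_JOINTS - 1) * 6), # 21 joints x 6
    ("local_joint_positions", NUM_JOINTS * 3),          # 22 joints x 3
]


def build_slices(layout):
    """Map each field name to its slice in the flat frame vector."""
    slices, offset = {}, 0
    for name, width in layout:
        slices[name] = slice(offset, offset + width)
        offset += width
    return slices, offset


SLICES, FRAME_DIM = build_slices(LAYOUT)


def split_frame(frame):
    """Split one flat frame vector into its named components."""
    if len(frame) != FRAME_DIM:
        raise ValueError(f"expected {FRAME_DIM} values, got {len(frame)}")
    return {name: frame[s] for name, s in SLICES.items()}
```

Under these assumptions, `FRAME_DIM` evaluates to 201 and `split_frame` returns four components of width 3, 6, 126 and 66.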

The core network is a hybrid HY-Motion DiT. It first applies dual stream blocks that process motion latents and text tokens separately. In these blocks, each modality has its own QKV projections and MLP, and a joint attention module lets motion tokens query semantic features from text tokens while keeping modality specific structure. The network then switches to single stream blocks that concatenate motion and text tokens into one sequence and process them with parallel spatial and channel attention modules to perform deeper multimodal fusion.

For text conditioning, the system uses a dual encoder scheme. Qwen3 8B provides token level embeddings, while a CLIP-L model provides global text features. A Bidirectional Token Refiner corrects the causal attention bias of the LLM for non-autoregressive generation. These signals feed the DiT through adaptive layer normalization conditioning. Attention is asymmetric: motion tokens can attend to all text tokens, but text tokens do not attend back to motion, which prevents noisy motion states from corrupting the language representation. Temporal attention within the motion branch uses a narrow sliding window of 121 frames, which focuses capacity on local kinematics while keeping cost manageable for long clips. Full Rotary Position Embedding is applied after concatenating text and motion tokens to encode relative positions across the whole sequence.
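The asymmetric attention rule and the sliding window can be pictured as a boolean mask over the concatenated sequence. This is a sketch under stated assumptions: text tokens are placed before motion tokens, text tokens attend to each other bidirectionally, and the 121 frame window is centered on the query frame (60 frames on each side). None of these details is confirmed by the article.

```python
def build_attention_mask(num_text, num_motion, window=121):
    """Return mask[q][k]; True marks a query-key pair that may attend.

    Assumed layout: indices [0, num_text) are text tokens,
    the remaining indices are motion frames.
    """
    half = window // 2
    total = num_text + num_motion
    mask = [[False] * total for _ in range(total)]
    for q in range(total):
        for k in range(total):
            q_is_text = q < num_text
            k_is_text = k < num_text
            if q_is_text:
                # Text never attends to motion, only to other text.
                mask[q][k] = k_is_text
            elif k_is_text:
                # Motion attends to every text token.
                mask[q][k] = True
            else:
                # Motion attends to nearby motion frames only.
                mask[q][k] = abs(q - k) <= half
    return mask
```

With `build_attention_mask(2, 5, window=3)`, a motion frame sees both text tokens and its immediate neighbors, while the text rows stay blank in every motion column.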

Flow Matching, prompt rewriting and training

HY-Motion 1.0 uses Flow Matching instead of standard denoising diffusion. The model learns a velocity field along a continuous path that interpolates between Gaussian noise and real motion data. During training, the objective is a mean squared error between predicted and ground truth velocities along this path. During inference, the learned ordinary differential equation is integrated from noise to a clean trajectory, which gives stable training for long sequences and fits the DiT architecture.
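A minimal sketch of the training target and the inference loop may help. It assumes the common linear path x_t = (1 - t) x_0 + t x_1 between a noise sample x_0 and a data sample x_1, whose velocity is x_1 - x_0, and it uses plain Euler steps for integration. The article does not state which path or ODE solver HY-Motion uses, and `predict` stands in for the DiT as a hypothetical callable.

```python
def interpolate(x0, x1, t):
    """Point on the assumed linear path from noise x0 (t=0) to data x1 (t=1)."""
    return [(1 - t) * a + t * b for a, b in zip(x0, x1)]


def target_velocity(x0, x1):
    """Ground truth velocity of the linear path; constant in t."""
    return [b - a for a, b in zip(x0, x1)]


def flow_matching_loss(predict, x0, x1, t):
    """Mean squared error between predicted and target velocities at time t."""
    xt = interpolate(x0, x1, t)
    v_pred = predict(xt, t)
    v_true = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_true)) / len(v_true)


def euler_sample(predict, x0, steps=50):
    """Integrate dx/dt = predict(x, t) from t=0 to t=1 with Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for i in range(steps):
        v = predict(x, i * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x
```

In practice `x0` would be Gaussian noise with the shape of a motion clip and `predict` would also receive the text conditioning; both are omitted here to keep the sketch small.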

A separate Duration Prediction and Prompt Rewrite module improves instruction following. It uses Qwen3 30B A3B as the base model and is trained on synthetic user style prompts generated from motion captions with a VLM and LLM pipeline, for example Gemini 2.5 Pro. This module predicts a suitable motion duration and rewrites informal prompts into normalized text that is easier for the DiT to follow. It is trained first with supervised fine tuning and then refined with Group Relative Policy Optimization, using Qwen3 235B A22B as a reward model that scores semantic consistency and duration plausibility.

Training follows a 3 stage curriculum. Stage 1 performs large scale pretraining on the full 3,000 hour dataset to learn a broad motion prior and basic text-motion alignment. Stage 2 fine tunes on the 400 hour high quality set, with a smaller learning rate, to sharpen motion detail and improve semantic correctness. Stage 3 applies reinforcement learning: first Direct Preference Optimization using 9,228 curated human preference pairs sampled from about 40,000 generated pairs, then Flow GRPO with a composite reward. The reward combines a semantic score from a Text Motion Retrieval model and a physics score that penalizes artifacts like foot sliding and root drift, under a KL regularization term that keeps the policy close to the supervised model.
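To make the Stage 3 reward concrete, here is a rough sketch of a composite reward and of the group relative normalization that GRPO style methods apply to rewards from several samples of the same prompt. The weights, the additive form of the physics penalty and all function names are hypothetical; the article does not give the exact formula, and the KL regularization term is left out.

```python
def composite_reward(semantic_score, foot_slide, root_drift,
                     w_sem=1.0, w_phys=1.0):
    """Combine a semantic score with a physics penalty.

    Assumption: the physics score is the negated sum of artifact
    measures, and both weights default to 1.0 for illustration only.
    """
    physics_score = -(foot_slide + root_drift)
    return w_sem * semantic_score + w_phys * physics_score


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group of samples for the same prompt."""
    mean = sum(rewards) / len(rewards)
    var = sum((r - mean) ** 2 for r in rewards) / len(rewards)
    std = var ** 0.5
    return [(r - mean) / (std + eps) for r in rewards]
```

Samples that score above the group mean receive positive advantages and are reinforced; samples below it are pushed down.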

Benchmarks, scaling behavior and limitations

For evaluation, the team builds a test set of over 2,000 prompts that span the 6 taxonomy categories and include simple, concurrent and sequential actions. Human raters score instruction following and motion quality on a scale from 1 to 5. HY-Motion 1.0 reaches an average instruction following score of 3.24 and an SSAE score of 78.6 percent. Baseline text-to-motion systems such as DART, LoM, GoToZero and MoMask achieve scores between 2.17 and 2.31 with SSAE between 42.7 percent and 58.0 percent. For motion quality, HY-Motion 1.0 reaches 3.43 on average versus 3.11 for the best baseline.

Scaling experiments study DiT models with 0.05B, 0.46B, 0.46B trained only on 400 hours, and 1B parameters. Instruction following improves steadily with model size, with the 1B model reaching an average of 3.34. Motion quality saturates around the 0.46B scale, where the 0.46B and 1B models reach similar averages between 3.26 and 3.34. Comparing the 0.46B model trained on 3,000 hours with the 0.46B model trained only on 400 hours shows that larger data volume is key for instruction alignment, while high quality curation mainly improves realism.

Key Takeaways

- Billion scale DiT Flow Matching for motion: HY-Motion 1.0 is the first Diffusion Transformer based Flow Matching model scaled to the 1B parameter level specifically for text to 3D human motion, targeting high fidelity instruction following across diverse actions.

- Large scale, curated motion corpus: The model is pretrained on over 3,000 hours of reconstructed, mocap and animation motion data and fine tuned on a 400 hour high quality subset, all retargeted to a unified SMPL-H skeleton and organized into more than 200 motion categories.

- Hybrid DiT architecture with strong text conditioning: HY-Motion 1.0 uses a hybrid dual stream and single stream DiT with asymmetric attention, narrow band temporal attention and dual text encoders, Qwen3 8B and CLIP-L, to fuse token level and global semantics into motion trajectories.

- RL aligned prompt rewrite and training pipeline: A dedicated Qwen3 30B based module predicts motion duration and rewrites user prompts, and the DiT is further aligned with Direct Preference Optimization and Flow GRPO using semantic and physics rewards, which improves realism and instruction following beyond supervised training.
